[1] Can programming be liberated from the von Neumann style. Commun ACM, 21, 613(1978).

[2] Moore’s law. Electron Magaz, 38, 114(1965).

[3] Moore's law: Past, present and future. IEEE Spectr, 34, 52(1997).

[4] Fifty years of Moore's law. IEEE Trans Semicond Manufact, 24, 202(2011).

[5] The chips are down for Moore's law. Nature, 530, 144(2016).

[6] Hitting the memory wall. SIGARCH Comput Archit News, 23, 20(1995).

[7] In-memory computing with resistive switching devices. Nat Electron, 1, 333(2018).

[8] et al. Mixed-precision in-memory computing. Nat Electron, 1, 246(2018).

[9] The building blocks of a brain-inspired computer. Appl Phys Rev, 7, 011305(2020).

[10] et al. Memory devices and applications for in-memory computing. Nat Nanotechnol, 15, 529(2020).

[11] Nanoscale resistive switching devices for memory and computing applications. Nano Res, 13, 1228(2020).

[12] et al. Emerging memory devices for neuromorphic computing. Adv Mater Technol, 4, 1800589(2019).

[13] et al. Device and materials requirements for neuromorphic computing. J Phys D, 52, 113001(2019).

[14] Neuromemristive circuits for edge computing: A review. IEEE Trans Neural Netw Learn Syst, 31, 4(2020).

[15] et al. Low-power neuromorphic hardware for signal processing applications: A review of architectural and system-level design approaches. IEEE Signal Process Mag, 36, 97(2019).

[16] et al. A review of near-memory computing architectures: Opportunities and challenges. 2018 21st Euromicro Conference on Digital System Design (DSD), 608(2018).

[17] et al. Near-memory computing: Past, present, and future. Microprocess Microsyst, 71, 102868(2019).

[18] et al. A million spiking-neuron integrated circuit with a scalable communication network and interface. Science, 345, 668(2014).

[19] et al. DaDianNao: A machine-learning supercomputer. 2014 47th Annual IEEE/ACM International Symposium on Microarchitecture, 609(2014).

[20] et al. Loihi: A neuromorphic manycore processor with on-chip learning. IEEE Micro, 38, 82(2018).

[21] et al. Towards artificial general intelligence with hybrid Tianjic chip architecture. Nature, 572, 106(2019).

[22] Memristor – The missing circuit element. IEEE Trans Circuit Theory, 18, 507(1971).

[23] et al. Phase change memory. Proc IEEE, 98, 2201(2010).

[24] et al. Ferroelectric memories. Ferroelectrics, 104, 241(1990).

[25] et al. Spin-transfer torque magnetic random access memory (STT-MRAM). J Emerg Technol Comput Syst, 9, 1(2013).

[26] et al. Resistive switching materials for information processing. Nat Rev Mater, 5, 173(2020).

[27] et al. Recommended methods to study resistive switching devices. Adv Electron Mater, 5, 1800143(2019).

[28] et al. Redox-based resistive switching memories–nanoionic mechanisms, prospects, and challenges. Adv Mater, 21, 2632(2009).

[29] et al. Memristor crossbar arrays with 6-nm half-pitch and 2-nm critical dimension. Nat Nanotechnol, 14, 35(2019).

[30] et al. High-speed and low-energy nitride memristors. Adv Funct Mater, 26, 5290(2016).

[31] et al. Three-dimensional memristor circuits as complex neural networks. Nat Electron, 3, 225(2020).

[32] et al. Nanoscale memristor device as synapse in neuromorphic systems. Nano Lett, 10, 1297(2010).

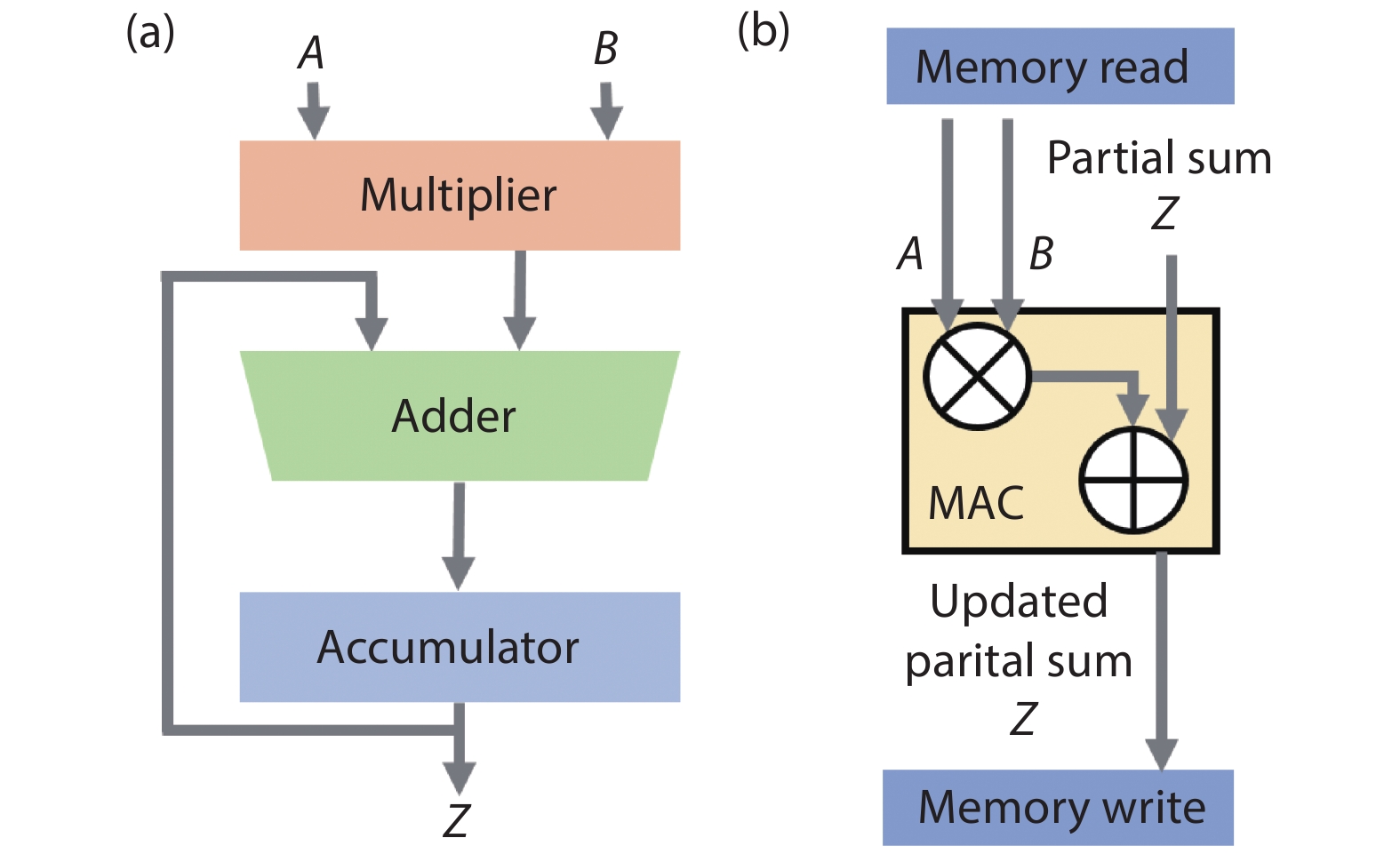

[33] High speed and area-efficient multiply accumulate (MAC) unit for digital signal prossing applications. 2007 IEEE International Symposium on Circuits and Systems, 3199(2007).

[34] Review on multiply-accumulate unit. Int J Eng Res Appl, 7, 09(2017).

[35] A high-performance multiply-accumulate unit by integrating additions and accumulations into partial product reduction process. IEEE Access, 8, 87367(2020).

[36] Efficient posit multiply-accumulate unit generator for deep learning applications. 2019 IEEE International Symposium on Circuits and Systems (ISCAS), 1(2019).

[37] et al. Review and benchmarking of precision-scalable multiply-accumulate unit architectures for embedded neural-network processing. IEEE J Emerg Sel Topics Circuits Syst, 9, 697(2019).

[38] ImageNet classification with deep convolutional neural networks. Commun ACM, 60, 84(2017).

[39] et al. Dot-product engine for neuromorphic computing: Programming 1T1M crossbar to accelerate matrix-vector multiplication. 2016 53nd ACM/EDAC/IEEE Design Automation Conference (DAC), 1(2016).

[40] et al. Memristor-based analog computation and neural network classification with a dot product engine. Adv Mater, 30, 1705914(2018).

[41] et al. Analogue signal and image processing with large memristor crossbars. Nat Electron, 1, 52(2018).

[42] et al. Algorithmic fault detection for RRAM-based matrix operations. ACM Trans Des Autom Electron Syst, 25, 1(2020).

[43] et al. Theory study and implementation of configurable ECC on RRAM memory. 2015 15th Non-Volatile Memory Technology Symposium (NVMTS), 1(2015).

[44] Low power memristor-based ReRAM design with Error Correcting Code. 17th Asia and South Pacific Design Automation Conference, 79(2012).

[45] Multilayer feedforward networks are universal approximators. Neural Networks, 2, 359(1989).

[46] Deep learning. Nature, 521, 436(2015).

[47] et al. Photo-realistic single image super-resolution using a generative adversarial network. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 105(2017).

[48] Long short-term memory. Neural Comput, 9, 1735(1997).

[49] et al. Eyeriss: an energy-efficient reconfigurable accelerator for deep convolutional neural networks. IEEE J Solid-State Circuits, 52, 127(2017).

[50]

[51] et al. Fathom: reference workloads for modern deep learning methods. 2016 IEEE International Symposium on Workload Characterization (IISWC), 1(2016).

[52] et al. Forming-free, fast, uniform, and high endurance resistive switching from cryogenic to high temperatures in W/AlOx/Al2O3/Pt bilayer memristor. IEEE Electron Device Lett, 41, 549(2020).

[53] et al. SiGe epitaxial memory for neuromorphic computing with reproducible high performance based on engineered dislocations. Nat Mater, 17, 335(2018).

[54] et al. Review of memristor devices in neuromorphic computing: Materials sciences and device challenges. J Phys D, 51, 503002(2018).

[55] et al. Recent advances in memristive materials for artificial synapses. Adv Mater Technol, 3, 1800457(2018).

[56] Memristive crossbar arrays for brain-inspired computing. Nat Mater, 18, 309(2019).

[57] et al. A comprehensive review on emerging artificial neuromorphic devices. Appl Phys Rev, 7, 011312(2020).

[58] et al. Perspective on training fully connected networks with resistive memories: Device requirements for multiple conductances of varying significance. J Appl Phys, 124, 151901(2018).

[59] et al. Resistive memory device requirements for a neural algorithm accelerator. 2016 International Joint Conference on Neural Networks (IJCNN), 929(2016).

[60] et al. Recent progress in analog memory-based accelerators for deep learning. J Phys D, 51, 283001(2018).

[61] NeuroSim: A circuit-level macro model for benchmarking neuro-inspired architectures in online learning. IEEE Trans Comput-Aided Des Integr Circuits Syst, 37, 3067(2018).

[62] et al. Resistive memory-based in-memory computing: From device and large-scale integration system perspectives. Adv Intell Syst, 1, 1900068(2019).

[63] et al. LiSiOx-based analog memristive synapse for neuromorphic computing. IEEE Electron Device Lett, 40, 542(2019).

[64] et al. HfZrOx-based ferroelectric synapse device with 32 levels of conductance states for neuromorphic applications. IEEE Electron Device Lett, 38, 732(2017).

[65] et al. TiOx-based RRAM synapse with 64-levels of conductance and symmetric conductance change by adopting a hybrid pulse scheme for neuromorphic computing. IEEE Electron Device Lett, 37, 1559(2016).

[66] et al. A large-scale in-memory computing for deep neural network with trained quantization. Integration, 69, 345(2019).

[67] A quantized training method to enhance accuracy of ReRAM-based neuromorphic systems. 2018 IEEE International Symposium on Circuits and Systems (ISCAS), 1(2018).

[68] et al. Binary neural network with 16 Mb RRAM macro chip for classification and online training. 2016 IEEE International Electron Devices Meeting (IEDM), 16.2.1(2016).

[69] et al. Implementation of multilayer perceptron network with highly uniform passive memristive crossbar circuits. Nat Commun, 9, 2331(2018).

[70] et al. Face classification using electronic synapses. Nat Commun, 8, 15199(2017).

[71] et al. A fully integrated analog ReRAM based 78.4TOPS/W compute-in-memory chip with fully parallel MAC computing. 2020 IEEE International Solid-State Circuits Conference (ISSCC), 500(2020).

[72] et al. Efficient and self-adaptive in situ learning in multilayer memristor neural networks. Nat Commun, 9, 2385(2018).

[73]

[74] et al. A fully integrated reprogrammable memristor–CMOS system for efficient multiply–accumulate operations. Nat Electron, 2, 290(2019).

[75]

[76] et al. Deep residual learning for image recognition. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 770(2016).

[77] et al. Error-reduction controller techniques of TaOx-based ReRAM for deep neural networks to extend data-retention lifetime by over 1700x. 2018 IEEE International Memory Workshop (IMW), 1(2018).

[78] et al. High-precision symmetric weight update of memristor by gate voltage ramping method for convolutional neural network accelerator. IEEE Electron Device Lett, 41, 353(2020).

[79] Better performance of memristive convolutional neural network due to stochastic memristors. International Symposium on Neural Networks, 39(2019).

[80] et al. Impacts of state instability and retention failure of filamentary analog RRAM on the performance of deep neural network. IEEE Trans Electron Devices, 66, 4517(2019).

[81] et al. Strategies to improve the accuracy of memristor-based convolutional neural networks. IEEE Trans Electron Devices, 67, 895(2020).

[82] Training deep convolutional neural networks with resistive cross-point devices. Front Neurosci, 11, 538(2017).

[83] et al. Performance impacts of analog ReRAM non-ideality on neuromorphic computing. IEEE Trans Electron Devices, 66, 1289(2019).

[84] Demonstration of convolution kernel operation on resistive cross-point array. IEEE Electron Device Lett, 37, 870(2016).

[85] et al. Implementation of convolutional kernel function using 3-D TiOx resistive switching devices for image processing. IEEE Trans Electron Devices, 65, 4716(2018).

[86] et al. Demonstration of 3D convolution kernel function based on 8-layer 3D vertical resistive random access memory. IEEE Electron Device Lett, 41, 497(2020).

[87] et al. Fully hardware-implemented memristor convolutional neural network. Nature, 577, 641(2020).

[88] et al. CMOS-integrated memristive non-volatile computing-in-memory for AI edge processors. Nat Electron, 2, 420(2019).

[89] et al. Embedded 1-Mb ReRAM-based computing-in-memory macro with multibit input and weight for CNN-based AI edge processors. IEEE J Solid-State Circuits, 55, 203(2020).

[90]

[91] et al. Demonstration of generative adversarial network by intrinsic random noises of analog RRAM devices. 2018 IEEE International Electron Devices Meeting (IEDM), 3.4.1(2018).

[92] et al. Long short-term memory networks in memristor crossbar arrays. Nat Mach Intell, 1, 49(2019).

[93]

[94] A memristor-based long short term memory circuit. Analog Integr Circ Sig Process, 95, 467(2018).

[95] et al. Memristive LSTM network for sentiment analysis. IEEE Trans Syst Man Cybern: Syst, 1(2019).

[96] A survey on LSTM memristive neural network architectures and applications. Eur Phys J Spec Top, 228, 2313(2019).

[97] et al. A parallel RRAM synaptic array architecture for energy-efficient recurrent neural networks. 2018 IEEE International Workshop on Signal Processing Systems (SiPS), 13(2018).

[98] et al. A general memristor-based partial differential equation solver. Nat Electron, 1, 411(2018).

[99]

[100] et al. Solving matrix equations in one step with cross-point resistive arrays. PNAS, 116, 4123(2019).

[101] et al. In-memory PageRank accelerator with a cross-point array of resistive memories. IEEE Trans Electron Devices, 67, 1466(2020).

[102]

[103] et al. Time complexity of in-memory solution of linear systems. IEEE Trans Electron Devices, 67, 2945(2020).

[104] et al. In-memory eigenvector computation in time O(1). Adv Intell Syst, 2, 2000042(2020).

[105]

[106] et al. Chip-scale optical matrix computation for PageRank algorithm. IEEE J Sel Top Quantum Electron, 26, 1(2020).

[107] et al. Memristive and CMOS devices for neuromorphic computing. Materials, 13, 166(2020).

[108] et al. Equivalent-accuracy accelerated neural-network training using analogue memory. Nature, 558, 60(2018).

[109] et al. Ferroelectric FET analog synapse for acceleration of deep neural network training. 2017 IEEE International Electron Devices Meeting (IEDM), 6.2.1(2017).

[110] et al. Fast, energy-efficient, robust, and reproducible mixed-signal neuromorphic classifier based on embedded NOR flash memory technology. 2017 IEEE International Electron Devices Meeting (IEDM), 6.5.1(2017).

[111] et al. Visual pattern extraction using energy-efficient “2-PCM synapse” neuromorphic architecture. IEEE Trans Electron Devices, 59, 2206(2012).

[112] et al. Phase change memory as synapse for ultra-dense neuromorphic systems: Application to complex visual pattern extraction. 2011 International Electron Devices Meeting, 4.4.1(2011).

[113] et al. Experimental demonstration and tolerancing of a large-scale neural network (165 000 synapses) using phase-change memory as the synaptic weight element. IEEE Trans Electron Devices, 62, 3498(2015).

[114] et al. The impact of resistance drift of phase change memory (PCM) synaptic devices on artificial neural network performance. IEEE Electron Device Lett, 40, 1325(2019).

[115] et al. Accelerating deep neural networks with analog memory devices. 2020 IEEE International Memory Workshop (IMW), 1(2020).

[116] et al. Ultra-low power Hf0.5Zr0.5O2 based ferroelectric tunnel junction synapses for hardware neural network applications. Nanoscale, 10, 15826(2018).

[117] et al. Learning through ferroelectric domain dynamics in solid-state synapses. Nat Commun, 8, 14736(2017).

[118]

[119] et al. Exploiting hybrid precision for training and inference: A 2T-1FeFET based analog synaptic weight cell. 2018 IEEE International Electron Devices Meeting (IEDM), 3.1.1(2018).

[120] et al. High-density and highly-reliable binary neural networks using NAND flash memory cells as synaptic devices. 2019 IEEE International Electron Devices Meeting (IEDM), 38.4.1(2019).

[121]

[122] et al. Efficient and robust spike-driven deep convolutional neural networks based on NOR flash computing array. IEEE Trans Electron Devices, 67, 2329(2020).

[123] et al. Storage reliability of multi-bit flash oriented to deep neural network. 2019 IEEE International Electron Devices Meeting (IEDM), 38.2.1(2019).