[1] Y Chen, T Krishna, J Emer et al. Eyeriss: An energy-efficient reconfigurable accelerator for deep convolutional neural networks. IEEE J Solid-State Circuits, 52, 127(2017).

[2]

[3]

[4] J Cong, B Xiao. Minimizing computation in convolutional neural networks. Artificial Neural Networks and Machine Learning, 281(2014).

[5] S Yin, P Ouyang, S Tang et al. A high energy efficient reconfigurable hybrid neural network processor for deep learning applications. IEEE J Solid-State Circuits, 53, 968(2017).

[6] O Russakovsky, J Deng, H Su et al. ImageNet large scale visual recognition challenge. Int J Comput Vision, 115, 211(2015).

[7]

[8]

[9] K Simonyan, A Zisserman. Very deep convolutional networks for large-scale image recognition. arXiv: 1409.1556(2014).

[10]

[11]

[12]

[13] V Mnih, K Kavukcuoglu, D Silver et al. Playing Atari with deep reinforcement learning. arXiv: 1312.5602(2013).

[14] V Mnih, K Kavukcuoglu, D Silver et al. Human-level control through deep reinforcement learning. Nature, 518, 529(2015).

[15] D Silver, A Huang, C Maddison et al. Mastering the game of Go with deep neural networks and tree search. Nature, 529, 484(2016).

[16] D Silver, J Schrittwieser, K Simonyan et al. Mastering the game of Go without human knowledge. Nature, 550, 354(2017).

[17]

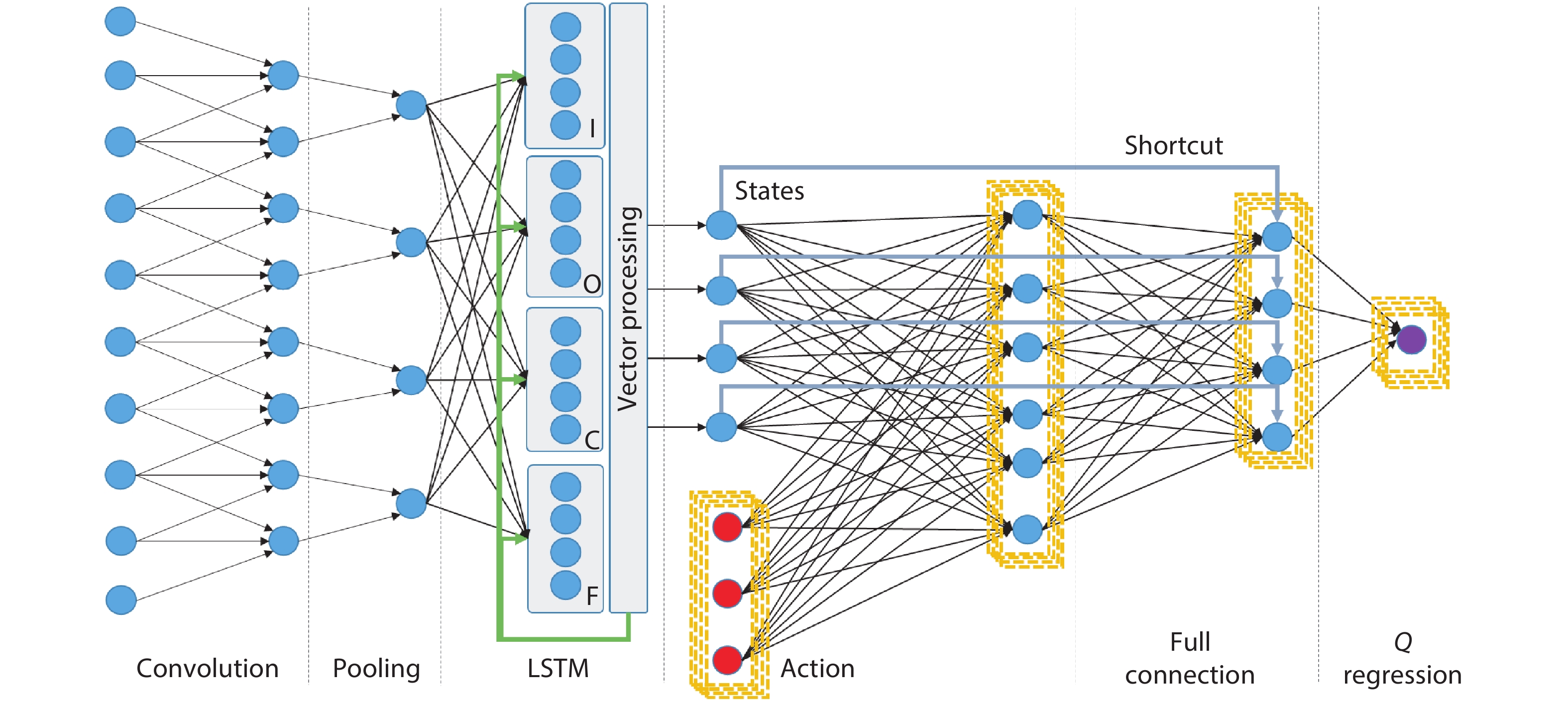

[18] F Gers, J Schmidhuber, F Cummins. Learning to forget: Continual prediction with LSTM. 9th International Conference on Artificial Neural Networks, 850(1999).

[19]

[20]

[21]

[22]

[23]

[24]

[25]

[26]

[27]

[28]

[29]

[30] S Yin, P Ouyang, S Tang et al. 1.06-to-5.09 TOPS/W reconfigurable hybrid-neural-network processor for deep learning applications. Symposium on VLSI Circuits(2017).