- Advanced Photonics Nexus

- Vol. 3, Issue 3, 036005 (2024)

Abstract

1 Introduction

Light-field fluorescence microscopy (LFM) serves as an elegant, compact solution for long-term, high-speed volumetric microscopy owing to its low photobleaching and simultaneous 3D imaging, and it is suitable for biological applications such as observing neuron activity.1

In light-field photography of macroscopic scenes, a light-field image can be compressed according to its 4D structure by making full use of angular continuity, in a manner that draws on the use of temporal continuity in video compression techniques.36,37 In addition, coding a subset of the subaperture images of light-field data together with a depth map can also greatly improve the efficiency of light-field compression.38 However, both methods operate on 8-bit RGB light-field images with lossy compression, since the loss of some information (e.g., tiny structures) is tolerable in macro photographic data. Therefore, existing methods cannot be directly applied to the lossless compression of microscopy data with low signal-to-noise ratio (SNR) and high dynamic range (HDR), such as fluorescence microscopy data.

Here, we propose a 4D phase-space continuity-enhanced bzip2 (PC-bzip2) lossless compression method as a lightweight, high-throughput compression tool for LFM data. By applying a prediction based on 4D phase-space continuity together with a graphics processing unit (GPU)-based fast entropy judgment, we obtain a predicted image of smaller size and then compress it with a multicore CPU-based high-speed lossless compression method. Compared with the original bzip2, we achieve an almost 10% improvement in compression ratio while retaining high-speed compression. A fast MATLAB interface and an ImageJ plug-in are provided for ease of use. To demonstrate the performance of our method, we tested fluorescence bead data and different types of cell data under different light conditions. Moreover, we show the superior compression ratio of our method on time series data of zebrafish blood vessels by introducing temporal continuity prediction.

2 Methods

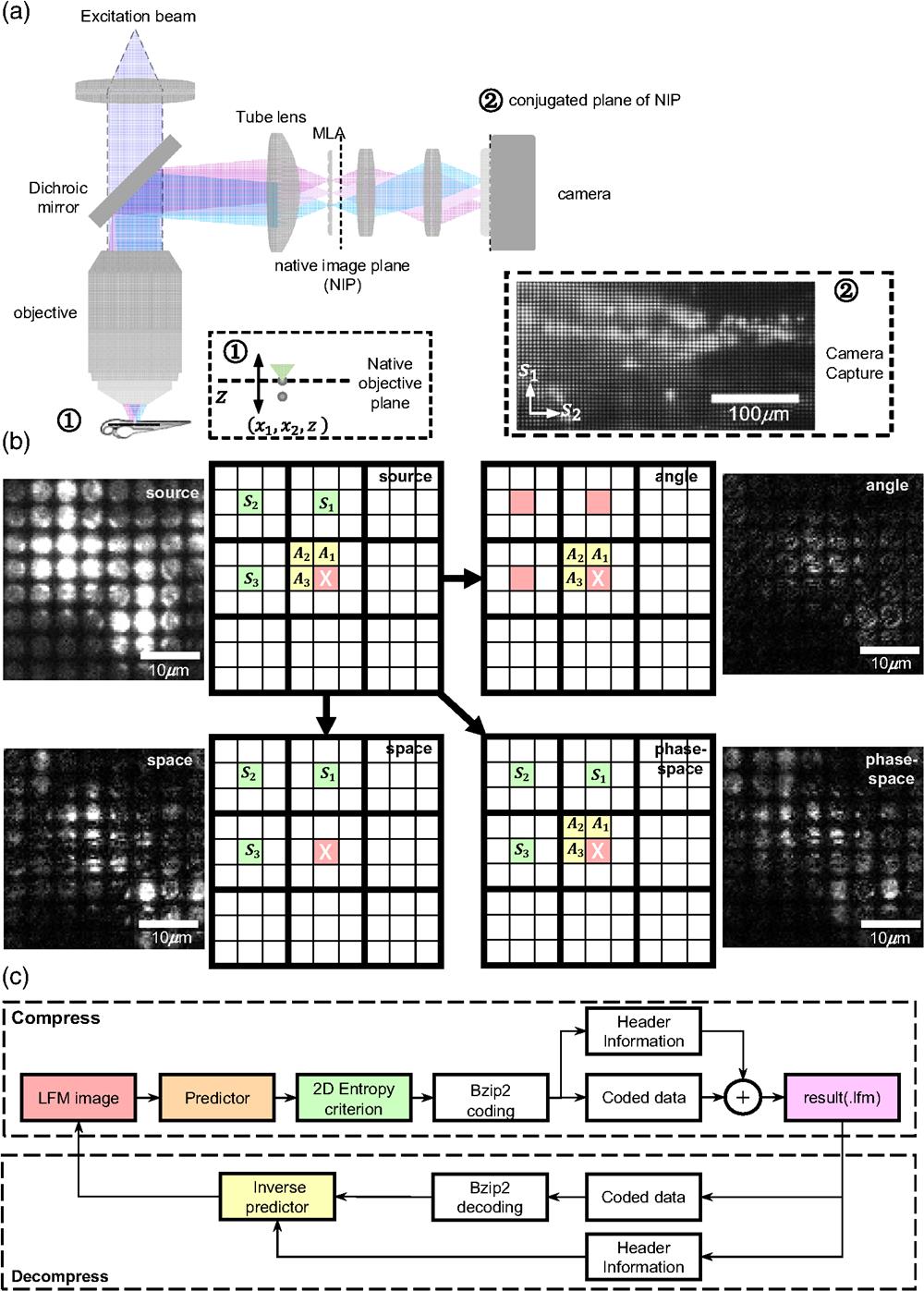

We built a light-field fluorescence microscope based on a wide-field microscope by inserting a microlens array at the image plane of the tube lens [Fig. 1(a)]. In this way, the light field of the sample is formed behind the microlens array and is then relayed to the image sensor for recording [Fig. 1(a)]. A sample zebrafish image captured by the light-field microscope shows the basic structure of the data. The LFM data has a 4D structure with not only spatial continuity but also angular continuity. Angular continuity characterizes the relationship between adjacent pixels within a microlens, while spatial continuity characterizes the relationship between pixels at the same position in adjacent microlenses. Traditional image compression algorithms mainly make predictions by using adjacent pixels. However, the predictors of a light field are no longer limited by this constraint.
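The 4D structure described above can be made concrete with a small indexing sketch. It assumes, purely for illustration, that every microlens covers a square block of `pitch` x `pitch` sensor pixels aligned with the pixel grid; the function names and the pitch value are hypothetical and are not taken from the authors' code.

```python
def raw_to_phase_space(row, col, pitch):
    """Map a raw sensor pixel to (lens_row, lens_col, u, v).

    (lens_row, lens_col) identifies the microlens; (u, v) is the pixel
    position inside that microlens.
    """
    lens_row, u = divmod(row, pitch)
    lens_col, v = divmod(col, pitch)
    return lens_row, lens_col, u, v


def phase_space_to_raw(lens_row, lens_col, u, v, pitch):
    """Inverse mapping back to raw sensor coordinates."""
    return lens_row * pitch + u, lens_col * pitch + v


pitch = 13  # hypothetical: 13 x 13 sensor pixels behind each microlens
print(raw_to_phase_space(27, 40, pitch))  # (2, 3, 1, 1)
```

Under this layout, moving one raw pixel changes only `(u, v)` within a microlens, whereas moving `pitch` raw pixels keeps `(u, v)` fixed and steps to the neighbouring microlens.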

Figure 1. PC-bzip2 framework for high-speed lossless LFM data compression. (a) Schematic of the light-field microscopy system and LFM data structure. (b) Schematic of the predictors based on spatial continuity, angular continuity, and 4D phase-space continuity. (c) The complete framework, including the PC-bzip2 compression and decompression processes. The compression pipeline consists of the prediction part, the 2D image entropy criterion, multicore CPU-accelerated bzip2 coding, and the packing of header information and coded data. The decompression pipeline consists of unpacking the header information, multicore CPU-accelerated bzip2 decoding, and inverse prediction.

In this case, the angular or spatial continuity can be applied alone for prediction. Moreover, we can make full use of the continuity in both the angular and spatial domains, which we name phase-space continuity, to make predictions for LFM data [Fig. 1(b)], thereby theoretically improving the compression ratio. Based on the phase-space continuity, we therefore propose a PC-bzip2 compression framework for lossless LFM data compression [Fig. 1(c)]. The main idea of this framework is to add a predictor based on prior knowledge of the LFM data before the multicore CPU-accelerated bzip2 compression. In addition, we propose a two-dimensional (2D) image entropy criterion to determine which predictor should be used to optimize the compression ratio. These two parts, as the preprocessing unit before bzip2 compression, ensure that each compressed image has as little information redundancy as possible. Finally, the header information, including the selected predictor, and the coded data are packaged and stored in the file. In the decompression process, after multicore CPU-accelerated bzip2 decompression, we only need to select the corresponding inverse predictor according to the header information to restore the original LFM data (Fig. 1).

To sum up, the proposed PC-bzip2 lossless compression pipeline can be decomposed into four parts: the prediction part, the 2D image entropy criterion, multicore CPU-accelerated bzip2 coding, and the packing of header information and coded data. We describe the details of each part in the following sections. The detailed schematic of the algorithm is shown in Fig. 1(c).

2.1 Prediction part

The prediction of pixel values leverages the interpixel continuity to reduce redundant information. Considering the 4D structure of light-field microscopy (LFM) data, characterized by 4D phase-space continuity, we separately predict the pixel value employing spatial continuity, angular continuity, and 4D phase-space continuity and compare their respective performances. When using spatial continuity exclusively, the pixel value is predicted by the neighboring pixels situated to the left, top, and top left. When employing angular continuity separately, the pixel value is predicted by the pixels in the same position from the neighboring microlenses located to the left, top, and top left. When incorporating 4D phase-space continuity, however, the predicted pixel value is not only related to neighboring pixels, but also influenced by those in adjacent microlenses. The schematic of these different predictors is shown in Fig. 1(b).
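A minimal sketch of the three kinds of predictor follows. The exact prediction formulas are not given in this section, so the code makes several assumptions: it uses the common `a + b - c` form (left + top - top-left), it assumes a hypothetical microlens `pitch` in sensor pixels, and it combines the two neighbourhoods by an integer mean to stand in for the phase-space predictor. The two neighbourhoods are distinguished only by their stride: `step=1` uses the immediately neighbouring pixels, and `step=pitch` uses the pixels at the same position in the neighbouring microlenses. Residuals are stored modulo 2^16 so that the inverse is exact.

```python
MOD = 1 << 16  # 16-bit samples


def predict(img, r, c, step):
    """a + b - c prediction from the left, top and top-left neighbours
    located `step` pixels away; neighbours outside the image count as 0."""
    def px(rr, cc):
        return img[rr][cc] if rr >= 0 and cc >= 0 else 0
    return px(r, c - step) + px(r - step, c) - px(r - step, c - step)


def combined(img, r, c, pitch):
    # Assumed combination: integer mean of the stride-1 and stride-pitch predictions.
    return (predict(img, r, c, 1) + predict(img, r, c, pitch)) // 2


def forward(img, pitch):
    """Replace every pixel by its prediction residual (mod 2^16)."""
    return [[(img[r][c] - combined(img, r, c, pitch)) % MOD
             for c in range(len(img[0]))] for r in range(len(img))]


def inverse(res, pitch):
    """Rebuild the image in raster order; every neighbour used by the
    predictor has already been restored when it is needed."""
    img = [[0] * len(res[0]) for _ in res]
    for r in range(len(res)):
        for c in range(len(res[0])):
            img[r][c] = (res[r][c] + combined(img, r, c, pitch)) % MOD
    return img


img = [[100 + r + c for c in range(6)] for r in range(6)]
assert inverse(forward(img, pitch=3), pitch=3) == img  # lossless round trip
```

Because the predictor only looks at pixels that precede the current one in raster order, the decoder can recompute exactly the same prediction, which is what makes the scheme lossless.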

2.2 2D image entropy criterion

A 2D image entropy criterion is used to determine whether predicting the pixel values will improve the compression ratio. As shown in Fig. 2, each pixel has not only three angle-adjacent pixels but also three spatially adjacent pixels. Therefore, the value of the pixel can be predicted by considering both of these continuities. This process can be simply expressed as

Figure 2. Main functions used in this work for LFM data compression. (a) Schematic of LFM data structure.

We further realign the 16-bit predicted image as an 8-bit data string and apply a GPU-accelerated Burrows-Wheeler transform (BWT) to the 8-bit data string, which can be expressed as

Figure 3. Schematic of the 2D image entropy calculation. For the BWT, the realigned 16-bit image sequence is treated as an 8-bit sequence by splitting each 16-bit value into its high and low 8 bits. After the BWT, every two adjacent 8-bit values are combined into a 16-bit value for histogram statistics.
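The entropy calculation outlined in Fig. 3 can be sketched on the CPU as follows. This is an illustration under stated assumptions rather than the authors' implementation: a naive sorted-rotation BWT stands in for the GPU-accelerated one, the high byte is assumed to come first when splitting, the histogram is summarised by its Shannon entropy, and the helper names (`split_bytes`, `entropy_after_bwt`, `choose`) are hypothetical.

```python
import math
from collections import Counter


def split_bytes(values):
    """Split 16-bit values into a byte string, high byte first (assumed order)."""
    out = bytearray()
    for v in values:
        out += bytes(((v >> 8) & 0xFF, v & 0xFF))
    return bytes(out)


def bwt(data):
    """Naive Burrows-Wheeler transform via sorted rotations (small inputs only)."""
    n = len(data)
    doubled = data + data
    order = sorted(range(n), key=lambda i: doubled[i:i + n])
    return bytes(doubled[i + n - 1] for i in order)


def entropy_after_bwt(values):
    """Shannon entropy, in bits per 16-bit symbol, of byte pairs after the BWT."""
    t = bwt(split_bytes(values))
    pairs = [(t[i] << 8) | t[i + 1] for i in range(0, len(t), 2)]
    total = len(pairs)
    counts = Counter(pairs)
    return -sum(k / total * math.log2(k / total) for k in counts.values())


def choose(candidates):
    """candidates maps a predictor name to its list of 16-bit residuals;
    the name with the lowest entropy is returned."""
    return min(candidates, key=lambda name: entropy_after_bwt(candidates[name]))


print(bwt(b"banana"))  # b'nnbaaa'
```

Including the unpredicted image among the candidates lets the same comparison decide whether any prediction is worthwhile at all.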

2.3 Multicore CPU accelerated bzip2 coding and packing

After the 2D image entropy criterion has confirmed that the prediction improves the compression ratio, the predicted image is compressed with the multicore CPU-accelerated bzip2 algorithm. We reused the KLB code for this purpose.7 KLB is currently one of the most advanced methods for bzip2 acceleration. The main idea of KLB is to split the data into multiple blocks and compress them in parallel, making the most of the multicore design of modern computers. The KLB compression process consists of three steps. First, the data to be compressed is divided into several blocks. The number of blocks corresponds to the number of threads supported by the computer processor, which represents the maximum number of parallel operations. Second, the blocks are compressed simultaneously using the bzip2 algorithm, which is the main reason for the speed improvement of KLB over general bzip2. Finally, the compression results of the blocks from the previous step are written into the output file in turn, together with the relevant information about the image and the blocks. Since we use different predictors for different images according to the result of the 2D image entropy judgment, we need to pack the predictor information into the final compressed file. Here, the predictor information is represented by an 8-bit unsigned integer in the header of the final compressed file. The mapping table of predictors can be viewed in Fig. 2.
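The three steps above, plus the predictor byte in the header, can be sketched with Python's standard `bz2` module. The header layout used here (one unsigned byte for the predictor, a block count, then the compressed size of each block) is a hypothetical format chosen for illustration and is not the KLB file format; a thread pool stands in for the multicore implementation.

```python
import bz2
import os
import struct
from concurrent.futures import ThreadPoolExecutor


def pack(data, predictor_id, n_blocks=None):
    """Split `data` into blocks, compress them in parallel, prepend a header."""
    n_blocks = n_blocks or os.cpu_count() or 1
    size = max(1, -(-len(data) // n_blocks))  # ceiling division
    blocks = [data[i:i + size] for i in range(0, len(data), size)] or [b""]
    with ThreadPoolExecutor(max_workers=n_blocks) as pool:
        coded = list(pool.map(bz2.compress, blocks))
    # Hypothetical header: predictor id (uint8), block count, per-block sizes.
    header = struct.pack("<BI", predictor_id, len(coded))
    header += b"".join(struct.pack("<I", len(c)) for c in coded)
    return header + b"".join(coded)


def unpack(blob):
    """Read the header, decompress the blocks in parallel, return (id, data)."""
    predictor_id, n = struct.unpack_from("<BI", blob, 0)
    offset = struct.calcsize("<BI")
    sizes = struct.unpack_from("<%dI" % n, blob, offset)
    offset += 4 * n
    chunks = []
    for s in sizes:
        chunks.append(blob[offset:offset + s])
        offset += s
    with ThreadPoolExecutor(max_workers=max(1, n)) as pool:
        data = b"".join(pool.map(bz2.decompress, chunks))
    return predictor_id, data


payload = bytes(range(256)) * 64
blob = pack(payload, predictor_id=3, n_blocks=4)
assert unpack(blob) == (3, payload)
```

On decompression, the returned predictor id tells the caller which inverse predictor to apply to the restored residuals.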

3 Results

3.1 Different Compression Ratios for Different SNR Images

We investigated different predictors in the compression algorithm. The schematic of the LFM data is shown in Fig. 2(a). Among the seven types of predictors mainly applied in traditional compression methods (such as PNG and JPEG), four of them utilize more than one adjacent pixel for predictor calculation [Fig. 2(b)]. We then extend these predictors to the LFM data [Fig. 2(c)], including angle prediction, space prediction, and phase-space prediction.

To verify the performance of our PC-bzip2 compression experimentally, we imaged fluorescent beads and MCF-10A cells separately with the LFM under different exposure times [Fig. 4(a)]. The images of the fluorescent beads represent typical LFM data without continuous structural information, while the images of the MCF-10A cells represent typical LFM data with better structural information. Different exposure times give the images different SNRs. We then tested the performance of all the phase-space predictors [Figs. 2(b) and 2(c)] on these two types of LFM images with different SNRs. We find that as the image SNR increases (i.e., more information is detected within the image), the compression ratio gradually increases, which corresponds to an increase in the number of bits required per dimension for all the predictors [Figs. 4(b) and 4(c)]. For the images of the fluorescent beads without continuous structural information, the prediction step did not improve the final compression ratio [Fig. 4(c)]. For the MCF-10A cell images with better continuous structural information, however, the prediction step significantly improves the final compression ratio [Fig. 4(b)], and the maximum improvement reaches 0.77 bits/dim.
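The bits/dim figures quoted above can be related to file sizes with a short calculation. The sketch assumes the common definition of bits per dimension, namely the number of compressed bits divided by the number of pixels; the image size used in the example is hypothetical and is not taken from the experiments.

```python
def bits_per_dim(compressed_bytes, n_pixels):
    """Compressed bits divided by the number of pixels (assumed definition)."""
    return 8 * compressed_bytes / n_pixels


def bytes_saved(delta_bits_per_dim, n_pixels):
    """File-size reduction that corresponds to a given bits/dim improvement."""
    return delta_bits_per_dim * n_pixels / 8


n_pixels = 2048 * 2048  # hypothetical sensor size
print(bits_per_dim(4_194_304, n_pixels))  # 8.0
print(bytes_saved(0.77, n_pixels))        # roughly 0.4 MB per frame
```

For 16-bit data, an uncompressed frame costs 16 bits/dim under this definition, so the reported values can be read directly against that baseline.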

Figure 4. Comparing different compression ratios on images of different specimens with different SNRs. (a) Two groups of samples are captured at different exposure times (the change of the exposure time results in different SNRs on images). The beads images are obtained by imaging green fluorescent beads with the LFM, and MCF-10A images are obtained by imaging MCF-10A cells with the LFM. The image in the lower-right corner is a close-up marked by the yellow box. (b) The performance comparison on MCF-10A images with different predictors. The automatic criterion can accurately choose

In the compression tests of both fluorescent bead images and MCF-10A cell images, the 2D entropy criterion accurately selects the predictor with the best compression ratio [Figs. 4(b) and 4(c)]. We further tested a single predictor using angle prediction, space prediction, and phase-space prediction separately [Fig. 4(d)], and the phase-space predictor showed the highest compression ratio. To further demonstrate the enhancement of the compression ratio in our PC-bzip2, we compared PC-bzip2 with KLB on the MCF-10A images in terms of time consumption (including compression and decompression) and compression ratio. With almost no additional time consumption, the compression ratio can be increased by 10% [Fig. 4(e)]. The small remaining time gap can be further reduced by upgrading the GPU.

3.2 Extension to Time Series Data

Since the recording of biological dynamic processes such as neuron activity by LFM usually lasts for a long time at a high frame rate, massive quantities of time series data are generated. Therefore, we have extended our method to the time dimension to further improve its practicality (Fig. S2 in the Supplementary Material). When performing lossless compression on time series LFM data, we add temporal continuity on top of the original 4D phase-space continuity. First, in order to find the best predictor for a single frame, the first frame is processed according to the PC-bzip2 compression method [Fig. 1(c)], and the predictor selected by the 2D entropy criterion is used as the main predictor in the subsequent frames. For the subsequent frames, we introduce an interframe predictor combined with the single-image predictor to further reduce redundant information in the time series data (Fig. S3 in the Supplementary Material). Once the predictor for a single image is determined by the first frame, all subsequent frames are predicted by introducing temporal continuity on the basis of this predictor. After all the time series data are predicted and symbolized, the result is divided into several blocks (equal in number to the maximum number of threads supported by the computer) and then compressed by bzip2 in parallel. The compression result and header information are written to the file.
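One way to combine an interframe predictor with a single-image predictor is sketched below. The actual combination is described in the Supplementary Material and is not reproduced here, so this is only an assumed variant: the first frame is coded with an intra-frame `a + b - c` predictor, and every later frame is first differenced against the previous frame (mod 2^16) and then passed through the same intra-frame predictor. All function names are hypothetical.

```python
MOD = 1 << 16  # 16-bit samples


def intra_residual(img):
    """a + b - c residual from left, top and top-left neighbours (0 outside)."""
    def px(r, c):
        return img[r][c] if r >= 0 and c >= 0 else 0
    return [[(img[r][c] - (px(r, c - 1) + px(r - 1, c) - px(r - 1, c - 1))) % MOD
             for c in range(len(img[0]))] for r in range(len(img))]


def intra_restore(res):
    """Inverse of intra_residual, rebuilding the image in raster order."""
    img = [[0] * len(res[0]) for _ in res]

    def px(r, c):
        return img[r][c] if r >= 0 and c >= 0 else 0

    for r in range(len(res)):
        for c in range(len(res[0])):
            pred = px(r, c - 1) + px(r - 1, c) - px(r - 1, c - 1)
            img[r][c] = (res[r][c] + pred) % MOD
    return img


def encode_series(frames):
    """First frame: intra only. Later frames: temporal difference, then intra."""
    out = [intra_residual(frames[0])]
    for prev, cur in zip(frames, frames[1:]):
        diff = [[(a - b) % MOD for a, b in zip(rc, rp)] for rc, rp in zip(cur, prev)]
        out.append(intra_residual(diff))
    return out


def decode_series(residuals):
    frames = [intra_restore(residuals[0])]
    for res in residuals[1:]:
        diff = intra_restore(res)
        prev = frames[-1]
        frames.append([[(d + p) % MOD for d, p in zip(rd, rp)]
                       for rd, rp in zip(diff, prev)])
    return frames


frames = [[[100, 101], [102, 103]], [[101, 101], [102, 65535]], [[0, 5], [7, 9]]]
assert decode_series(encode_series(frames)) == frames  # lossless round trip
```

For a static scene the temporal difference is zero everywhere, so every frame after the first reduces to all-zero residuals, which is the redundancy the time extension is meant to remove.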

To verify the advantage of the extended method, we imaged live larval zebrafish, recording 20 frames of the zebrafish heartbeat under different exposure times [Fig. 5(a)]. These recordings were then compressed using spatial continuity, angular continuity, and phase-space continuity separately, as well as their respective extensions to the time dimension. Introducing time continuity into the predictor improves the compression ratio [Fig. 5(b)]. Without time continuity, the compression ratio using the spatial predictor is better than that using the angular predictor. In contrast, when combined with time continuity, the angular predictor performs better, which suggests that the angular continuity is higher than the spatial continuity in the time dimension. Compared with using phase-space continuity alone, our method achieves higher compression ratios when combined with time continuity [Fig. 5(c)]. We also compared our methods with the state-of-the-art lossless compression method KLB. With or without temporal continuity, our methods are significantly better than KLB in compression ratio [Fig. 5(c)]. Comparing the compression results under different SNRs shows that the final compression ratios mainly depend on the amount of information contained in the video. To demonstrate that our method is truly lossless on time series, we computed local statistics on images randomly selected from the decompressed time series under different SNRs [Figs. 6(c) and 6(d)], which show that the compression and decompression processes are truly lossless, both in the visual appearance of the image and in its gray-scale distribution.

Figure 5. Compression performance by extending PC-bzip2 to the time dimension. (a) Two sets of 20-frame videos are obtained by imaging larval zebrafish with different laser powers using the LFM [

Figure 6. Comparison of PC-bzip2 compression performance on image data and time series image data. (a) Comparison of lossy compression and lossless compression on biomedical image data captured by light-field microscope. The left column shows the decompressed larval zebrafish image by B3D lossy compression, and the right column shows the decompressed larval zebrafish image by PC-bzip2 lossless compression. The upper right corner shows the magnified areas marked by the yellow box, and the gray-scale histogram of the areas marked by the yellow box is shown below. (b) Comparison of PC-bzip2 decompressed results and PC-bzip2 compression input image of MCF-10A cells image data with an exposure time of 1024 ms. The left column shows the PC-bzip2 compression input image and its gray-scale histogram. The right column shows the PC-bzip2 decompressed image and its gray-scale histogram. (c) and (d) The performance of PC-bzip2 extended to the time dimension, where the images are randomly selected from the time series. (c) A larval zebrafish image with laser power of

3.3 Lossless Compression for Image and Video Data

To visually demonstrate how our PC-bzip2 method generalizes to different data formats, including image data and time series (video) data, Fig. 6 shows multiple comparisons of images and their gray-scale histograms before and after PC-bzip2 compression. We first compared the decompressed larval zebrafish images compressed by B3D and by PC-bzip2, which indicates that our method realizes truly lossless compression [Fig. 6(a)]. We then compared the decompressed MCF-10A image data [Fig. 6(b)] compressed by PC-bzip2 with the original images. Similarly, we compared images from the time series zebrafish data acquired at different excitation powers [Figs. 6(c) and 6(d)]. The results demonstrate that PC-bzip2 achieves efficient lossless compression and decompression for both image data and time series image data.

4 Conclusion

We have developed a 4D phase-space continuity-enhanced bzip2 lossless compression method to realize high-speed and efficient LFM data compression. By adding a suitable predictor, determined by the 2D image entropy criterion, before bzip2 or KLB compression, we achieved an almost 10% improvement in compression ratio with only a small increase in time. We demonstrated the performance of the PC-bzip2 algorithm on fluorescence bead data and cell data with different SNRs. Since the recording of multicellular organisms by LFM usually generates huge time series data, we further extended our method to the time dimension to improve its practicality. We validated the temporal extension of PC-bzip2 on time series recordings of zebrafish blood vessels. Compared with the predictors in traditional compression methods, we fully exploited the structure of the LFM image or video to achieve high compression performance. We provide a fast MATLAB interface and an ImageJ plug-in for ease of use. PC-bzip2 can become a promising and lightweight tool for any light-field microscope.

Since the improvement of the compression ratio in PC-bzip2 is mainly determined by the redundancy of the LFM data, the PC-bzip2 algorithm will not show a significant improvement on all samples. Therefore, in our method, we use the 2D image entropy criterion either to choose a suitable predictor or to apply the bzip2 compression algorithm directly without a predictor, so that the method adapts to different samples. Further improvements may include extending the algorithm to the spectral dimension and reducing the time consumption of the 2D image entropy criterion. We believe such improvements in compression performance will bring advanced data storage capacity, in the form of a lightweight tool, to the broad microscopy community, facilitating mass data storage and processing in various biomedical applications such as the observation of multicellular organisms.

Bo Xiong is a postdoc at Peking University. He received his BS and PhD degrees in control science and engineering from Tsinghua University in 2015 and 2022, respectively. He is the author of more than 10 journal papers. His current research interests include computational imaging & microscopy, and neuromorphic vision. He is a member of OPTICA and IEEE.

Biographies of the other authors are not available.
