Xiaojun He, Xuan Liu, Xian Wei. Classification Method of High-Resolution Remote Sensing Scene Image Based on Dictionary Learning and Vision Transformer[J]. Laser & Optoelectronics Progress, 2023, 60(14): 1410019

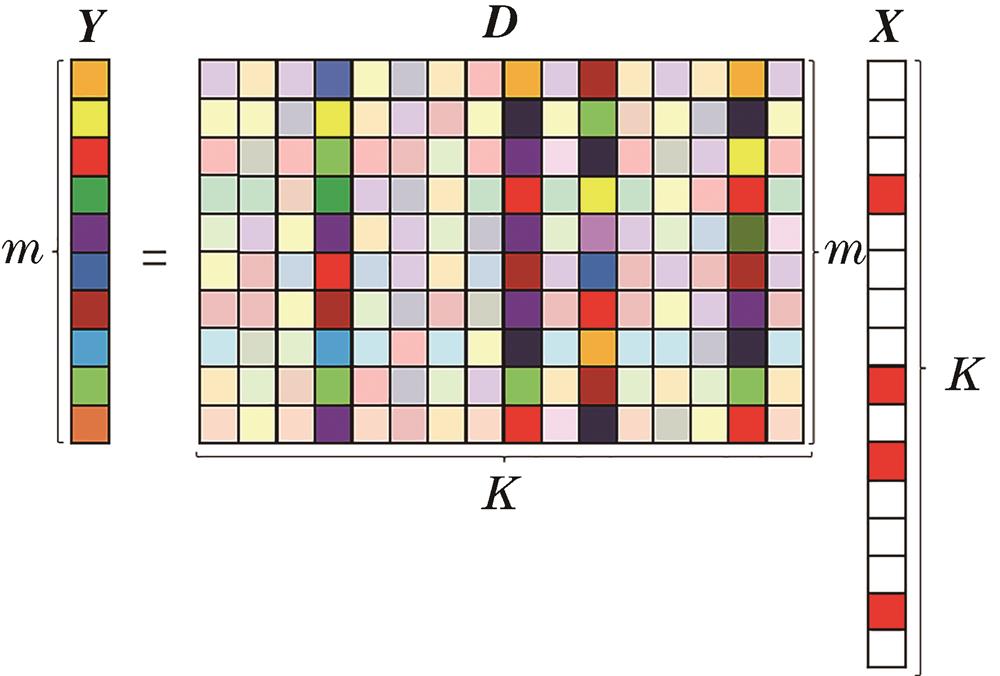

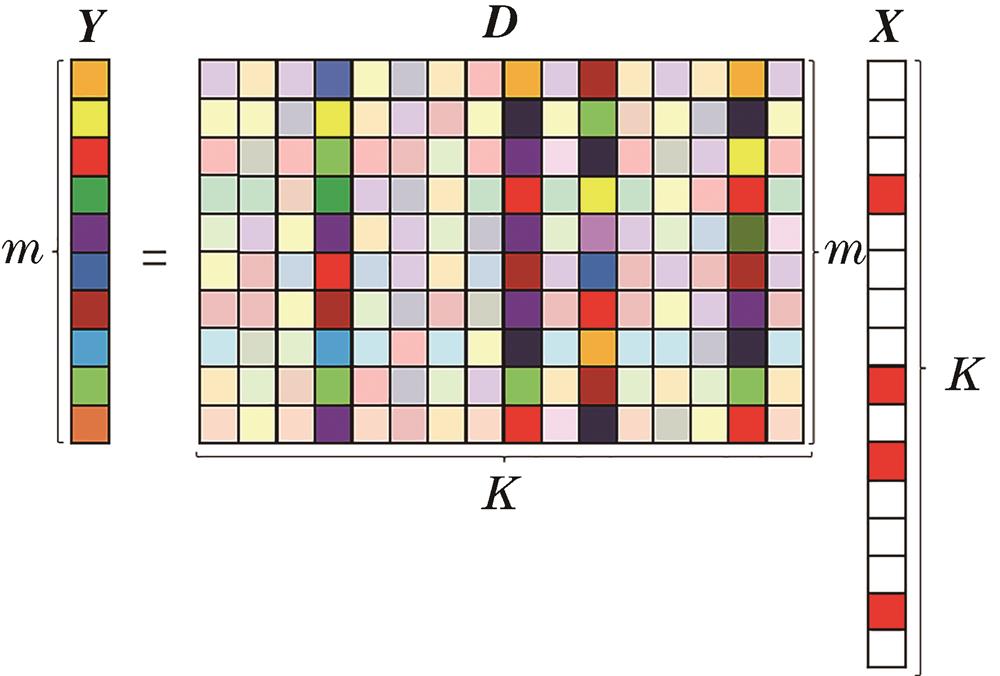

Fig. 1. Diagram of dictionary learning

Fig. 2. Flowchart of the proposed method

Fig. 3. Batch normalization and layer normalization

Fig. 4. Schematic of multilayer perceptron

Fig. 5. Flowchart of attention module method

Fig. 6. Attention module based on dictionary learning

Fig. 7. RSSCN7 dataset

Fig. 8. NWPU-RESISC45 dataset

Fig. 9. AID dataset

Fig. 10. Rate of change of classification accuracy on Gaussian noise images

Table 1. Introduction of datasets

Table 2. Laboratory environment

Table 3. Accuracy of different networks on RSSCN7 dataset

Table 4. Accuracy of different networks on NWPU-RESISC45 dataset

Table 5. Accuracy of different networks on AID dataset

Table 6. Parameter indicators of two methods on three datasets

Table 7. Parameters of different classification frameworks
