- Journal of Semiconductors

- Vol. 41, Issue 2, 021402 (2020)

Abstract

1. Introduction

Since AlexNet[

CNN is a type of DNN used mainly in computer vision. CNN[

DNNs have enabled AI to match or surpass human ability in many tasks. This improved performance comes at the cost of greater computational complexity and more memory. For example, compared to AlexNet, VGG-16[

GPU[

Reconfigurability, customizable dataflow and data width, low power, and real-time operation make FPGAs an appealing platform for accelerating CNNs. However, the performance of a CNN accelerator is limited by the computation and memory resources of the FPGA. Zhang et al.[

Though we can apply various techniques[

For the ALM module, in order to support low-precision MAC, Boutros[

For the memory module, Xilinx[

Other design considerations include new architecture or specialized AI processor. Xilinx provides ACAP[

2. DSP module design for AI

At acceptable accuracy, low precision lets an FPGA implement more MAC operations, which improves computational performance. Therefore, one direction in DSP design for AI is to support low-precision multiplication. However, some DNNs, or even some layers within a single DNN, require high-accuracy floating-point computation, so the other direction is to make the DSP support floating-point MAC operations.

2.1. Low-precision design

In an FPGA, most MAC operations are performed by the DSP blocks. Boutros et al.[

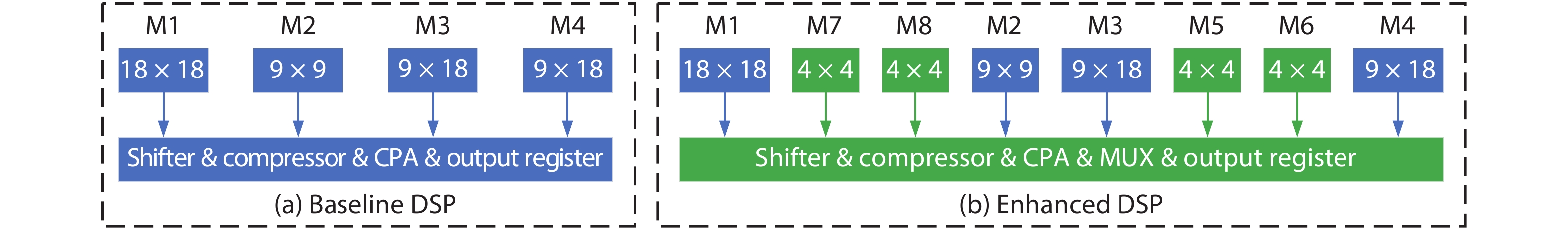

First, they built a baseline DSP block resembling the one in Intel Arria 10 FPGAs. It can perform either one 27-bit multiplication or two 18-bit multiplications, and its maximum operating frequency is 600 MHz. Fig. 1(a) shows the simplified architecture of the baseline DSP.

Figure 1. (Color online) Simplified architecture of (a) baseline DSP and (b) enhanced DSP.

For one 27-bit multiplication, A[26:0] × B[26:0], the partial product A[26:9] × B[26:9] is implemented on the 18 × 18 multiplier M1, A[8:0] × B[8:0] on the 9 × 9 multiplier M2, A[8:0] × B[26:9] on the 9 × 18 multiplier M3, and A[26:9] × B[8:0] on the 9 × 18 multiplier M4. The full product is then obtained by weighting the partial products by their bit positions: A × B = 2^18 · M1 + 2^9 · (M3 + M4) + M2. For two 18-bit multiplications, one is implemented on M1 and the other is split across M3 and M4, leaving the 9 × 9 multiplier M2 unused.
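The partial-product split above can be checked numerically. The sketch below is a minimal Python model, assuming unsigned operands (the real DSP also handles signed inputs) and assuming that, for the second 18-bit multiplication, the upper and lower 9 bits of one operand feed M4 and M3 respectively. The function names are hypothetical; this is not vendor RTL.

```python
import random
from typing import Tuple


def bits(x: int, hi: int, lo: int) -> int:
    """Return the unsigned bit field x[hi:lo]."""
    return (x >> lo) & ((1 << (hi - lo + 1)) - 1)


def mul27(a: int, b: int) -> int:
    """27 x 27 product built from the four partial products M1-M4."""
    a_hi, a_lo = bits(a, 26, 9), bits(a, 8, 0)  # 18-bit and 9-bit slices of A
    b_hi, b_lo = bits(b, 26, 9), bits(b, 8, 0)  # 18-bit and 9-bit slices of B
    m1 = a_hi * b_hi  # 18 x 18 multiplier M1
    m2 = a_lo * b_lo  # 9 x 9 multiplier M2
    m3 = a_lo * b_hi  # 9 x 18 multiplier M3
    m4 = a_hi * b_lo  # 9 x 18 multiplier M4
    # Weight each partial product by its bit position and sum.
    return (m1 << 18) + ((m3 + m4) << 9) + m2


def mul18_pair(x: int, y: int, u: int, v: int) -> Tuple[int, int]:
    """Two 18 x 18 products: x*y on M1, u*v split across M3 and M4."""
    p1 = x * y                                  # M1
    u_hi, u_lo = bits(u, 17, 9), bits(u, 8, 0)  # assumed split of operand u
    m3 = u_lo * v                               # 9 x 18 multiplier M3
    m4 = u_hi * v                               # 9 x 18 multiplier M4
    return p1, (m4 << 9) + m3                   # M2 stays idle in this mode


if __name__ == "__main__":
    random.seed(0)
    for _ in range(1000):
        a, b = random.getrandbits(27), random.getrandbits(27)
        assert mul27(a, b) == a * b
        x, y, u, v = (random.getrandbits(18) for _ in range(4))
        assert mul18_pair(x, y, u, v) == (x * y, u * v)
    print("decompositions match")
```

The random loop only confirms the arithmetic identity; it says nothing about timing or area of the hardware mapping.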

The enhanced DSP still operates at 600 MHz, does not increase the number of inputs and outputs, and remains backward compatible with the baseline DSP.

The enhanced DSP supports four 9-bit multiplications. This fits within the existing outputs because each 9 × 9 product is 18 bits wide and the baseline DSP already has 72 (= 18 × 4) output bits. The four 9-bit multiplications are implemented on M1, M2, M3, and M4, with additional shifter, compressor, and MUX logic.
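A minimal sketch of the output-width argument: each 9 × 9 product fits in 18 bits, so four of them fit side by side in a 72-bit word. The lane order and helper names are hypothetical, and the model ignores the shifter, compressor, and MUX datapath of the actual enhanced DSP.

```python
from typing import List, Sequence


def pack_four_9x9(a: Sequence[int], b: Sequence[int]) -> int:
    """Place four unsigned 9 x 9 products side by side in one 72-bit word."""
    if len(a) != 4 or len(b) != 4:
        raise ValueError("expected four operand pairs")
    word = 0
    for lane, (x, y) in enumerate(zip(a, b)):
        if not (0 <= x < 2**9 and 0 <= y < 2**9):
            raise ValueError("operands must be 9-bit unsigned")
        product = x * y                 # at most 511 * 511 = 261121 < 2**18
        word |= product << (18 * lane)  # hypothetical layout: lane i -> bits [18i+17 : 18i]
    return word


def unpack_lanes(word: int) -> List[int]:
    """Split a 72-bit word back into its four 18-bit lanes."""
    return [(word >> (18 * lane)) & (2**18 - 1) for lane in range(4)]


if __name__ == "__main__":
    a, b = [511, 3, 100, 0], [511, 7, 200, 42]
    w = pack_four_9x9(a, b)
    print(w.bit_length() <= 72, unpack_lanes(w))  # → True [261121, 21, 20000, 0]
```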

Boutros et al.[

Experiments show that the enhanced DSP blocks can pack twice as many 9-bit multiplications and four times as many 4-bit multiplications as Arria 10 DSP blocks. Boutros et al.[

Finally, Boutros et al. [

The Intel Agilex DSP block supports a fixed-point four 9 × 9 multiplier adder mode[

2.2. Floating-point design

Intel Arria 10[

Previous DSP[

3. ALM/CLB module design for AI

As mentioned above, at acceptable accuracy, low precision can improve FPGA MAC performance. Most MAC operations are performed by the DSP blocks in an FPGA, but DSP blocks represent only 5% of the FPGA core area in DSP-rich devices[

BNN[

3.1. Low-precision design

Boutros et al.[

Fig. 2(a) shows the first architecture enhancement: an ALM with the proposed extra carry chain modifications. Fig. 2(b) shows the second enhancement: an ALM with the proposed 4-bit adder architecture, which fractures each 4-LUT into two 3-LUTs and adds two full adders and multiplexing, so that each ALM can implement more adders. Fig. 2(c) shows the third enhancement: a LAB with the proposed shadow multiplier. When the shadow multiplier is in use, the two middle ALMs are unavailable. These changes increase the density of on-chip MAC operations.
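To illustrate what fracturing a 4-LUT into two 3-LUTs means, the sketch below models a 4-LUT as two 3-LUT halves selected by a 2:1 MUX (a Shannon expansion on the fourth input). In fractured mode the two halves share three inputs but drive separate outputs; here they are configured, purely as a hypothetical example, as the sum and carry of a full adder. This is a generic LUT model, not the exact ALM circuit of Boutros et al.

```python
from typing import Callable, Sequence, Tuple

XOR3 = [0, 1, 1, 0, 1, 0, 0, 1]  # truth table of a ^ b ^ c, index = a + 2b + 4c
MAJ3 = [0, 0, 0, 1, 0, 1, 1, 1]  # truth table of majority(a, b, c)


def lut(truth_table: Sequence[int]) -> Callable[..., int]:
    """Return a k-input LUT; input i contributes bit i of the table index."""
    def evaluate(*inputs: int) -> int:
        index = 0
        for position, bit in enumerate(inputs):
            index |= (bit & 1) << position
        return truth_table[index]
    return evaluate


def lut4_from_two_lut3(tt4: Sequence[int]) -> Callable[[int, int, int, int], int]:
    """Unfractured mode: a 4-LUT built from two 3-LUTs and a 2:1 MUX on input d."""
    low = lut(tt4[:8])   # table entries with d == 0
    high = lut(tt4[8:])  # table entries with d == 1

    def evaluate(a: int, b: int, c: int, d: int) -> int:
        return high(a, b, c) if d else low(a, b, c)
    return evaluate


def fractured_full_adder(a: int, b: int, cin: int) -> Tuple[int, int]:
    """Fractured mode: both 3-LUT halves see (a, b, cin) but give two outputs."""
    sum_half = lut(XOR3)
    carry_half = lut(MAJ3)
    return sum_half(a, b, cin), carry_half(a, b, cin)


if __name__ == "__main__":
    print(fractured_full_adder(1, 1, 0))  # → (0, 1)
```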

Figure 2. (Color online) Proposed extra carry chain architecture modifications.

Boutros et al.[

Combining the shadow multiplier with the 4-bit adder architecture gives the best result, increasing MAC density by 6.1×, at the cost of a larger tile area and a longer critical path delay.

3.2. BNN design

In each layer of a BNN, the inputs (except the original network input), weights, and activations are binarized to +1 or –1, so each can be represented by 1 bit (see Fig. 3(b)). Figs. 3(a) and 3(b) compare the computation flow of a CNN and a BNN.

Figure 3. (Color online) The difference between CNN and BNN: (a) CNN, (b) BNN, and (c) XNOR replacing multiplication in BNN.

The convolution operation of a CNN is a multiply-accumulate. In a BNN, we can use 1 to represent +1 and 0 to represent –1, so multiplication becomes an XNOR operation (see Fig. 3(c)). Accumulation then becomes a popcount operation, which counts the number of ones in a long word. Thus the MAC operation of a BNN reduces to an XNOR-popcount operation, which is easy to implement on an FPGA. Moreover, because the weights and activations of a BNN are 1 bit wide, all parameters can be stored in the FPGA's on-chip memory.
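The XNOR-popcount equivalence can be made concrete. With +1 encoded as 1 and –1 as 0, the XNOR of two bits is 1 exactly when the corresponding ±1 values agree, so for N-element vectors with p agreeing positions the dot product is p − (N − p) = 2p − N. The Python sketch below assumes that zero binarizes to +1 and that element i is packed into bit i; both are illustrative conventions, and the function names are hypothetical.

```python
from typing import List, Sequence


def binarize(values: Sequence[float]) -> List[int]:
    """Map each real value to +1 or -1 by its sign (0 maps to +1, an assumed convention)."""
    return [1 if v >= 0 else -1 for v in values]


def encode(signs: Sequence[int]) -> int:
    """Pack a ±1 vector into an integer: +1 -> bit 1, -1 -> bit 0, element i -> bit i."""
    word = 0
    for i, s in enumerate(signs):
        if s == 1:
            word |= 1 << i
    return word


def xnor_popcount_dot(x_word: int, w_word: int, n: int) -> int:
    """Dot product of two n-element ±1 vectors computed from their 1-bit encodings."""
    mask = (1 << n) - 1
    agree = ~(x_word ^ w_word) & mask  # XNOR: 1 where the signs match
    p = bin(agree).count("1")          # popcount
    return 2 * p - n                   # p matches give +1, n - p mismatches give -1


if __name__ == "__main__":
    x = binarize([0.5, -1.2, 0.3, -0.7])  # [1, -1, 1, -1]
    w = [1, 1, -1, -1]
    print(xnor_popcount_dot(encode(x), encode(w), 4))  # → 0
    print(sum(a * b for a, b in zip(x, w)))            # → 0
```

The final `2 * p - n` step is the piece that maps the popcount back to the signed accumulation; in hardware it is a constant shift and subtraction.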

On an FPGA, the XNOR part is easily implemented with LUTs. For the popcount part, a common approach is a binary adder tree that sums the bits of the vector. This requires N − 1 binary adders of different bit widths[
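A small model of the adder-tree popcount shows where the N − 1 adder count comes from: every two-input addition reduces the number of pending values by one, and the operand width grows by one bit per tree level. The function name and return format are hypothetical.

```python
from typing import List, Sequence, Tuple


def adder_tree_popcount(bits: Sequence[int]) -> Tuple[int, List[Tuple[int, int]]]:
    """Count the ones in `bits` with a binary adder tree.

    Returns the popcount and, per tree level, (operand_width, adders_used).
    operand_width is the widest operand at that level; an odd leftover
    value is passed unchanged to the next level.
    """
    level = [b & 1 for b in bits]
    operand_width = 1  # level-0 operands are single bits
    stats = []
    while len(level) > 1:
        pairs = len(level) // 2
        nxt = [level[2 * i] + level[2 * i + 1] for i in range(pairs)]
        if len(level) % 2:  # odd element skips this level
            nxt.append(level[-1])
        stats.append((operand_width, pairs))
        operand_width += 1  # the sum of two w-bit values needs w + 1 bits
        level = nxt
    return (level[0] if level else 0), stats


if __name__ == "__main__":
    print(adder_tree_popcount([1, 0, 1, 1, 0, 1, 1, 1]))  # → (6, [(1, 4), (2, 2), (3, 1)])
```

For N = 8 this gives four 1-bit adders, two 2-bit adders, and one 3-bit adder, seven in total.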

Kim et al.[

This ALM modification adds only two dedicated ports in the carry-chain direction and no other ports to the ALM, so it does not affect the general routing circuitry. It allows the two carry chains to be used in two distinct modes. In the first mode, the original carry chain is used and the sum is output. In the second mode, a new carry chain performs the popcount operation and the carry is output. Fig. 4(a) shows this first ALM modification for BNN, i.e., an ALM with the proposed extra carry chain that propagates the sum.

Figure 4. (Color online) ALM modifications: (a) ALM modification 1 and (b) ALM modification 2.

For the second ALM modification, Kim et al.[

Both architecture changes require additional configuration SRAM cells to set the MUX select ports. Kim et al.[

4. Memory module design for AI

Xilinx ACAP[

Intel Agilex[

Figure 5. (Color online) Intel Agilex architecture. (a) Agilex architecture. (b) Advanced memory hierarchy.

5. Other designs for AI

5.1. Acceleration platform

On June 18, 2019, Xilinx announced that it had shipped Versal™ AI Core series and Versal Prime series devices. Versal devices are the industry's first adaptive compute acceleration platform (ACAP) and are built on TSMC's 7 nm process technology. ACAP[

Figure 6. (Color online) ACAP architecture. (a) ACAP architecture. (b) AI engine.

The scalar engines include a dual-core Arm® Cortex-A72 and a dual-core Arm® Cortex-R5. The adaptable engines are the conventional FPGA fabric, consisting of programmable logic and memory cells. The intelligent engines contain AI engines and DSP engines. ACAP achieves performance improvements of up to 20× over today's fastest FPGA implementations and over 100× over today's fastest CPU implementations for data center, 5G wireless, AI, and other applications[

5.2. Compute-intensive processor

Xilinx designed a specialized AI engine[

For a CNN model, the AI engines can execute convolution layers, fully connected layers, and activation functions (ReLU, sigmoid, etc.), while the programmable logic can implement pooling (e.g., max pooling) and store weights and activation values in UltraRAM.

5.3. FPGA-CPU platform

On August 29, 2019, Intel began shipping the first 10 nm Agilex FPGAs. Agilex is a data-centric product aimed at the data proliferation problem from the edge through the network to the cloud.

Most importantly, Agilex supports Compute Express Link (CXL), which provides a high-speed, memory-coherent interconnect to future Intel Xeon Scalable processors. Other innovations include second-generation HyperFlex, embedded multi-die interconnect bridge (EMIB), PCIe Gen 5, variable-precision DSP, an advanced memory hierarchy, a quad-core Arm Cortex-A53 HPS, and 112 Gbps transceivers (see Fig. 5(a)). Agilex delivers up to 40% higher core performance, or up to 40% lower power, compared with previous-generation high-performance FPGAs[

6. Conclusion

Reconfigurability, low power, and real-time operation make FPGAs excel at inference tasks. However, FPGA chips must be redesigned to better meet the evolving requirements of different DNNs. Table 1 summarizes the FPGA enhancements for the AI era.

For the DSP module, Boutros et al. enhanced the DSP block to support 9-bit and 4-bit multiplication for low-precision techniques, and the DSP of Intel Agilex supports INT8 computation. To support high accuracy, Intel and Xilinx designed their DSPs to support floating-point computation. For the ALM module, Boutros et al. enhanced the ALM with an extra carry chain, additional adders, or a shadow multiplier to increase the density of on-chip MAC operations, and Kim et al. proposed two modifications to the ALM and CLB (configurable logic block) to better support BNN implementation. For the memory module, Xilinx ACAP and Intel Agilex provide more memory resources, comprising three types of memory: embedded memory, in-package HBM (high bandwidth memory), and off-chip memory interfaces such as DDR4/5.

Other design considerations include new architectures and specialized AI processors. Xilinx ACAP, built in 7 nm, is the industry's first adaptive compute acceleration platform, and it provides a specialized AI engine that can increase compute density by 8× at 50% lower power. Intel Agilex, built in 10 nm, works coherently with Intel's own CPUs, which increases performance and reduces overhead and latency.

References

[1] A Krizhevsky, I Sutskever, G E Hinton. ImageNet classification with deep convolutional neural networks. Neural Information Processing Systems (NIPS), 1097(2012).

[2] S Liang, S Yin, L Liu et al. FP-BNN: Binarized neural network on FPGA. Neurocomputing, 275, 1072(2017).

[3]

[4] C Zhang, P Li, G Sun et al. Optimizing FPGA-based accelerator design for deep convolutional neural networks. The 2015 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, 161(2015).

[5] J Qiu, J Wang, S Yao et al. Going deeper with embedded FPGA platform for convolutional neural network. The 2016 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, 26(2016).

[6] S Yin, P Ouyang, S Tang et al. A high energy efficient reconfigurable hybrid neural network processor for deep learning applications. IEEE J Solid-State Circuits, 53, 968(2018).

[7] S Han, H Mao, W J Dally. Deep compression: compressing deep neural networks with pruning, trained quantization and huffman coding. ICLR(2016).

[8] P Gysel, M Motamedi, S Ghiasi. Hardware-oriented approximation of convolutional neural networks. ICLR(2016).

[9] S Han, X Liu, H Mao et al. EIE: efficient inference engine on compressed deep neural network. International Symposium on Computer Architecture (ISCA), 243(2016).

[10] A Zhou, A Yao, Y Guo et al. Incremental network quantization: towards lossless CNNs with low-precision weights. ICLR(2017).

[11]

[12] I Hubara, M Courbariaux, D Soudry. Binarized neural networks. Neural Information Processing Systems (NIPS), 1(2016).

[13] Y Umuroglu, N J Fraser, G Gambardella et al. FINN: A framework for fast, scalable binarized neural network inference. International Symposium on Field-Programmable Gate Arrays, 65(2017).

[14] A Boutros, S Yazdanshenas, V Betz. Embracing diversity: Enhanced DSP blocks for low precision deep learning on FPGAs. 28th International Conference on Field-Programmable Logic and Applications, 35(2018).

[15]

[16]

[17] A Boutros, M Eldafrawy, S Yazdanshenas et al. Math doesn’t have to be hard: logic block architectures to enhance low-precision multiply-accumulate on FPGAs. The 2019 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, 94(2019).

[18] J H Kim, J Lee, J H Anderson. FPGA architecture enhancements for efficient BNN implementation. International Conference on Field-Programmable Technology (ICFPT), 217(2018).

[19]

[20]

[21]

[22]

[23] S Yazdanshenas, V Betz. Automatic circuit design and modelling for heterogeneous FPGAs. International Conference on Field-Programmable Technology (ICFPT), 9(2017).

[24] S Yazdanshenas, V Betz. COFFE 2: Automatic modelling and optimization of complex and heterogeneous FPGA architectures. ACM Trans Reconfig Technol Syst, 12, 3(2018).

[25]

[26]

[27] M Langhammer, B Pasca. Floating-point DSP block architecture for FPGAs. The 2015 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, 117(2015).

[28]
