Daoquan WEI, Huiqin WANG, Ke WANG, Zhan WANG, Gang ZHEN. Pigment Classification Method of Mural Sparse Multi-spectral Image Based on Space Spectrum Joint Feature[J]. Acta Photonica Sinica, 2022, 51(4): 0430002

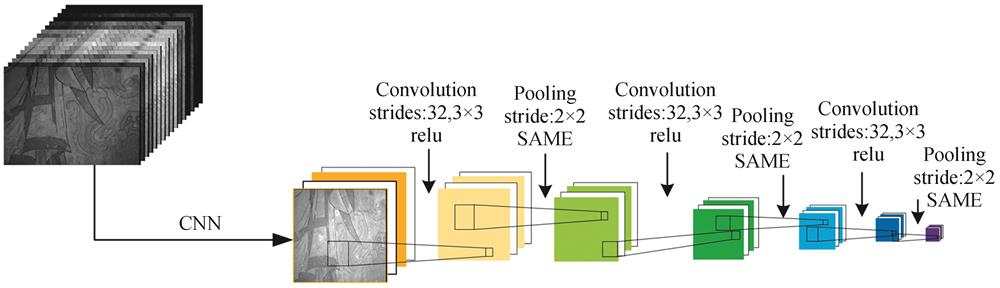

Fig. 1. Spatial feature extraction network structure

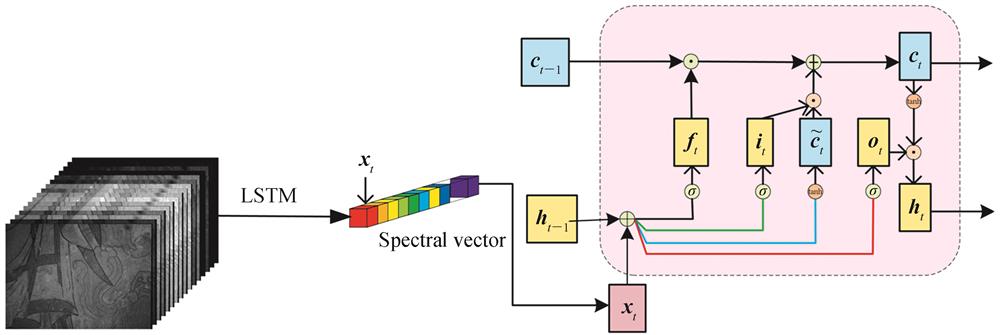

Fig. 2. Spectrum feature extraction network structure

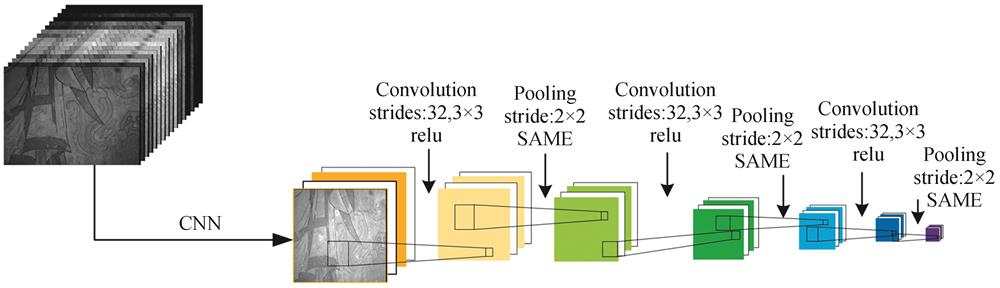

Fig. 3. Space-spectrum joint feature extraction network

Fig. 4. Multispectral pigment board

Fig. 5. Self-made mock murals

Fig. 6. Training results of different methods on the pigment board

Fig. 7. Pigment board classification results

Fig. 8. Classification results of different methods on the self-made mock murals

Fig. 9. Self-made mural classification results

Fig. 10. 16-channel multispectral image of Venerable Injanta's skirt

Fig. 11. Partial region samples and classification results of skirts

Table 1. Division of the multispectral pigment board into training and test sets

Table 2. Classification accuracy of different methods on the pigment board

Table 3. Comparison of subjective image evaluation scales

Table 4. Evaluation results of the double-stimulus impairment scale (DSIS) method

Table 5. Objective evaluation results of image quality

Table 6. Classification accuracy of different methods on the self-made mock murals
