Yichen Sun, Mingli Dong, Mingxin Yu, Jiabin Xia, Xu Zhang, Yuchen Bai, Lidan Lu, Lianqing Zhu. Modeling Method of Miniaturized Nonlinear All-Optical Diffraction Deep Neural Network Based on 10.6 μm Wavelength[J]. Laser & Optoelectronics Progress, 2021, 58(8): 0820001

Fig. 1. Dataset examples. (a) MNIST dataset; (b) Fashion-MNIST dataset

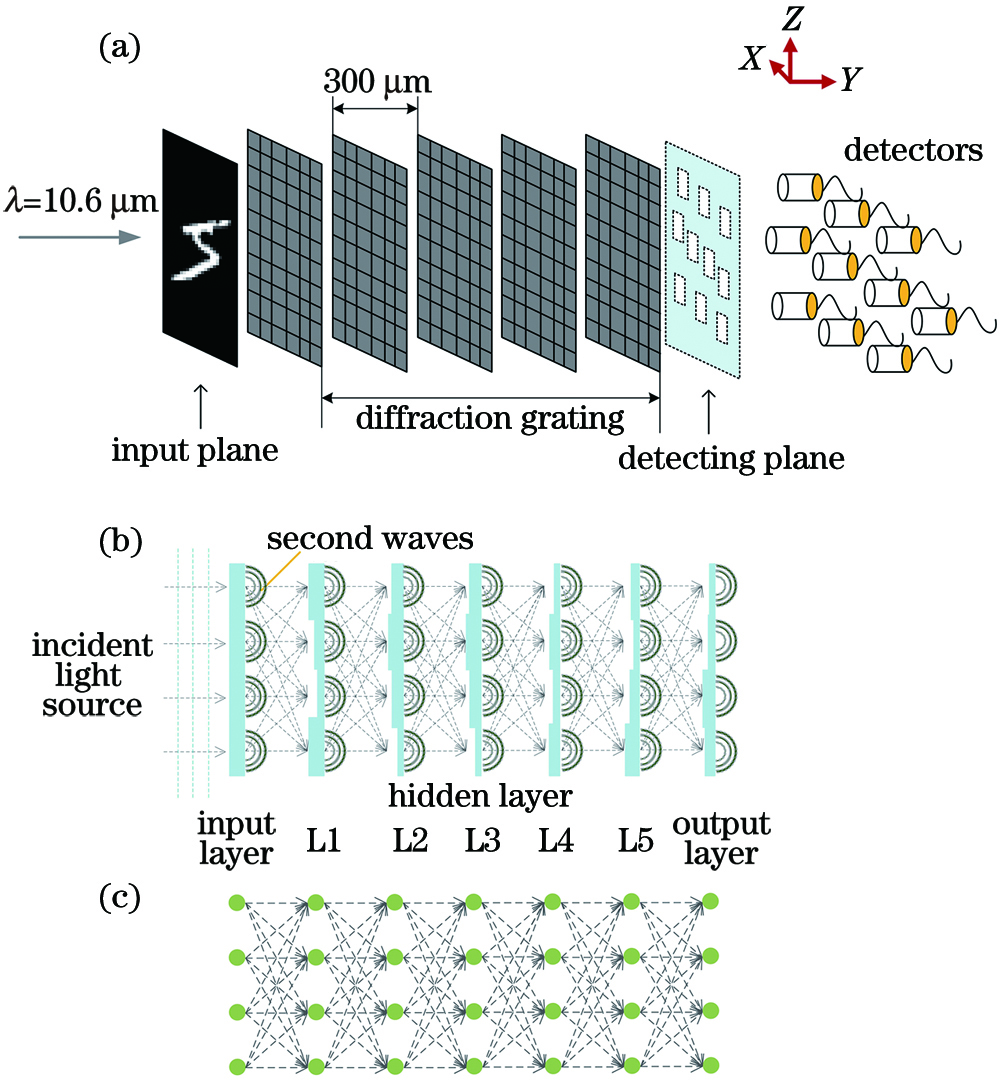

Fig. 2. Structural diagrams of nonlinear all-optical diffraction deep neural network. (a) Physical model of system; (b) optical path model; (c) neural network model

Fig. 3. Mathematical models of different activation functions. (a) Leaky-ReLU and PReLU; (b) RReLU

Fig. 4. Image label design

Fig. 5. Output image of each layer of grating after training

Fig. 6. Classification accuracy corresponding to each number of grating layers in MNIST dataset

Fig. 7. Classification accuracy corresponding to each number of grating layers in Fashion-MNIST dataset

Fig. 8. Classification results of MNIST dataset by standard all-optical diffraction deep neural network. (a) Classification accuracy; (b) confusion matrix

Fig. 9. Classification results of Fashion-MNIST dataset by standard all-optical diffraction deep neural network. (a) Classification accuracy; (b) confusion matrix

Fig. 10. Classification accuracies and confusion matrices of MNIST dataset by all-optical diffraction deep neural networks with different activation functions. (a)(b) Leaky-ReLU; (c)(d) PReLU; (e)(f) RReLU

Fig. 11. Recognition accuracy of each number in MNIST dataset by each all-optical diffraction deep neural network model

Fig. 12. Classification accuracies and confusion matrices of Fashion-MNIST dataset by all-optical diffraction deep neural networks with different activation functions. (a)(b) Leaky-ReLU; (c)(d) PReLU; (e)(f) RReLU

Fig. 13. Recognition accuracy of each category in Fashion-MNIST dataset by each all-optical diffraction deep neural network model

Table 1. Label numbers and categories in Fashion-MNIST dataset

Table 2. Physical parameters of neural network grating in MNIST dataset

Table 3. Neural network training parameters in MNIST dataset

Table 4. Classification accuracy of MNIST dataset corresponding to each pixel size and diffraction grating spacing

Table 5. Physical parameters of neural network grating in Fashion-MNIST dataset

Table 6. Neural network training parameters in Fashion-MNIST dataset

Table 7. Classification accuracy of Fashion-MNIST dataset corresponding to each pixel size and diffraction grating spacing

Table 8. Classification accuracies of MNIST dataset by nonlinear all-optical diffraction deep neural networks with different activation functions

Table 9. Classification accuracies of Fashion-MNIST dataset by nonlinear all-optical diffraction deep neural networks with different activation functions
