Wenxuan Xue, Jianxia Liu, Ran Liu, Xiaohui Yuan. An Improved Method for Retinal Vascular Segmentation in U-Net[J]. Acta Optica Sinica, 2020, 40(12): 1210001

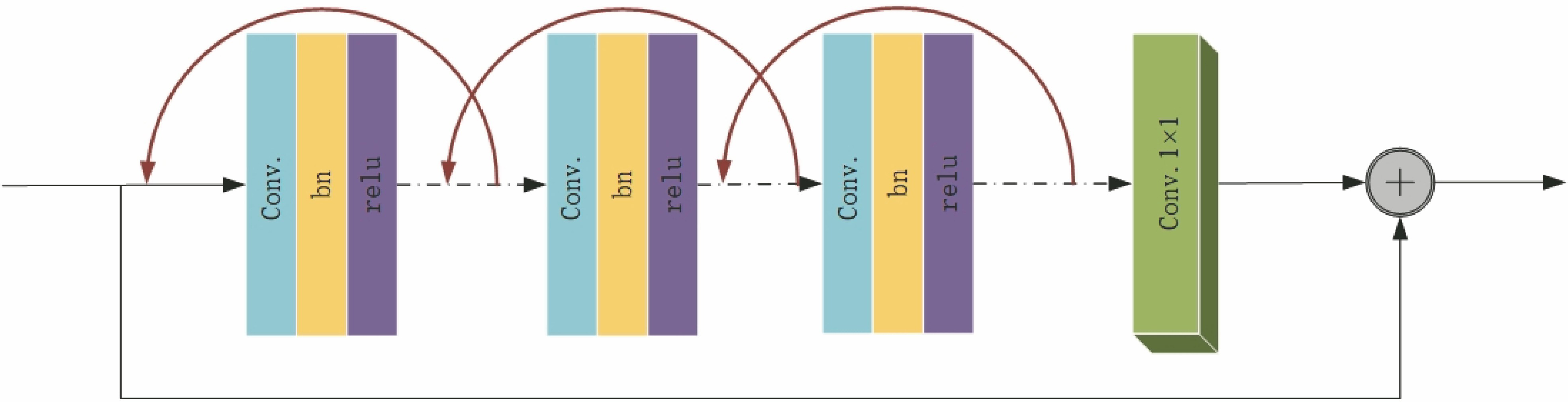

Fig. 1. Schematic of R2CU

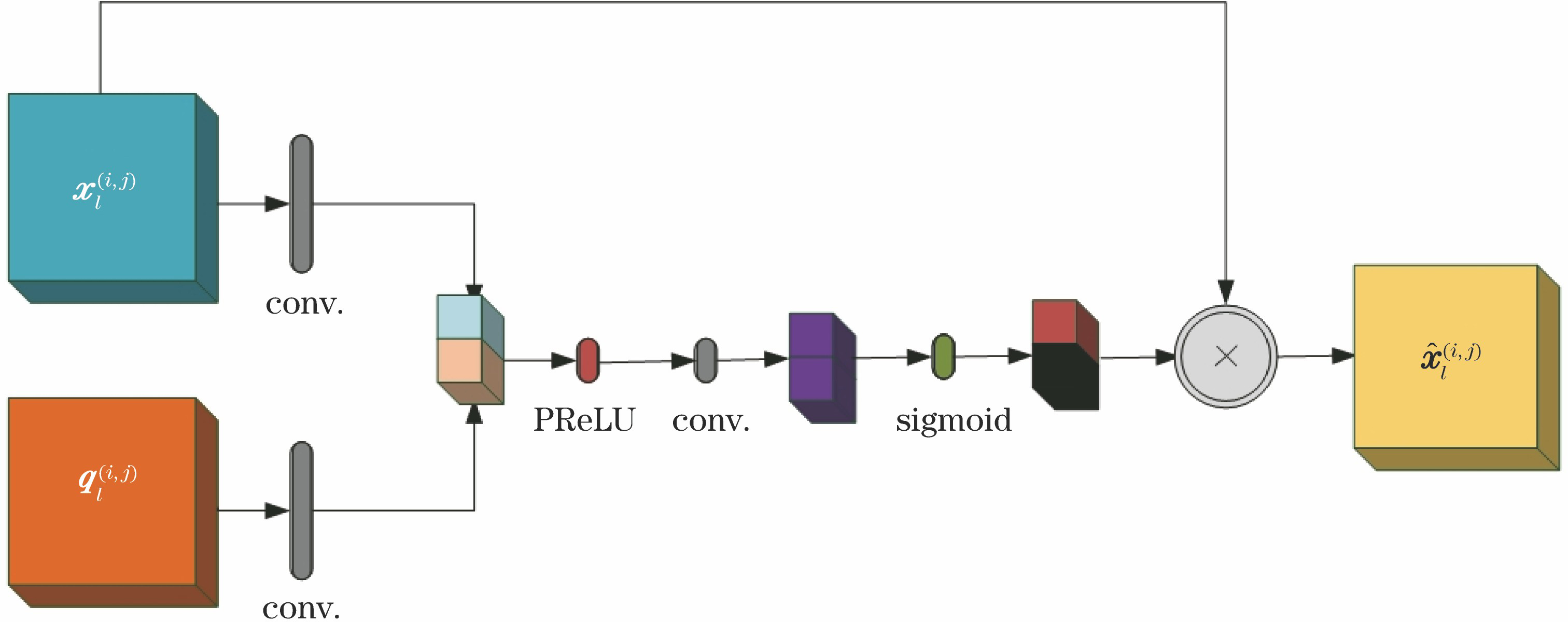

Fig. 2. Attention module schematic

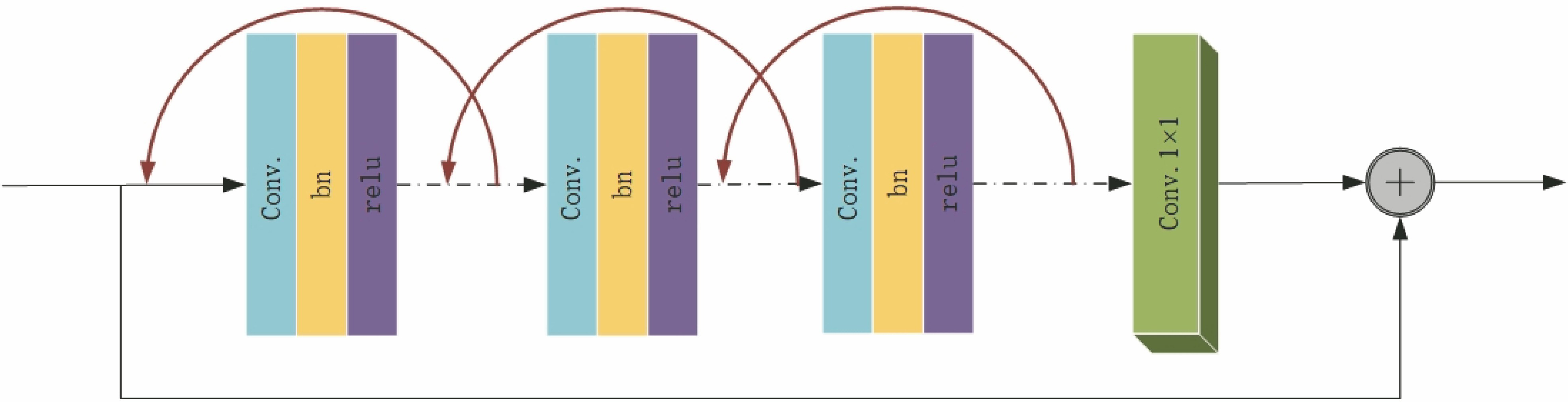

Fig. 3. AttR2U-Net network structure diagram

Fig. 4. Sample images from the database. (a) Original image; (b) first manual segmentation; (c) second manual segmentation; (d) mask

Fig. 5. Color image sub-channel maps. (a) RGB original image; (b) red channel; (c) green channel; (d) blue channel

Fig. 6. Local sample block. (a) Training local sample block; (b) ground truth local sample block

Fig. 7. DRIVE database segmentation results. (a) Original images; (b) ground truth images; (c) segmentation result images

Fig. 8. STARE database segmentation results. (a) Original images; (b) ground truth images; (c) segmentation result images

Fig. 9. Segmentation results. (a) img255 image; (b) ground truth image; (c) U-Net segmentation result; (d) AttR2U-Net segmentation result; (e) img255 local image; (f) local ground truth image; (g) U-Net local segmentation result; (h) AttR2U-Net local segmentation result

Table 1. Standard formulas for evaluation parameters

Table 2. AttR2U-Net segmentation performance results

Table 3. Performance comparison of different algorithms based on U-Net networks

Table 4. Performance indicators of different algorithms in the DRIVE database

Table 5. Performance indicators of different algorithms in the STARE database
