Wenjie Luo, Guoqing Han, Xuedong Tian. Retinal Vessel Segmentation Method Based on Multi-Scale Attention Analytic Network[J]. Laser & Optoelectronics Progress, 2021, 58(20): 2017001

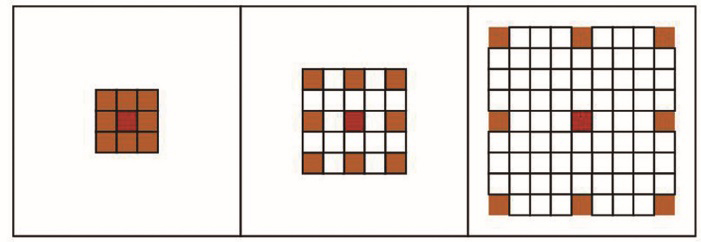

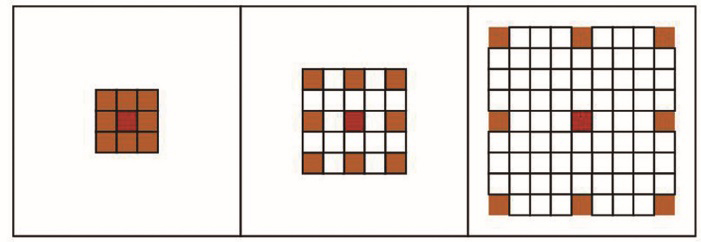

Fig. 1. Dilated convolution

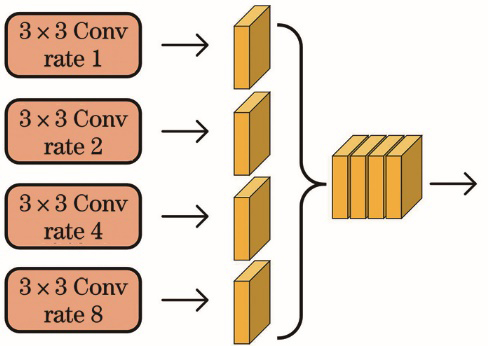

Fig. 2. Parallel multi-branch structure

Fig. 3. Attention residual block

Fig. 4. Spatial pyramid pooling module

Fig. 5. Detailed design of segmentation head in booster

Fig. 6. Multi-scale attention analytic network

Fig. 7. Training samples and labels. (a) Training samples; (b) labels

Fig. 8. Image preprocessing. (a) Original image; (b) preprocessed image

Fig. 9. Retinal vessel segmentation results of different algorithms. (a) Original images; (b) labels; (c) results of proposed algorithm; (d) results in Ref. [30]; (e) results in Ref. [17]; (f) results in Ref. [16]; (g) results in Ref. [31]

Fig. 10. Detail comparison of segmentation results. (a) Original images; (b) details of original images; (c) details of labels; (d) segmentation details of proposed algorithm; (e) segmentation details of algorithm in Ref. [30]; (f) segmentation details of algorithm in Ref. [17]

Fig. 11. ROC curves of segmentation results of different algorithms. (a) ROC curves; (b) curves within the box in Fig. 11(a)

Fig. 12. PR curves of segmentation results of different algorithms. (a) PR curves; (b) curves within the rectangle in Fig. 12(a)

Fig. 13. Changes in various evaluation indicators. (a) F1 value; (b) accuracy; (c) sensitivity; (d) specificity; (e) AUC (ROC); (f) AUC (PR)

Table 1. Evaluation metrics without α and with different α values

Table 2. Average performance evaluation results on CHASEDB1 and STARE

Table 3. Comparison of proposed method with other advanced methods on CHASEDB1

Table 4. Comparison of proposed method with other advanced methods on STARE

Table 5. Influence of each module on whole model
