Hongchao Liu, Anguo Dong. Hyperspectral Remote Sensing Image Classification Algorithm Based on Nonlocal Mode Feature Fusion[J]. Laser & Optoelectronics Progress, 2020, 57(6): 061017

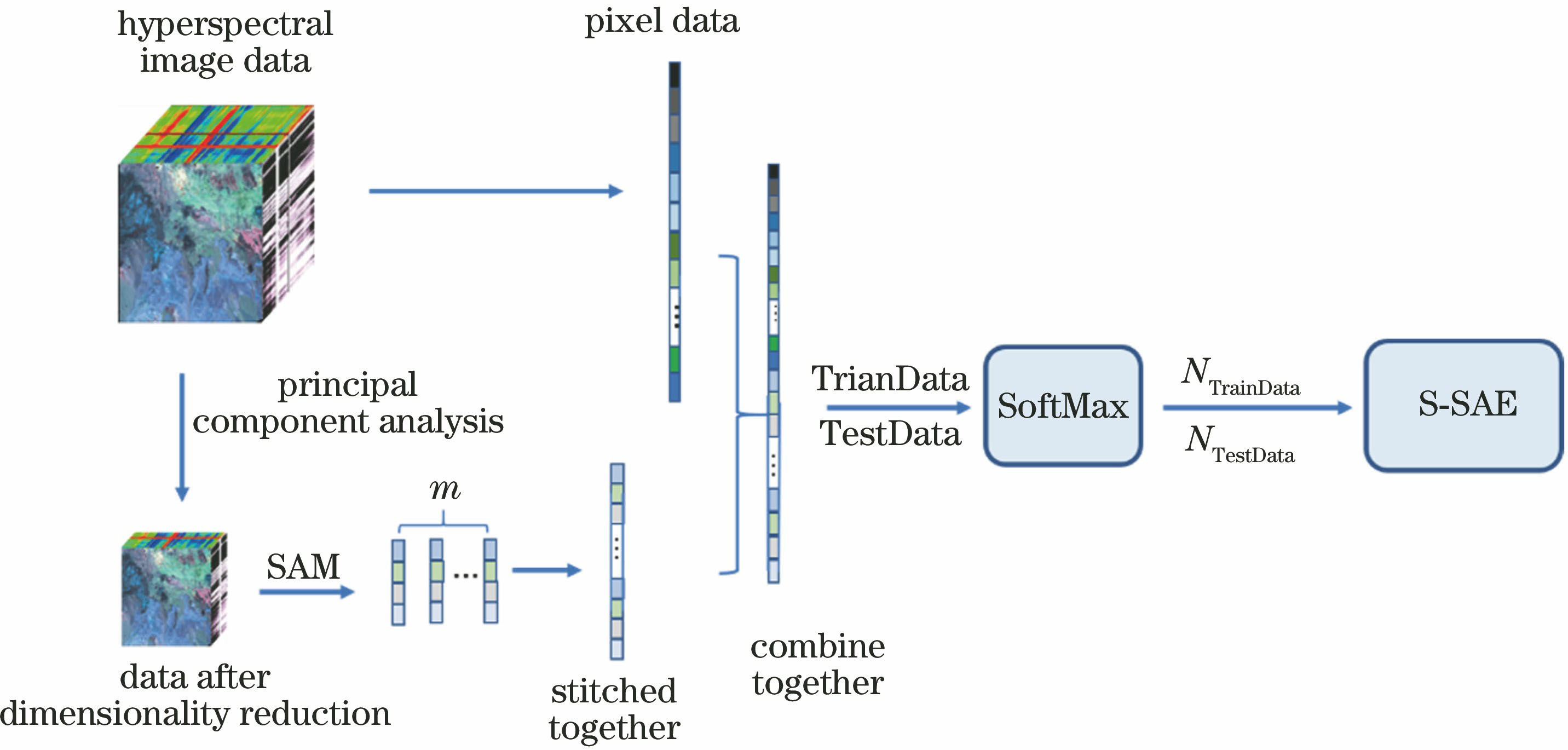

![Stacked sparse auto-encoder model [11]](/richHtml/lop/2020/57/6/061017/img_1.jpg)

Fig. 1. Stacked sparse auto-encoder model [11]

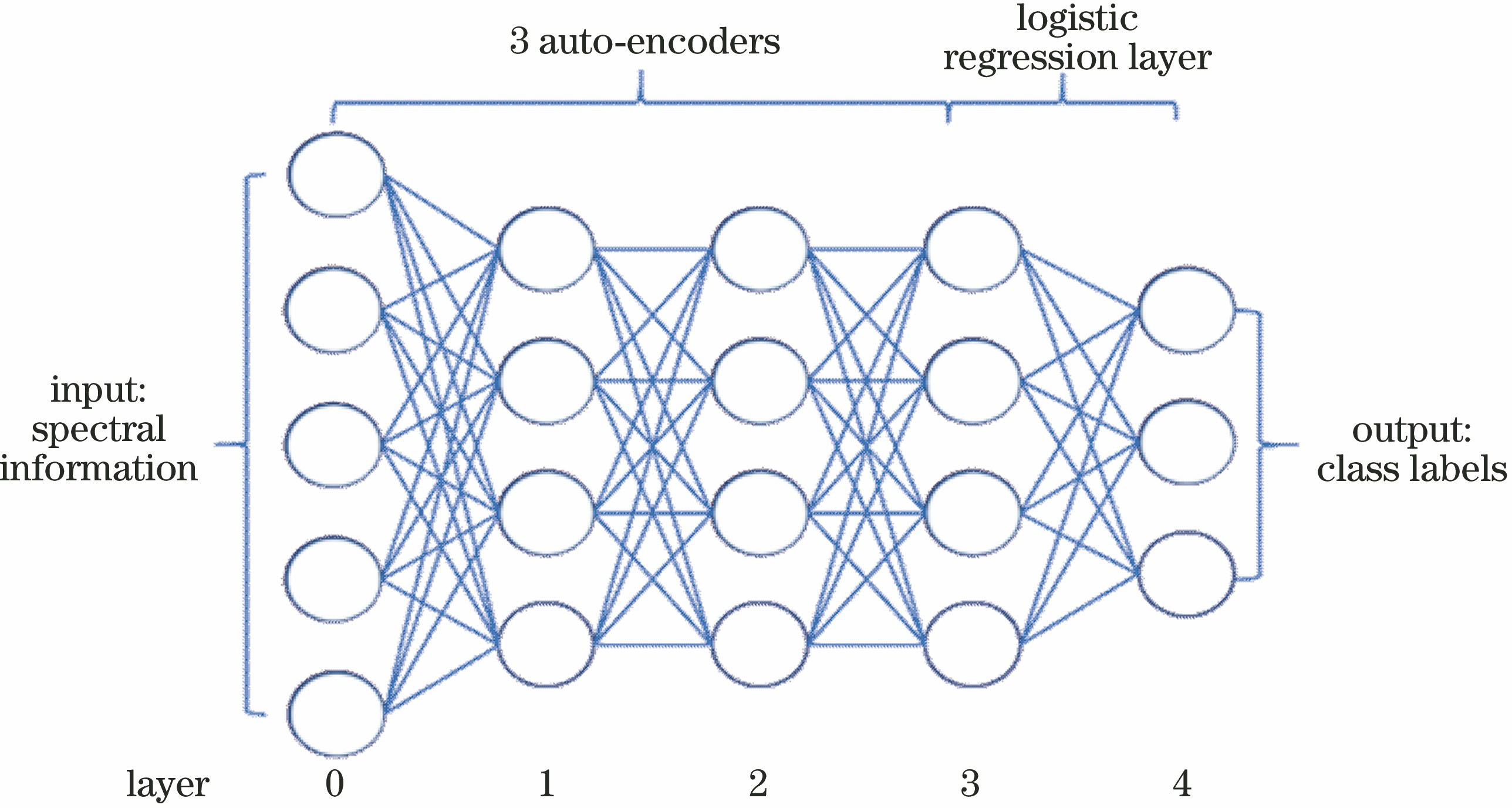

Fig. 2. Hyperspectral two-level classification model for nonlocal mode feature fusion

Fig. 3. Classification results. (a) Classification results combined with local neighborhood information; (b) classification results combined with nonlocal mode feature fusion

Fig. 4. Contrast figures before and after algorithm correction. (a) Before correction; (b) after correction

Fig. 5. Classification results of Pavia University dataset obtained by different algorithms. (a) Original image; (b) true classification picture; (c) SVM; (d) CK-SVM; (e) SOMP; (f) MASR; (g) S-SAE; (h) our method

Fig. 6. Classification results of Indian Pines dataset obtained by different algorithms. (a) Original image; (b) true classification picture; (c) SVM; (d) CK-SVM; (e) SOMP; (f) MASR; (g) S-SAE; (h) our method

Fig. 7. Effect of the number of training samples on OA for different algorithms on each dataset. (a) Pavia University; (b) Indian Pines

Table 1. Relationship between imported label correctness rate and threshold (unit: %)

Table 2. Experimental data and classification accuracies of the Pavia University dataset

Table 3. Experimental data and classification accuracies of the Indian Pines dataset
