Mingyang Cheng, Shaoyan Gai, Feipeng Da. A Stereo-Matching Neural Network Based on Attention Mechanism[J]. Acta Optica Sinica, 2020, 40(14): 1415001

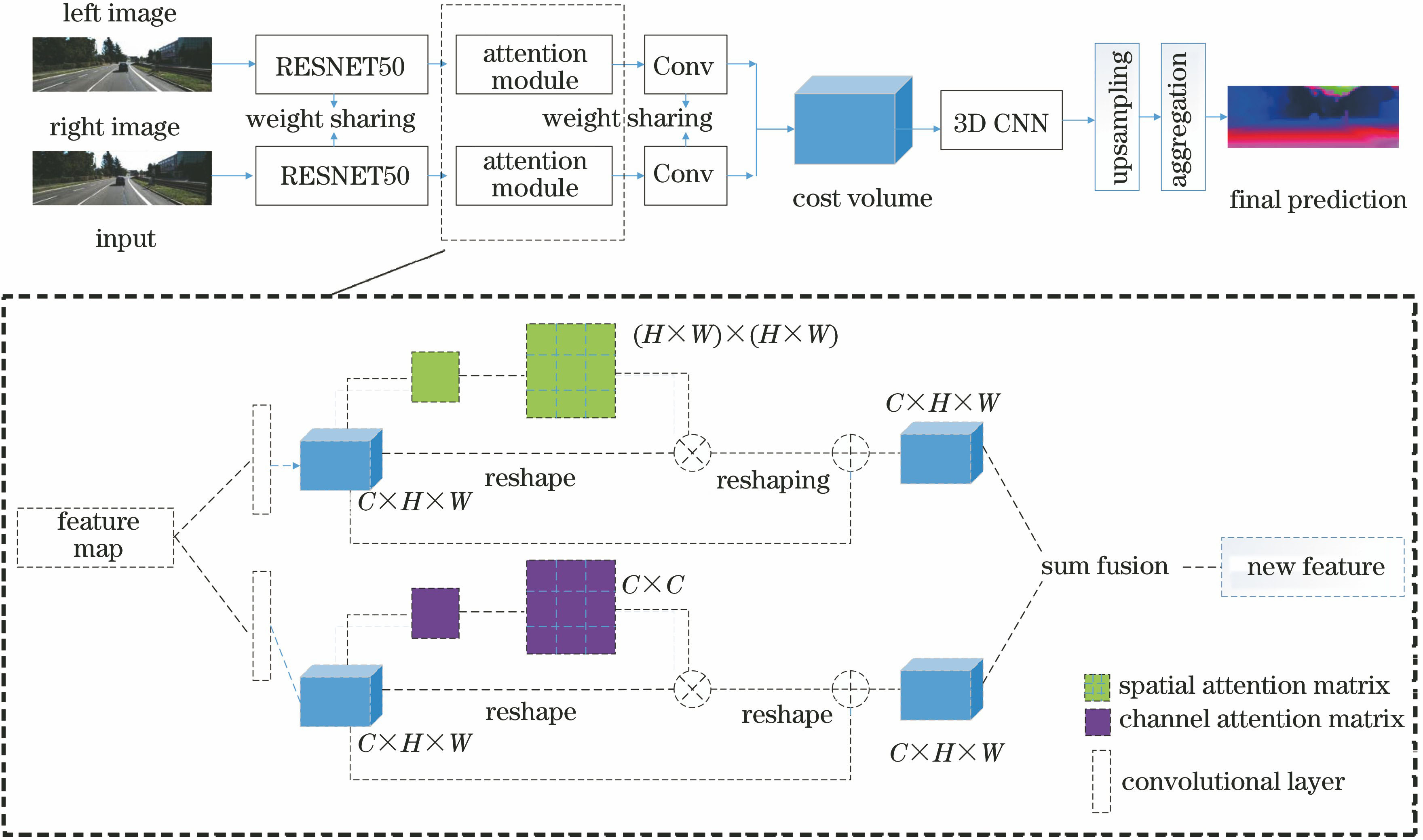

Fig. 1. Algorithm block diagram

Fig. 2. Structure of our proposed network

Fig. 3. Structure of spatial attention mechanism

Fig. 4. Structure of channel attention mechanism

Fig. 5. Results on KITTI2015, from top to bottom: the left input image, the predicted disparity map, the ground-truth disparity map, and the error map

Fig. 6. Results on KITTI2012, from top to bottom: the left input image, the predicted disparity map, the ground-truth disparity map, and the error map

Fig. 7. Results on Sceneflow, from top to bottom: the left input image, the ground-truth disparity map, and the predicted disparity map

Fig. 8. Comparison with other algorithms, from top to bottom: the PSM-Net results, the GWC-Net results, our results, and our improved results for the framed regions

Table 1. Comparison with other algorithms
