[1] A Krizhevsky, I Sutskever, G E Hinton. ImageNet classification with deep convolutional neural networks. Communications of the ACM, 60, 84-90(2017).

[2] K Simonyan, A Zisserman. Very deep convolutional networks for large-scale image recognition. arXiv preprint, 1409.1556(2014).

[3] He K, Zhang X Y, Ren S Q, et al. Deep residual learning for image recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016: 770-778.

[4] Vanhoucke V, Senior A, Mao M Z. Improving the speed of neural networks on CPUs[C]//Advances in Neural Information Processing Systems, 2011.

[5] Gupta S, Agrawal A, Gopalakrishnan K, et al. Deep learning with limited numerical precision[C]//International Conference on Machine Learning, 2015, 37: 1737-1746.

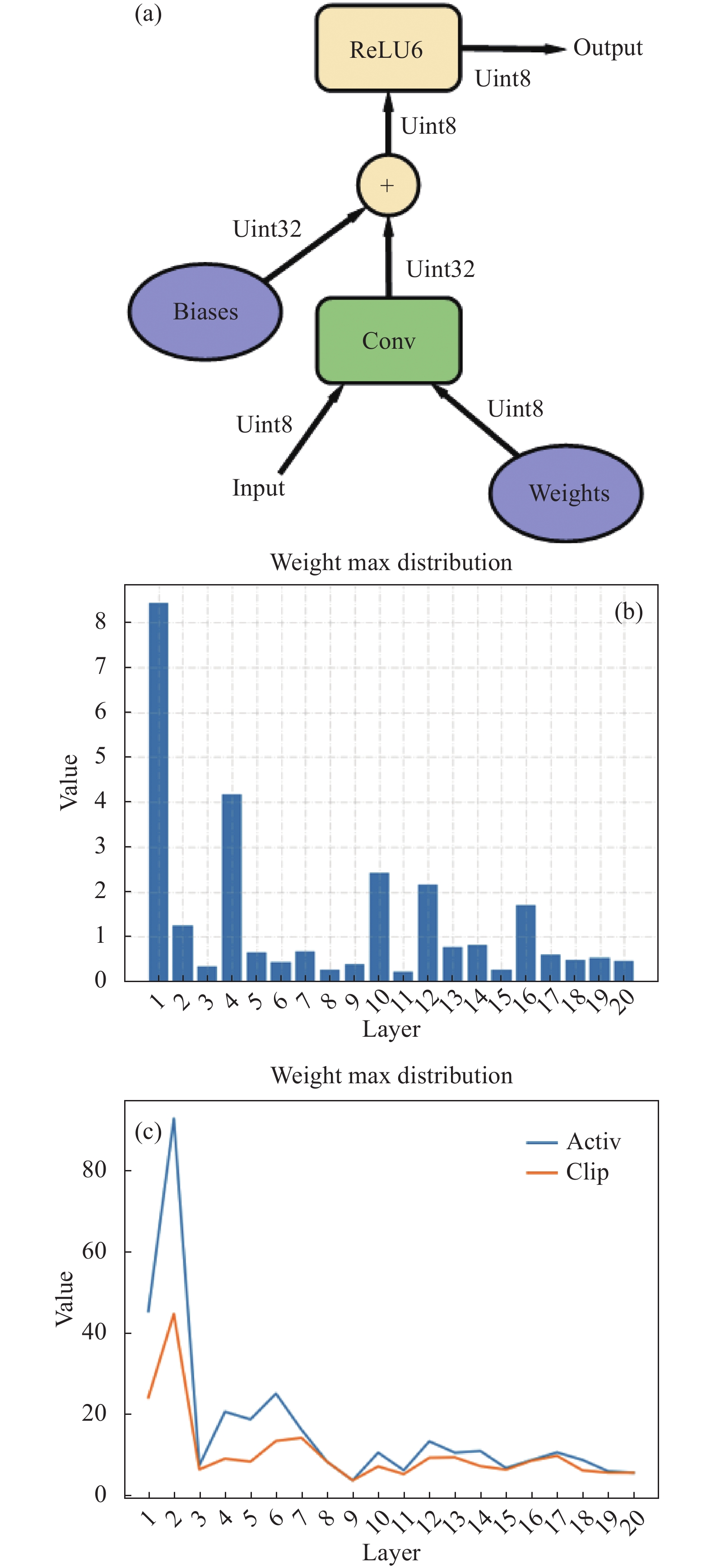

[6] Jacob B, Kligys S, Chen B, et al. Quantization and training of neural networks for efficient integer-arithmetic-only inference[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 2704-2713.

[7] Cai Y H, Yao Z W, Dong Z, et al. ZeroQ: A novel zero shot quantization framework[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2020: 13169-13178.

[8] Wang K, Liu Z J, Lin Y J, et al. HAQ: Hardware-aware automated quantization with mixed precision[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2019: 8612-8620.

[9] Z Z Huang, H M Du, L B Chang. Mixed-clipping quantization for convolutional neural networks. Journal of Computer-Aided Design & Computer Graphics, 33, 553-559(2021).

[10] H Q Zeng, H L Hu, X W Lin, et al. Deep neural network compression and acceleration: An overview. Journal of Signal Processing, 38, 183-194(2022).

[11] Chen W L, Wilson J T, Tyree S, et al. Compressing neural networks with the hashing trick[C]//32nd International Conference on Machine Learning, 2015, 37: 2285-2294.

[12] Liu Z, Li J G, Shen Z Q, et al. Learning efficient convolutional networks through network slimming[C]//2017 IEEE International Conference on Computer Vision (ICCV), 2017: 2755-2763.

[13] Y F Xu, D Z Zhang, L Wang, et al. Lightweight feature fusion network design for local feature recognition of non-cooperative target. Infrared and Laser Engineering, 49, 20200170(2020).

[14] Lin M, Ji R, Wang Y, et al. HRank: Filter pruning using high-rank feature map[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2020: 1529-1538.

[15] He Y, Ding Y, Liu P, et al. Learning filter pruning criteria for deep convolutional neural networks acceleration[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020: 2006-2015.

[16] Han S, Mao H, Dally W J. Deep compression: Compressing deep neural networks with pruning, trained quantization and Huffman coding[C]//Conference on Computer Vision and Pattern Recognition, 2016.

[17] Gong R, Liu X, Jiang S, et al. Differentiable soft quantization: Bridging full-precision and low-bit neural networks[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2019: 4852-4861.

[18] Zhu F, Gong R, Yu F, et al. Towards unified INT8 training for convolutional neural network[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual, 2020: 1969-1979.

[19] J Redmon, A Farhadi. YOLOv3: An incremental improvement. arXiv, 1804.02767(2018).