[1] Feng Y F, Yin H, Lu H Q et al. Infrared and visible light image fusion method based on improved fully convolutional neural network[J]. Computer Engineering, 46, 243-249, 257(2020).

[2] Ma J Y, Ma Y, Li C et al. Infrared and visible image fusion methods and applications: a survey[J]. Information Fusion, 45, 153-178(2019). http://www.sciencedirect.com/science/article/pii/S1566253517307972

[3] Liu Y, Chen X, Ward R K et al. Image fusion with convolutional sparse representation[J]. IEEE Signal Processing Letters, 23, 1882-1886(2016). http://ieeexplore.ieee.org/document/7593316

[4] Li H, Zhang L M, Jiang M R et al. An infrared and visible image fusion algorithm based on ResNet152[J]. Laser & Optoelectronics Progress, 57, 081013(2020).

[5] Ding L Y, Duan J, Song Y et al. Image fusion based on residual learning and visual saliency mapping[J]. Laser & Optoelectronics Progress, 57, 161008(2020).

[6] Li S T, Kang X D, Fang L Y et al. Pixel-level image fusion: a survey of the state of the art[J]. Information Fusion, 33, 100-112(2017). http://www.sciencedirect.com/science/article/pii/S1566253516300458

[7] Zhao C, Huang Y D. Infrared and visible image fusion via rolling guidance filtering and hybrid multi-scale decomposition[J]. Laser & Optoelectronics Progress, 56, 141007(2019).

[8] Qu G H, Zhang D L, Yan P F et al. Medical image fusion by wavelet transform modulus maxima[J]. Optics Express, 9, 184-190(2001). http://www.ncbi.nlm.nih.gov/pubmed/19421288

[9] Choi M, Kim R Y, Nam M R et al. Fusion of multispectral and panchromatic satellite images using the curvelet transform[J]. IEEE Geoscience and Remote Sensing Letters, 2, 136-140(2005).

[10] Naidu V P S. Image fusion technique using multi-resolution singular value decomposition[J]. Defence Science Journal, 61, 479(2011). http://www.researchgate.net/publication/278008876_Image_Fusion_Technique_using_Multi-resolution_Singular_Value_Decomposition

[11] Li H, Wu X J, Kittler J. Infrared and visible image fusion using a deep learning framework[C]. //2018 24th International Conference on Pattern Recognition (ICPR), August 20-24, 2018, Beijing, China, 2705-2710(2018).

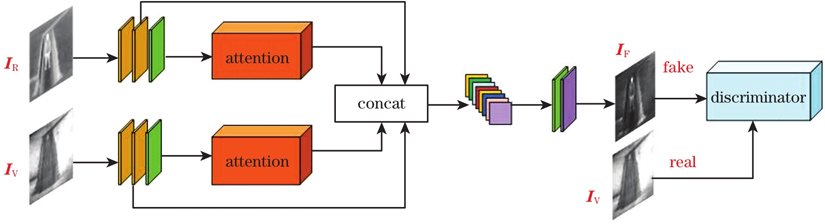

[12] Ma J Y, Yu W, Liang P W et al. FusionGAN: a generative adversarial network for infrared and visible image fusion[J]. Information Fusion, 48, 11-26(2019). http://www.sciencedirect.com/science/article/pii/S1566253518301143

[13] Li H, Wu X J. DenseFuse: a fusion approach to infrared and visible images[J]. IEEE Transactions on Image Processing, 28, 2614-2623(2019). http://www.ncbi.nlm.nih.gov/pubmed/30575534

[14] Zhang H, Xu H, Xiao Y et al. Rethinking the image fusion: a fast unified image fusion network based on proportional maintenance of gradient and intensity[J]. Proceedings of the AAAI Conference on Artificial Intelligence, 34, 12797-12804(2020). http://www.researchgate.net/publication/342311360_Rethinking_the_Image_Fusion_A_Fast_Unified_Image_Fusion_Network_based_on_Proportional_Maintenance_of_Gradient_and_Intensity

[15] Tang C Y, Pu S L, Ye P Z et al. Fusion of low-illuminance visible and near-infrared images based on convolutional neural networks[J]. Acta Optica Sinica, 40, 1610001(2020).

[16] Zhao L. Research on multimodal data fusion methods[D], 23-24(2018).

[17] He Q, Li Y, Song W et al. Multimodal remote sensing image classification with small sample size based on high-level feature fusion[J]. Laser & Optoelectronics Progress, 56, 111001(2019).

[18] Zhu D T. Traffic signs recognition based on deep learning[D], 30-58(2018).

[19] Kamnitsas K, Ledig C, Newcombe V F J et al. Efficient multi-scale 3D CNN with fully connected CRF for accurate brain lesion segmentation[J]. Medical Image Analysis, 36, 61-78(2017). http://www.sciencedirect.com/science/article/pii/S1361841516301839?via=ihub

[20] Toet A. TNO image fusion dataset[DB/OL]. (2014-04-26) [2020-08-10]. https://figshare.com/articles/TN_Image_Fusion_Dataset/1008029

[21] Li H, Wu X J. Infrared and visible image fusion using latent low-rank representation[EB/OL]. (2019-08-09) [2020-08-10]. https://arxiv.org/abs/1804.08992

[22] Chen Y, Blum R S. A new automated quality assessment algorithm for image fusion[J]. Image and Vision Computing, 27, 1421-1432(2009). http://www.sciencedirect.com/science/article/pii/S026288560700220X

[23] Chen H, Varshney P K. A human perception inspired quality metric for image fusion based on regional information[J]. Information Fusion, 8, 193-207(2007). http://dl.acm.org/citation.cfm?id=1225218