Hao Wen, Yang Cao, Yuchao Dang. Research on DNN-NOMS decoding method of polarization code in wireless optical communication[J]. Infrared and Laser Engineering, 2022, 51(5): 20210420

- Infrared and Laser Engineering

- Vol. 51, Issue 5, 20210420 (2022)

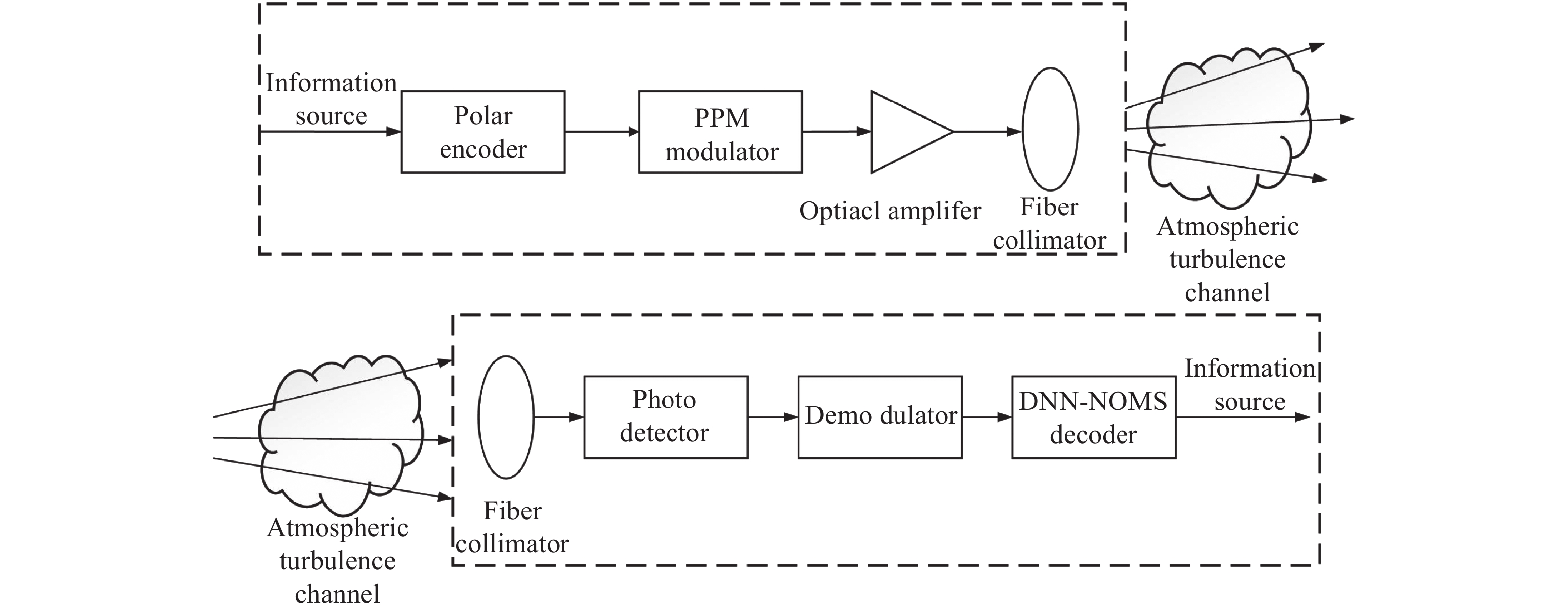

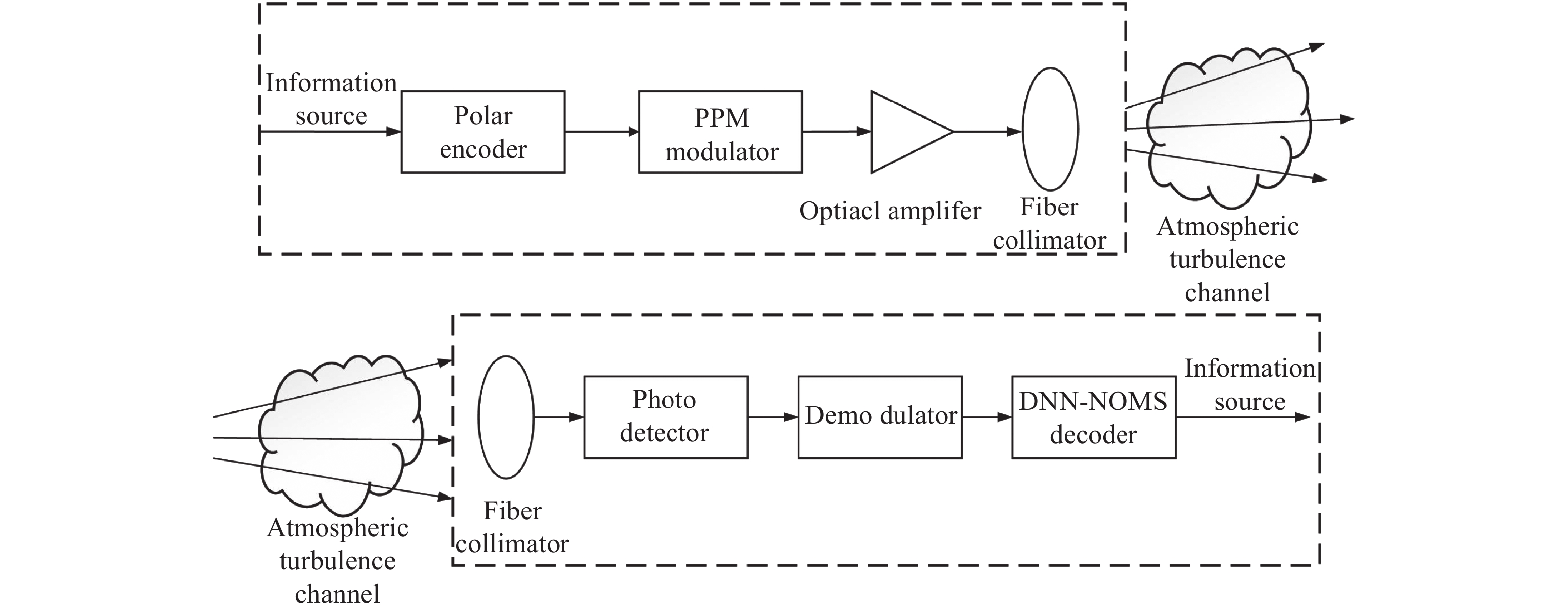

Fig. 1. DNN neural network model assisted system model for polarization code decoding

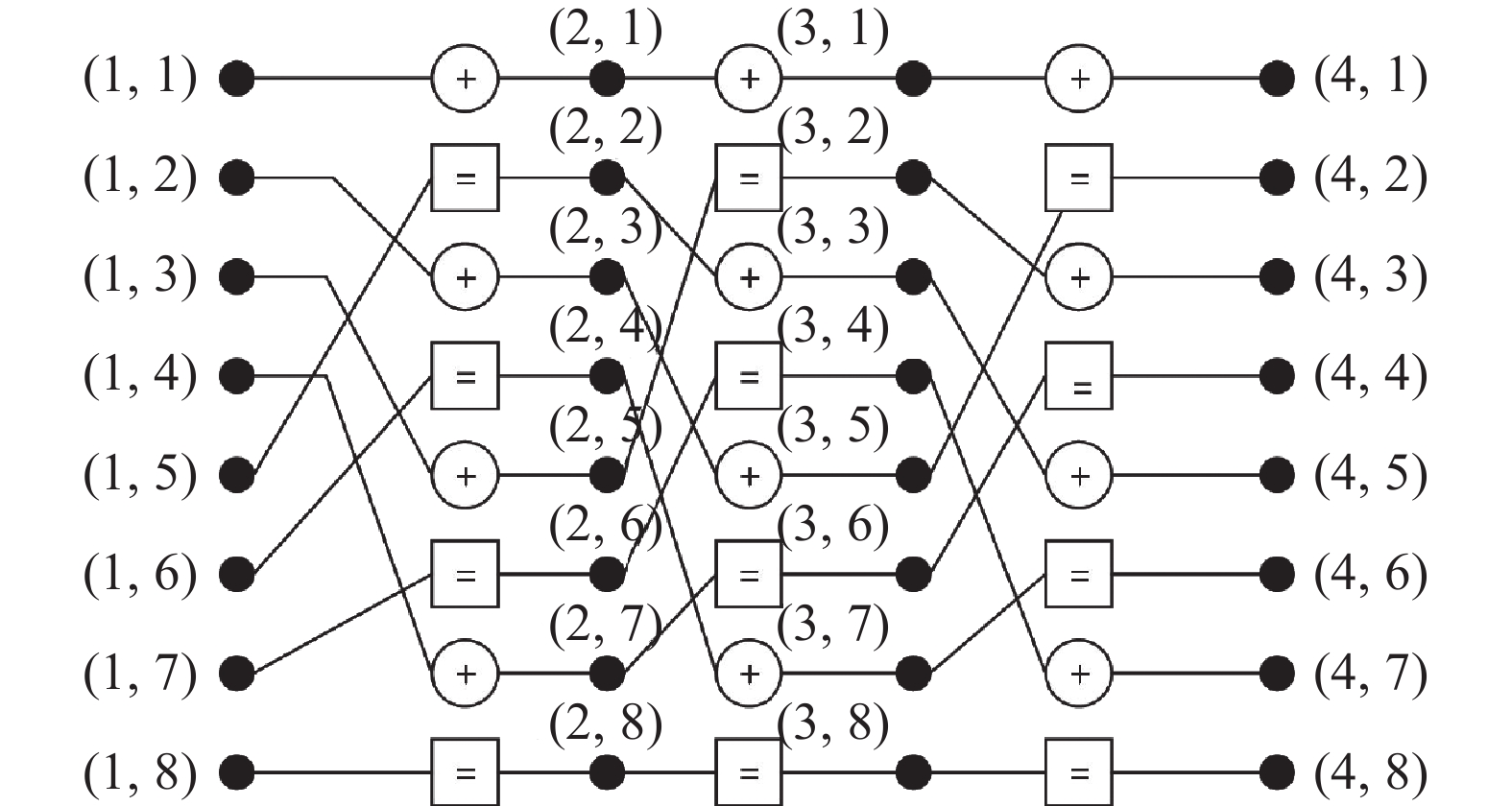

Fig. 2. (8,4) factor diagram of polarization code BP decoding method

Fig. 3. (8,4) PE information transmission process of the processing unit of the polarization code

Fig. 4. (8,4) Dense Tanner graph (a) and sparse Tanner graph (b) of polarization codes

Fig. 5. (8,4) sparse neural network decoding structure of polarization codes

Fig. 6. Structure diagram of DNN-NOMS neural network decoder

Fig. 7. Comparison of the decoding performance of the neural network model with different numbers of network layers

Fig. 8. Evolution of loss function and training parameters

Fig. 9. Performance comparison of different BP decoding methods

Fig. 10. Performance comparison of decoding methods under different code rates

Fig. 11. Performance comparison of decoding methods under different turbulence intensities

Table 1. Symbols used in the NOMS decoding method

Table 2. Simulation parameters

Table 3. Calculation of optimal factor parameters under different turbulence intensity conditions

Table 4. Comparison of decoding complexity of different modified BP decoding methods
