Yuan Deng, Yiping Shi, Jie Liu, Yueying Jiang, Yamei Zhu, Jin Liu. Multi-Angle Facial Expression Recognition Algorithm Combined with Dual-Channel WGAN-GP[J]. Laser & Optoelectronics Progress, 2022, 59(18): 1810013

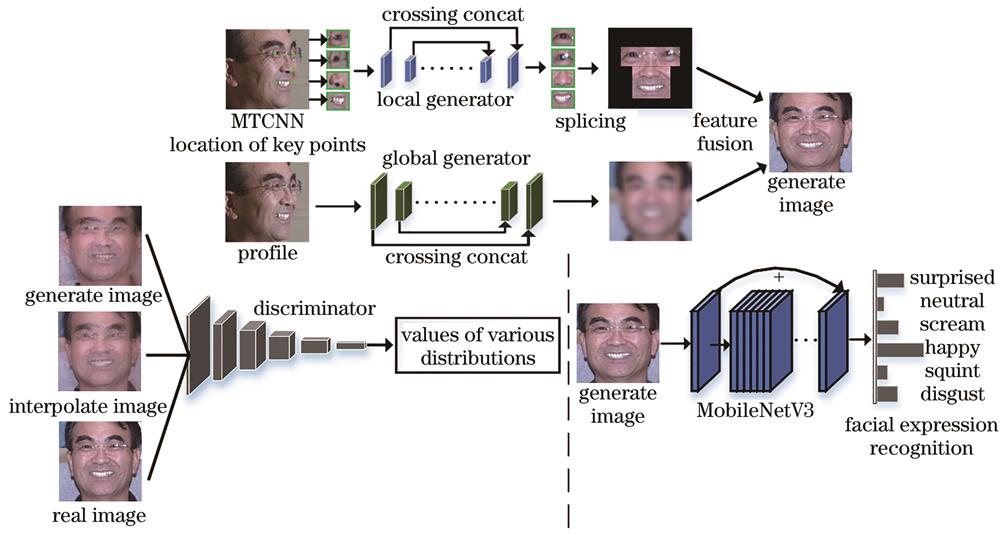

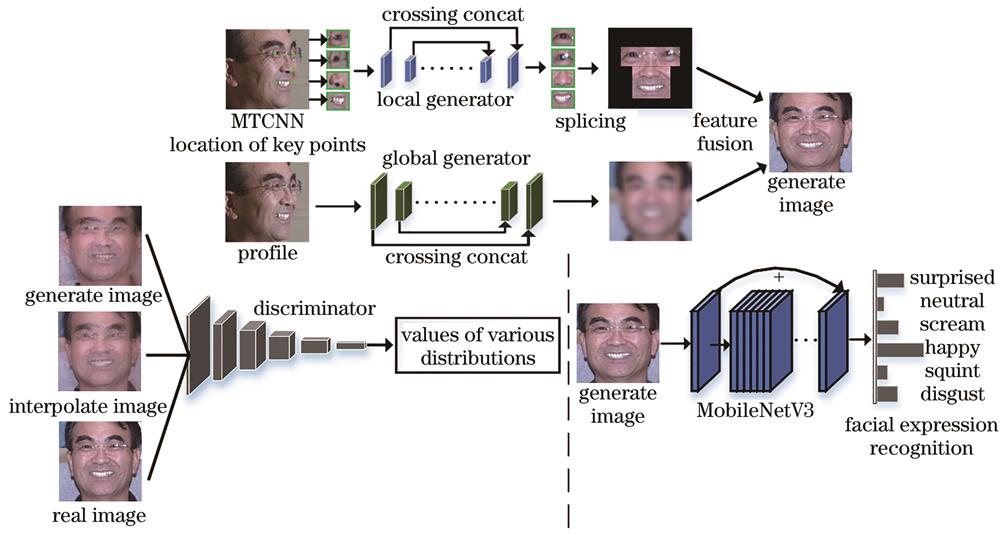

Fig. 1. Overall structure of proposed model

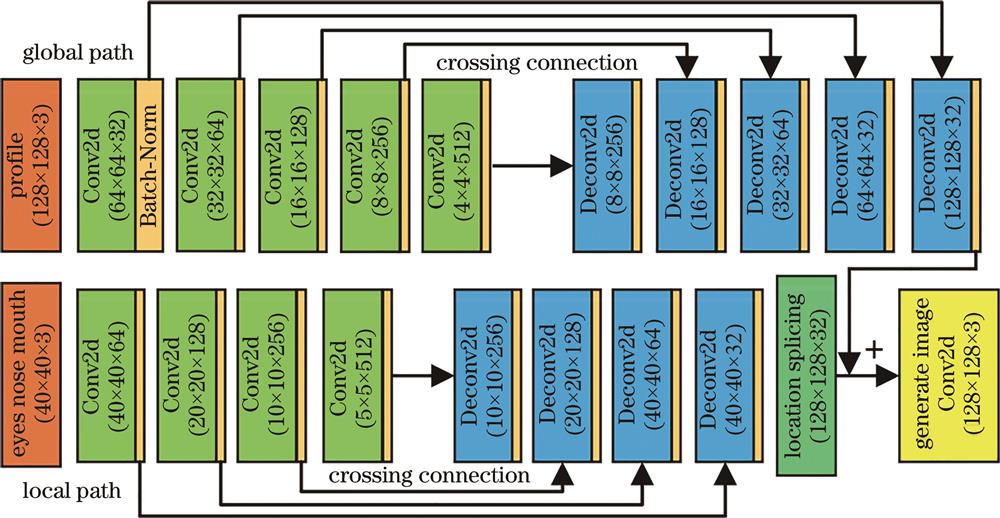

Fig. 2. Dual-channel generator network structure

Fig. 3. Discriminator network structure

Fig. 4. Generation effect of frontal expression images on KDEF dataset

Fig. 5. Generation effect of frontal expression images on Multi-PIE dataset

Fig. 6. Confusion matrix of expression recognition under different angles on KDEF dataset. (a) 0° rotation; (b) 45° rotation; (c) 90° rotation

Fig. 7. Partial error samples on KDEF dataset

Fig. 8. Confusion matrix of expression recognition under different angles on Multi-PIE dataset. (a) 0° rotation; (b) 30° rotation; (c) 60° rotation; (d) 90° rotation

Fig. 9. Partial error samples on Multi-PIE dataset

Fig. 10. Comparison of network loss function changes. (a) TP-GAN discriminant loss; (b) TP-GAN adversarial loss; (c) proposed model discriminant loss; (d) proposed model adversarial loss

Fig. 11. Comparison of facial expression image generation effect after model ablation. (a) Profile; (b) without local path; (c) without Wasserstein; (d) without GP; (e) proposed method; (f) real frontal

Fig. 12. Comparison of facial expression recognition rate under different ablation results

Fig. 13. Comparison of frontalization generation effect. (a) Real profile; (b) proposed method; (c) TP-GAN[11]; (d) CAPG-GAN[9]; (e) FI-GAN[23]; (f) MTDNN[24]; (g) 3DMM[25]; (h) real frontal

Table 1. Structure of improved MobileNetV3

Table 2. Multi-angle facial expression recognition rate on KDEF dataset

Table 3. Multi-angle facial expression recognition rate on Multi-PIE dataset

Table 4. Expression recognition rate under different loss functions on Multi-PIE dataset

Table 5. Recognition results of frontal expressions under different models

Table 6. Comparison of multi-angle facial expression recognition rate with existing methods on KDEF dataset

Table 7. Comparison of multi-angle facial expression recognition rate with existing methods on Multi-PIE dataset
