Author Affiliations

1. Key Laboratory of Digital Earth, Aerospace Information Research Institute, Chinese Academy of Sciences, Beijing 100094, China
2. School of Electronic, Electrical and Communication Engineering, University of Chinese Academy of Sciences, Beijing 100049, China
3. Institute of Forest Resource Information Techniques CAF, Beijing 100091, China

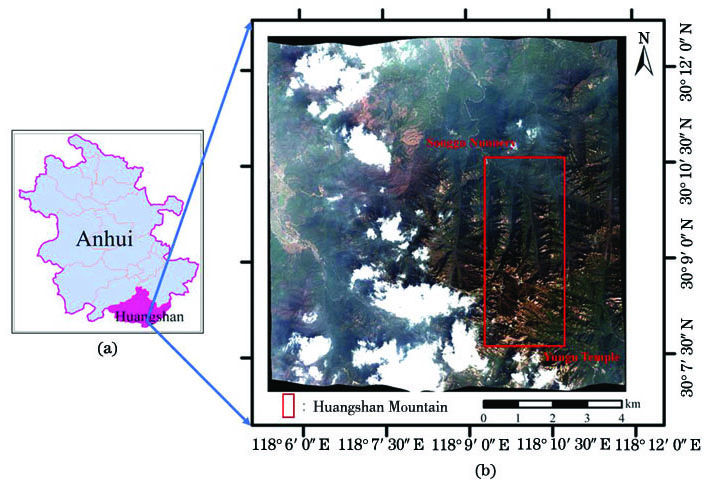

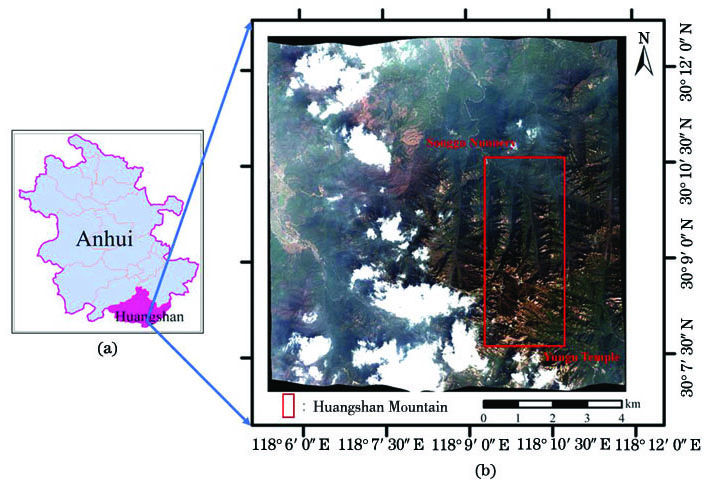

Fig. 1. Location of the research area. (a) Huangshan City, Anhui Province; (b) true-color WorldView-3 image, in which the box marks the location of Huangshan Mountain

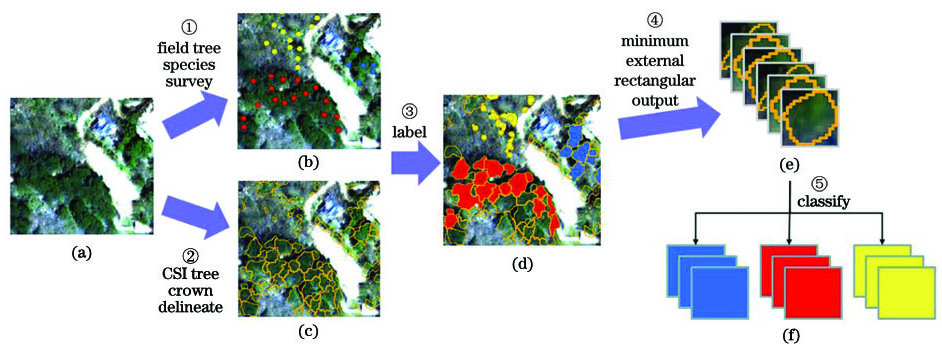

Fig. 2. Steps in constructing the sample set of individual-tree remote sensing images by species. (a) Remote sensing image of the research area; (b) tree species distribution map; (c) tree crown delineation; (d) tree crown category labels; (e) remote sensing images of individual trees; (f) sample set of individual-tree remote sensing images by species

Fig. 3. Class labels of the individual-tree remote sensing image sample set

Fig. 4. Bar chart of the training accuracy, validation accuracy, and number of network layers of each CNN model at convergence

Fig. 5. Tree species classification map of Huangshan Mountain

| Tree species | Training set (before) | Training set (after) | Validation set (before) | Validation set (after) | Test set |
|---|---|---|---|---|---|
| Ph.h | 66 | 396 | 23 | 138 | 23 |
| E.a | 266 | 1596 | 89 | 534 | 89 |
| C.l | 83 | 498 | 28 | 168 | 28 |
| Pi.h | 1199 | 7194 | 401 | 2406 | 401 |
| D.a | 369 | 2214 | 124 | 744 | 124 |
| Total | 1983 | 11898 | 665 | 3990 | 665 |

Table 1. Results of sample set division before and after data augmentation
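Table 1 implies that augmentation multiplied every training and validation class by exactly six, while the test set was left unaugmented. The sketch below checks that factor against the table and shows one hypothetical way to obtain six variants per patch: the original plus three 90° rotations and two mirror flips. The actual transforms are not stated in this section, so `augment`, `rot90`, and the choice of transforms are illustrative assumptions. Patches are plain nested lists of shape H × W × bands, so a 32×32×8 input patch fits directly.

```python
"""Hypothetical 6-fold augmentation consistent with the counts in Table 1."""

# Counts from Table 1: (train_before, train_after, val_before, val_after, test)
TABLE1 = {
    "Ph.h": (66, 396, 23, 138, 23),
    "E.a": (266, 1596, 89, 534, 89),
    "C.l": (83, 498, 28, 168, 28),
    "Pi.h": (1199, 7194, 401, 2406, 401),
    "D.a": (369, 2214, 124, 744, 124),
}


def augmentation_factors(table):
    """Return the set of after/before ratios for the training and validation sets."""
    ratios = set()
    for train_b, train_a, val_b, val_a, _test in table.values():
        ratios.add(train_a / train_b)
        ratios.add(val_a / val_b)
    return ratios


def rot90(patch):
    """Rotate an H x W x B patch 90 degrees counter-clockwise; band vectors stay intact."""
    return [list(column) for column in zip(*patch)][::-1]


def augment(patch):
    """Assumed transforms: identity, three rotations, left-right and up-down flips."""
    r90 = rot90(patch)
    r180 = rot90(r90)
    r270 = rot90(r180)
    flip_lr = [row[::-1] for row in patch]
    flip_ud = patch[::-1]
    return [patch, r90, r180, r270, flip_lr, flip_ud]


print(augmentation_factors(TABLE1))  # {6.0}

# A toy 4 x 4 patch with 8 bands; each value encodes (row, column, band).
toy = [[[100 * r + 10 * c + b for b in range(8)] for c in range(4)] for r in range(4)]
print(len(augment(toy)))  # 6
```

Rotations and flips are a common choice for overhead imagery because a tree crown seen from above has no canonical orientation, so these variants add examples without changing the class label.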

| Layer | Output size | Parameter |
|---|---|---|
| Input | 32×32×8 | - |
| Convolutional C1 | 28×28×6 | Kernel 5×5, filter 6, stride 1, ReLU |
| Pooling S1 | 14×14×6 | Average_pooling 2×2, stride 2 |
| Convolutional C2 | 10×10×16 | Kernel 5×5, filter 16, stride 1, ReLU |
| Pooling S2 | 5×5×16 | Average_pooling 2×2, stride 2 |
| Convolutional C3 | 1×1×120 | Kernel 5×5, filter 120, stride 1, ReLU |
| Fully-connected F1 | 84 | Node 84, FC, ReLU |
| Classification | 5 | Node 5, FC, Softmax |

Table 2. LeNet5_relu model parameters
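Every entry in Table 2 can be checked with two standard formulas: an unpadded k×k window with stride s maps a side of n pixels to ⌊(n - k)/s⌋ + 1, and a biased convolution has k·k·C_in·C_out + C_out parameters. The sketch below assumes no padding and biases on every layer (inferred from the 32 → 28 → 14 output sizes and from Table 8, not stated explicitly); the helper names are illustrative. Under those assumptions it reproduces the 62,331 parameters reported for LeNet5_relu.

```python
def valid_out(n, k, s=1):
    """Side length after an unpadded k x k window with stride s."""
    return (n - k) // s + 1


def conv_params(k, c_in, c_out):
    """Weights plus one bias per filter for a k x k convolution."""
    return k * k * c_in * c_out + c_out


def fc_params(n_in, n_out):
    """Weights plus biases for a fully-connected layer."""
    return n_in * n_out + n_out


def lenet5_relu():
    """Trace Table 2 layer by layer; return (final feature shape, total parameters)."""
    n, c, total = 32, 8, 0                       # 32 x 32 x 8 input patch
    # (kernel, filters, followed by pooling?) for C1, C2, C3
    for k, filters, pooled in [(5, 6, True), (5, 16, True), (5, 120, False)]:
        total += conv_params(k, c, filters)
        n, c = valid_out(n, k), filters
        if pooled:
            n = valid_out(n, 2, 2)               # S1 / S2: 2 x 2 average pooling, stride 2
    total += fc_params(n * n * c, 84)            # F1
    total += fc_params(84, 5)                    # softmax classifier
    return (n, n, c), total


print(lenet5_relu())  # ((1, 1, 120), 62331)
```

Most of the budget sits in C3 (48,120 parameters), because its 5×5 kernel spans all 16 input maps for each of its 120 filters.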

| Layer | Output size | Parameter |
|---|---|---|
| Input | 32×32×8 | - |
| Convolutional C1 | 32×32×12 | Kernel 7×7, filter 12, stride 1, ReLU |
| Pooling S1 | 15×15×12 | Average_pooling 3×3, stride 2 |
| Convolutional C2 | 15×15×36 | Kernel 5×5, filter 36, stride 1, ReLU |
| Pooling S2 | 7×7×36 | Average_pooling 3×3, stride 2 |
| Convolutional C3 | 7×7×54 | Kernel 3×3, filter 54, stride 1, ReLU |
| Convolutional C4 | 7×7×54 | Kernel 3×3, filter 54, stride 1, ReLU |
| Convolutional C5 | 7×7×36 | Kernel 3×3, filter 36, stride 1, ReLU |
| Pooling S3 | 3×3×36 | Average_pooling 3×3, stride 2 |
| Fully-connected F1 | 320 | Node 320, FC, ReLU |
| Fully-connected F2 | 100 | Node 100, FC, ReLU |
| Classification | 5 | Node 5, FC, Softmax |

Table 3. AlexNet_mini model parameters
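The same bookkeeping applies to AlexNet_mini, assuming 'same' padding for the convolutions (they keep the 32×32, 15×15, and 7×7 grids) and no padding for the 3×3, stride-2 average pooling (32 → 15 → 7 → 3). Under those assumptions C5 keeps a 7×7×36 map, S3 reduces it to 3×3×36 (324 features), and the total comes to 213,537, matching Table 8. The function names are illustrative.

```python
def pool_out(n, k=3, s=2):
    """Side length after an unpadded k x k pooling window with stride s."""
    return (n - k) // s + 1


def conv_params(k, c_in, c_out):
    return k * k * c_in * c_out + c_out          # weights + biases


def alexnet_mini():
    n, c, total = 32, 8, 0
    # ("conv", kernel, filters) keeps the grid ('same' padding); ("pool",) shrinks it.
    layers = [("conv", 7, 12), ("pool",), ("conv", 5, 36), ("pool",),
              ("conv", 3, 54), ("conv", 3, 54), ("conv", 3, 36), ("pool",)]
    for layer in layers:
        if layer[0] == "conv":
            _, k, filters = layer
            total += conv_params(k, c, filters)
            c = filters
        else:
            n = pool_out(n)
    features = n * n * c                           # flattened S3 output
    for n_in, n_out in [(features, 320), (320, 100), (100, 5)]:
        total += n_in * n_out + n_out              # F1, F2, classifier
    return (n, n, c), total


print(alexnet_mini())  # ((3, 3, 36), 213537)
```

F1 alone accounts for 104,000 of these parameters, which is why AlexNet_mini is larger than the much deeper GoogLeNet_mini56 and DenseNet_BC_mini56 in Table 8.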

| Layer | Output size | Parameter |
|---|---|---|
| Input | 32×32×8 | -- |
| Convolutional C1 | 32×32×12 | Kernel 7×7, filter 12, stride 1 |
| Inception V1 block (1a) | 32×32×32 | -- |
| Inception V1 block (1b) | 32×32×60 | -- |
| Pooling S1 | 15×15×60 | Max_pooling 3×3, stride 2 |
| Inception V1 block (2a) | 15×15×64 | -- |
| Inception V1 block (2b) | 15×15×64 | -- |
| Inception V1 block (2c) | 15×15×64 | -- |
| Inception V1 block (2d) | 15×15×66 | -- |
| Inception V1 block (2e) | 15×15×104 | -- |
| Pooling S2 | 7×7×104 | Max_pooling 3×3, stride 2 |
| Inception V1 block (3a) | 7×7×104 | -- |
| Inception V1 block (3b) | 7×7×128 | -- |
| Pooling S3 | 1×1×128 | Average_pooling 7×7, stride 1 |
| Classification | 5 | Node 5, FC, Softmax |

Table 4. GoogLeNet_mini56 model parameters

| Layer | Ⅰ: Convolutional kernel 1×1 | Ⅱ: Bottleneck kernel 1×1 | Ⅱ: Convolutional kernel 3×3 | Ⅲ: Bottleneck kernel 1×1 | Ⅲ: Convolutional kernel 5×5 | Ⅳ: Pooling 3×3 | Ⅳ: Bottleneck kernel 1×1 |
|---|---|---|---|---|---|---|---|
| 1a | Filter 8 | Filter 12 | Filter 16 | Filter 2 | Filter 4 | Stride 1 | Filter 4 |
| 1b | Filter 16 | Filter 16 | Filter 24 | Filter 4 | Filter 12 | Stride 1 | Filter 8 |
| 2a | Filter 24 | Filter 12 | Filter 26 | Filter 2 | Filter 6 | Stride 1 | Filter 8 |
| 2b | Filter 20 | Filter 14 | Filter 28 | Filter 3 | Filter 8 | Stride 1 | Filter 8 |
| 2c | Filter 16 | Filter 16 | Filter 32 | Filter 3 | Filter 8 | Stride 1 | Filter 8 |
| 2d | Filter 14 | Filter 18 | Filter 36 | Filter 4 | Filter 8 | Stride 1 | Filter 8 |
| 2e | Filter 32 | Filter 20 | Filter 40 | Filter 4 | Filter 16 | Stride 1 | Filter 16 |
| 3a | Filter 32 | Filter 20 | Filter 40 | Filter 4 | Filter 16 | Stride 1 | Filter 16 |
| 3b | Filter 48 | Filter 24 | Filter 48 | Filter 6 | Filter 16 | Stride 1 | Filter 16 |

Table 5. Inception V1 block parameters
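An Inception V1 block concatenates its four branches, so its output depth is Ⅰ + Ⅱ(3×3) + Ⅲ(5×5) + Ⅳ(1×1); for block 1a that is 8 + 16 + 4 + 4 = 32, as listed for GoogLeNet_mini56. The sketch below applies this rule to every row of Table 5 and also totals the parameters. It assumes biased convolutions, 'same' padding inside each block, and a batch-normalization layer after C1 only; the last point is an inference from the 24 non-trainable parameters in Table 8 (12 moving means plus 12 moving variances). Under these assumptions the total is 97,251 and the weight-layer count is 1 + 9 × 6 + 1 = 56, both matching Table 8. The function names are illustrative.

```python
# Table 5 filters per block: (I 1x1, II reduce, II 3x3, III reduce, III 5x5, IV 1x1)
BLOCKS = [
    ("1a", (8, 12, 16, 2, 4, 4)),
    ("1b", (16, 16, 24, 4, 12, 8)),
    ("2a", (24, 12, 26, 2, 6, 8)),
    ("2b", (20, 14, 28, 3, 8, 8)),
    ("2c", (16, 16, 32, 3, 8, 8)),
    ("2d", (14, 18, 36, 4, 8, 8)),
    ("2e", (32, 20, 40, 4, 16, 16)),
    ("3a", (32, 20, 40, 4, 16, 16)),
    ("3b", (48, 24, 48, 6, 16, 16)),
]


def conv(k, c_in, c_out):
    return k * k * c_in * c_out + c_out          # weights + biases


def inception(c_in, filters):
    """Return (output depth, parameter count) of one Inception V1 block."""
    b1, r3, c3, r5, c5, proj = filters
    params = (conv(1, c_in, b1)
              + conv(1, c_in, r3) + conv(3, r3, c3)
              + conv(1, c_in, r5) + conv(5, r5, c5)
              + conv(1, c_in, proj))             # the 3x3 pooling itself has no weights
    return b1 + c3 + c5 + proj, params


def googlenet_mini56():
    c = 12
    total = conv(7, 8, 12)                       # C1
    total += 4 * c                               # assumed BN after C1: gamma, beta, mean, variance
    non_trainable = 2 * c                        # moving mean + moving variance
    depths = []
    for _name, filters in BLOCKS:
        c, params = inception(c, filters)
        depths.append(c)
        total += params
    total += c * 5 + 5                           # classifier after 7x7 average pooling
    layers = 1 + 6 * len(BLOCKS) + 1             # C1 + six convs per block + FC
    return depths, total, non_trainable, layers


depths, total, non_trainable, layers = googlenet_mini56()
print(depths)  # [32, 60, 64, 64, 64, 66, 104, 104, 128]
print(total, total - non_trainable, layers)  # 97251 97227 56
```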

| Stage | Layer | Output size | Parameter | Filter Ⅰ: bottleneck kernel 1×1 | Filter Ⅱ: convolutional kernel 3×3 | Filter Ⅲ: bottleneck kernel 1×1 |
|---|---|---|---|---|---|---|
| -- | Input | 32×32×8 | -- | -- | -- | -- |
| -- | Convolutional C1 | 16×16×12 | Kernel 7×7, filter 12, stride 2 | -- | -- | -- |
| Residual block (1) | Pooling S1 | 8×8×12 | Max_pooling 3×3, stride 2 | -- | -- | -- |
| Residual block (1) | Bottleneck | 8×8×12 | Repeated ×3 | 12 | -- | -- |
| Residual block (1) | Convolutional | 8×8×12 | Repeated ×3 | -- | 12 | -- |
| Residual block (1) | Bottleneck | 8×8×48 | Repeated ×3 | -- | -- | 48 |
| Residual block (2) | Bottleneck | 4×4×24 | Repeated ×4 | 24 | -- | -- |
| Residual block (2) | Convolutional | 4×4×24 | Repeated ×4 | -- | 24 | -- |
| Residual block (2) | Bottleneck | 4×4×96 | Repeated ×4 | -- | -- | 96 |
| Residual block (3) | Bottleneck | 2×2×48 | Repeated ×8 | 48 | -- | -- |
| Residual block (3) | Convolutional | 2×2×48 | Repeated ×8 | -- | 48 | -- |
| Residual block (3) | Bottleneck | 2×2×192 | Repeated ×8 | -- | -- | 192 |
| Residual block (4) | Bottleneck | 1×1×96 | Repeated ×3 | 96 | -- | -- |
| Residual block (4) | Convolutional | 1×1×96 | Repeated ×3 | -- | 96 | -- |
| Residual block (4) | Bottleneck | 1×1×384 | Repeated ×3 | -- | -- | 384 |
| Classification | Pooling | 384 | Global_average_pooling | -- | -- | -- |
| Classification | Fully-connected | 5 | Node 5, FC, Softmax | -- | -- | -- |

Table 6. ResNet_mini56 model parameters
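Table 6 follows the ResNet bottleneck design: each unit stacks a 1×1 reduction (Ⅰ), a 3×3 convolution (Ⅱ), and a 1×1 expansion (Ⅲ) to four times the reduced width, and the spatial grid halves from one residual block to the next. The sketch below traces those output sizes and counts weight layers as 1 + 3 × (3 + 4 + 8 + 3) + 1 = 56. It assumes 'same' padding and that the stride-2 downsampling sits in the first unit of residual blocks (2) to (4); that is the usual ResNet arrangement but is not stated in the table. Shortcut projections are not counted as layers, and the function name is illustrative.

```python
import math

# (bottleneck width from column I, number of units, stride of the first unit)
STAGES = [(12, 3, 1), (24, 4, 2), (48, 8, 2), (96, 3, 2)]


def same_out(n, stride):
    """Side length after a 'same'-padded layer with the given stride."""
    return math.ceil(n / stride)


def resnet_mini56_shapes():
    n = same_out(32, 2)          # C1: 7x7, stride 2 -> 16
    n = same_out(n, 2)           # S1: 3x3 max pooling, stride 2 -> 8
    shapes, layers = [], 1       # C1 counts as one weight layer
    for width, units, stride in STAGES:
        n = same_out(n, stride)
        shapes.append((n, n, 4 * width))   # column III = 4 x column I
        layers += 3 * units                # three convolutions per unit
    layers += 1                            # softmax classifier after global pooling
    return shapes, layers


shapes, layers = resnet_mini56_shapes()
print(shapes)  # [(8, 8, 48), (4, 4, 96), (2, 2, 192), (1, 1, 384)]
print(layers)  # 56
```

Because the input patch is only 32×32, residual block (4) already operates on a 1×1 grid, so its 3×3 convolutions see a single spatial position.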

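| Stage | Layer | Output size | Parameter | Filter Ⅰ: bottleneck kernel 1×1 | Filter Ⅱ: convolutional kernel 3×3 |
|---|---|---|---|---|---|
| -- | Input | 32×32×8 | -- | -- | -- |
| -- | Convolutional C1 | 32×32×12 | Kernel 3×3, filter 12, stride 1 | -- | -- |
| Dense block (1) | Bottleneck | 32×32×42 (block output) | Repeated ×5 | 24 | -- |
| Dense block (1) | Convolutional | -- | Repeated ×5 | -- | 6 |
| Compression (1) | Convolutional | 32×32×21 | Kernel 1×1 | -- | -- |
| Compression (1) | Pooling | 16×16×21 | Average_pooling 2×2, stride 2 | -- | -- |
| Dense block (2) | Bottleneck | 16×16×51 (block output) | Repeated ×5 | 24 | -- |
| Dense block (2) | Convolutional | -- | Repeated ×5 | -- | 6 |
| Compression (2) | Convolutional | 16×16×36 | Kernel 1×1 | -- | -- |
| Compression (2) | Pooling | 8×8×36 | Average_pooling 2×2, stride 2 | -- | -- |
| Dense block (3) | Bottleneck | 8×8×66 (block output) | Repeated ×5 | 24 | -- |
| Dense block (3) | Convolutional | -- | Repeated ×5 | -- | 6 |
| Compression (3) | Convolutional | 8×8×51 | Kernel 1×1 | -- | -- |
| Compression (3) | Pooling | 4×4×51 | Average_pooling 2×2, stride 2 | -- | -- |
| Dense block (4) | Bottleneck | 4×4×81 (block output) | Repeated ×5 | 24 | -- |
| Dense block (4) | Convolutional | -- | Repeated ×5 | -- | 6 |
| Compression (4) | Convolutional | 4×4×66 | Kernel 1×1 | -- | -- |
| Compression (4) | Pooling | 2×2×66 | Average_pooling 2×2, stride 2 | -- | -- |
| Dense block (5) | Bottleneck | 2×2×96 (block output) | Repeated ×5 | 24 | -- |
| Dense block (5) | Convolutional | -- | Repeated ×5 | -- | 6 |
| Classification | Pooling | 96 | Global_average_pooling | -- | -- |
| Classification | Fully-connected | 5 | Node 5, FC, Softmax | -- | -- |

Table 7. DenseNet_BC_mini56 model parameters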
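In a DenseNet-BC block every layer appends k new feature maps to its input, so a block of L layers turns C channels into C + L·k. With growth rate k = 6 (column Ⅱ), a 4k = 24 bottleneck (column Ⅰ), and L = 5, Dense block (1) turns the 12 channels from C1 into 12 + 5 × 6 = 42, and each compression layer then fixes the depth entering the next block. The sketch below totals the parameters under assumed DenseNet-BC conventions: pre-activation BN-ReLU-Conv ordering, a 3×3 second convolution, convolutions without bias, a biased softmax layer, and the compression widths listed in Table 7. With those assumptions it reproduces all three counts in Table 8 (82,979 total, 78,839 trainable, 4,140 non-trainable), gives 66 + 5 × 6 = 96 channels after Dense block (5), and counts 1 + 5 × 5 × 2 + 4 + 1 = 56 weight layers. The names are illustrative.

```python
GROWTH = 6                            # k: feature maps added per dense layer (column II)
BOTTLENECK = 4 * GROWTH               # 24: width of the 1x1 bottleneck (column I)
LAYERS_PER_BLOCK = 5                  # "x5" in Table 7
COMPRESSION_OUT = [21, 36, 51, 66]    # depth after Compression (1) to (4)


def bn(channels):
    """Batch norm: (trainable gamma + beta, non-trainable moving mean + variance)."""
    return 2 * channels, 2 * channels


def densenet_bc_mini56():
    c = 12
    trainable, frozen = 3 * 3 * 8 * 12, 0       # C1: 3x3 conv, 8 -> 12 channels, no bias
    block_outputs = []
    for block in range(5):
        for _ in range(LAYERS_PER_BLOCK):
            t, f = bn(c)                         # BN-ReLU on the concatenated input
            trainable += t + c * BOTTLENECK      # 1x1 bottleneck conv
            frozen += f
            t, f = bn(BOTTLENECK)                # BN-ReLU on the bottleneck output
            trainable += t + 9 * BOTTLENECK * GROWTH   # 3x3 conv producing k maps
            frozen += f
            c += GROWTH                          # concatenation grows the depth by k
        block_outputs.append(c)
        if block < len(COMPRESSION_OUT):         # BN-ReLU, 1x1 conv, 2x2 average pooling
            t, f = bn(c)
            trainable += t + c * COMPRESSION_OUT[block]
            frozen += f
            c = COMPRESSION_OUT[block]
    trainable += c * 5 + 5                       # softmax layer (with bias) after global pooling
    return block_outputs, trainable + frozen, trainable, frozen


print(densenet_bc_mini56())  # ([42, 51, 66, 81, 96], 82979, 78839, 4140)
```

Feature reuse through concatenation is what keeps this 56-layer network at roughly a tenth of the ResNet_mini56 parameter count in Table 8.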

| Model name | Total parameters | Trainable parameters | Non-trainable parameters | Network layers |
|---|---|---|---|---|
| LeNet5_relu | 62331 | 62331 | 0 | 5 |
| AlexNet_mini | 213537 | 213537 | 0 | 8 |
| GoogLeNet_mini56 | 97251 | 97227 | 24 | 56 |
| ResNet_mini56 | 934025 | 924401 | 9624 | 56 |
| DenseNet_BC_mini56 | 82979 | 78839 | 4140 | 56 |

Table 8. CNN model parameters

| Model name | Evaluation index | Ph.h | E.a | C.l | Pi.h | D.a | Overall accuracy /% | Kappa coefficient |
|---|---|---|---|---|---|---|---|---|
| LeNet5_relu | Producer accuracy /% | 86.96 | 67.42 | 75.00 | 98.00 | 87.90 | 90.68 | 0.84 |
| | User accuracy /% | 90.91 | 76.92 | 87.50 | 93.57 | 90.08 | | |
| AlexNet_mini | Producer accuracy /% | 86.96 | 71.91 | 64.29 | 97.76 | 92.74 | 91.58 | 0.85 |
| | User accuracy /% | 90.91 | 79.01 | 78.26 | 94.00 | 94.26 | | |
| GoogLeNet_mini56 | Producer accuracy /% | 95.65 | 74.16 | 75.00 | 97.76 | 97.58 | 93.53 | 0.89 |
| | User accuracy /% | 100.00 | 84.62 | 95.45 | 94.92 | 93.08 | | |
| ResNet_mini56 | Producer accuracy /% | 95.65 | 76.40 | 78.57 | 97.51 | 92.74 | 92.93 | 0.88 |
| | User accuracy /% | 100.00 | 80.95 | 100.00 | 94.90 | 92.00 | | |
| DenseNet_BC_mini56 | Producer accuracy /% | 95.65 | 75.28 | 85.71 | 98.25 | 95.97 | 94.14 | 0.90 |
| | User accuracy /% | 100.00 | 87.01 | 100.00 | 94.71 | 94.44 | | |

Table 9. Classification accuracy evaluation indices of the CNN models
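The indices in Table 9 follow from a confusion matrix. Producer accuracy divides each class's correct count by its reference (test) count, user accuracy divides it by the number of samples predicted as that class, overall accuracy is the diagonal sum over all 665 test samples, and Cohen's kappa corrects overall accuracy for chance agreement: κ = (p_o - p_e) / (1 - p_e), with p_e = Σ r_i·c_i / N² over the row totals r_i and column totals c_i. The per-class counts below are not listed in Table 9; they are back-calculated for DenseNet_BC_mini56 from the test-set sizes in Table 1 and the percentages in Table 9, and the function name is illustrative.

```python
# Back-calculated counts for DenseNet_BC_mini56 (assumed, see lead-in).
SPECIES = ["Ph.h", "E.a", "C.l", "Pi.h", "D.a"]
REFERENCE = [23, 89, 28, 401, 124]     # test samples per class (Table 1)
CORRECT = [22, 67, 24, 394, 119]       # confusion-matrix diagonal
PREDICTED = [22, 77, 24, 416, 126]     # samples assigned to each class


def accuracy_indices(reference, correct, predicted):
    """Return producer and user accuracy (%), overall accuracy (%), and Cohen's kappa."""
    n = sum(reference)
    producer = [100 * c / r for c, r in zip(correct, reference)]
    user = [100 * c / p for c, p in zip(correct, predicted)]
    p_o = sum(correct) / n                                            # observed agreement
    p_e = sum(r * p for r, p in zip(reference, predicted)) / n ** 2   # chance agreement
    kappa = (p_o - p_e) / (1 - p_e)
    return producer, user, 100 * p_o, kappa


producer, user, overall, kappa = accuracy_indices(REFERENCE, CORRECT, PREDICTED)
for name, pa, ua in zip(SPECIES, producer, user):
    print(f"{name}: PA {pa:.2f}%  UA {ua:.2f}%")
print(f"OA {overall:.2f}%  kappa {kappa:.2f}")  # OA 94.14%  kappa 0.90
```

Because Pi.h makes up 401 of the 665 test samples, chance agreement is already about 0.43, which is why kappa (0.90) sits well below overall accuracy (94.14%).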