- High Power Laser Science and Engineering

- Vol. 8, Issue 3, 03000e28 (2020)

Abstract

1 Introduction

Accurate laser pointing is crucial for many applications such as free-space communication[

The artificial neural network technique can establish the connection between the input and the output of systems by learning from datasets, and has been used in many fields for function approximation and pattern recognition[

In this study, a neural network is applied to extract the full information contained in the intensity distribution of a laser spot, so that the position and angular errors of a laser beam can be determined from a single spot image. The datasets for the neural network are obtained by simulating a prototype laser-pointing system with a special setup that includes a tilted charge-coupled device (CCD) detector at a known defocus distance and a small-focal-length lens. This setup is designed to produce spot images with more distinct features for neural network analysis, such as higher intensity contrast and the required spot size. Compared with traditional setups, the current system offers a more compact structure and an alternative route to high measurement accuracy through data methods, so it may have advantages in accuracy, reliability and synchronization in laser-pointing measurement.


2 Neural network method for laser-pointing error measurement

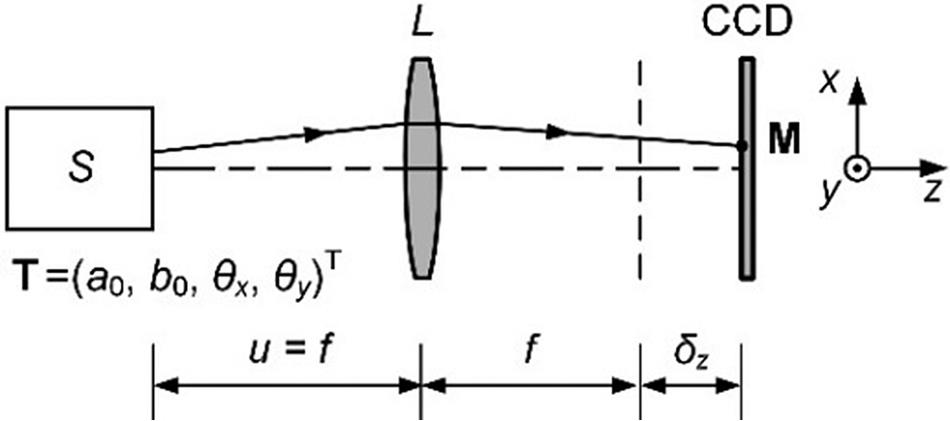

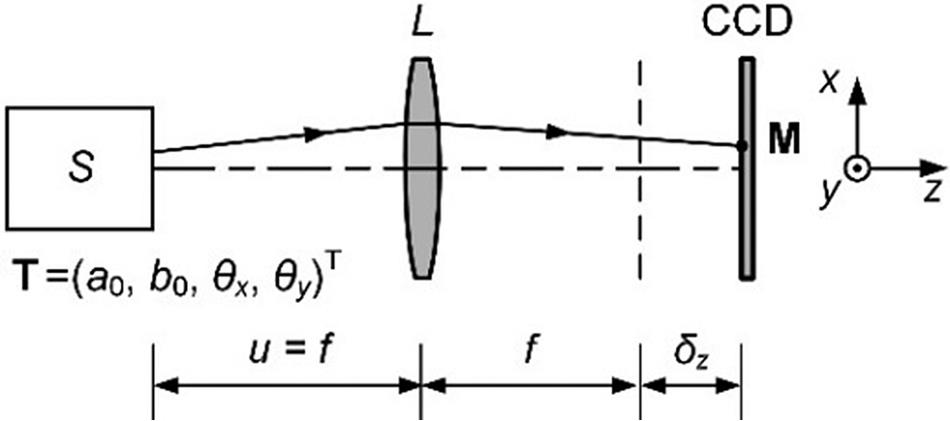

Our prototype laser-pointing system contains a laser source, a thin lens and a CCD detector, as shown in

We chose a laser wavelength of λ = 632.8 nm and a beam waist radius of w01 = 2.0 mm with a Gaussian distribution at the beam waist, corresponding to a typical transverse electromagnetic mode (TEM00) He-Ne laser source. The CCD has a pixel size of 0.0057 mm and a 12-bit output gray level, giving an intensity range of 0–4095. All pixel intensities of an image are integers to simulate analog-to-digital (A/D) conversion, which is equivalent to introducing a detection noise of less than 0.5 gray levels. We limit the position offsets a0 and b0 to the range [−0.5, 0.5] mm and the inclination angles θx and θy to [−25, 25] μrad. To compare the prediction performance for image sets with different system parameters, the intensity of the collimated beam at the center of the CCD is fixed to a particular value by adjusting the intensity of the laser source. A dataset of 12,000 spot images, each a 36 × 36 pixel region paired with the corresponding randomly generated beam tilts, can then be obtained.
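The detector model described above can be illustrated with a minimal sketch. It uses only the pixel size, image size and 12-bit range given in the text; the function name `spot_image`, its arguments, and the choice of an untilted circular Gaussian spot are assumptions made for illustration, not the authors' simulation code.

```python
import math

PIXEL_MM = 0.0057   # CCD pixel size (from the text)
N_PIX = 36          # images are 36 x 36 pixels (from the text)
MAX_LEVEL = 4095    # top of the 12-bit gray-level range (from the text)


def spot_image(x0_mm, y0_mm, w_mm, peak=MAX_LEVEL):
    """Return (quantized, ideal) images of a circular Gaussian spot.

    x0_mm, y0_mm: spot center relative to the image center (hypothetical inputs).
    w_mm: 1/e^2 intensity radius on the detector (hypothetical input).
    peak: intensity at the spot center, in gray levels (hypothetical input).
    """
    half = (N_PIX - 1) / 2  # pixel-center coordinates symmetric about the center
    ideal, quantized = [], []
    for row in range(N_PIX):
        y = (row - half) * PIXEL_MM
        ideal_row = []
        for col in range(N_PIX):
            x = (col - half) * PIXEL_MM
            r2 = (x - x0_mm) ** 2 + (y - y0_mm) ** 2
            ideal_row.append(peak * math.exp(-2.0 * r2 / w_mm ** 2))
        ideal.append(ideal_row)
        # Rounding to the nearest integer stands in for A/D conversion.
        quantized.append([round(v) for v in ideal_row])
    return quantized, ideal


quantized, ideal = spot_image(0.01, -0.02, 0.03)
max_rounding_error = max(
    abs(q - i)
    for q_row, i_row in zip(quantized, ideal)
    for q, i in zip(q_row, i_row)
)
print(max_rounding_error)  # never exceeds 0.5 gray levels
```

Because every pixel is rounded to the nearest integer, the difference between the stored and the ideal intensity is bounded by half a gray level, which is the detection-noise bound quoted in the text.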

Figure 1. Prototype laser-pointing system. S is the laser source; L is the thin lens;

The neural network used in this study is implemented in Python[

where


Figure 2. Prediction errors

In order to enlarge the image difference, we designed the system with a tilted CCD rotated by 60° around either the y or x axis.
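The geometric effect of the tilt can be estimated with a short calculation, under the simplifying assumption (not stated in the text) that the beam radius w is roughly constant over the illuminated part of the detector. For a detector rotated by θ about an axis through the beam center, a point at in-plane distance s from that axis lies at a transverse offset s cos θ and an axial offset s sin θ, so that

$$
w_{\mathrm{det}} \approx \frac{w}{\cos\theta}, \qquad \Delta z(s) = s\,\sin\theta .
$$

Here w_det (the footprint radius along the tilt direction) and Δz(s) (the local axial offset) are symbols introduced for this estimate. At θ = 60° the footprint is stretched by a factor of about 2 along the tilt direction, and different parts of the spot sample different defocus distances.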

For non-Gaussian beams, the maximum difference is expected to appear at different positions but with a similar magnitude. For flat-topped beams common in high-energy systems, the maximum difference would occur around the edges of the pattern with a similar magnitude; it is expected that this can be recognized accurately by a neural network, as discussed below.

3 Results and discussion of prediction in two directions

For practical prediction of beam tilts in two directions, we consider the prototype system under different combinations of the parameters θ, f and δz. The tilting angle θ is chosen from 0°, 15°, 30°, 45° and 60°. The y = −x axis is chosen as the rotation axis of the CCD, so that the spot is stretched diagonally and fits in a smaller square pixel region. The focal length f is taken as 40, 60, 80, 100 or 120 mm. The spot images for positive and negative defocus distances are symmetrical about the focal plane, so only the positive defocus distances δz/ZR2 = 0, 0.5, 1, 1.5 or 2 are considered. The defocus distance is specified through the ratio δz/ZR2 rather than as an absolute length, so that the spot radius changes with the focal length f. Finally, the 125 generated datasets (5 × 5 × 5 parameter combinations) are fed into the neural network to obtain the prediction performances of the beam tilts.
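The parameter sweep described above can be enumerated with a short sketch. The parameter values come from the text; the Gaussian-beam formulas assume a collimated input beam with its waist at the lens and a Rayleigh range much longer than f, and they assume that ZR2 denotes the Rayleigh range of the focused beam. The function names and dictionary keys are hypothetical.

```python
import itertools
import math

WAVELENGTH_MM = 632.8e-6   # 632.8 nm (from the text)
W01_MM = 2.0               # input beam waist radius (from the text)

TILT_DEG = [0, 15, 30, 45, 60]        # CCD tilting angle theta
FOCAL_MM = [40, 60, 80, 100, 120]     # focal length f
DEFOCUS_RATIO = [0, 0.5, 1, 1.5, 2]   # delta_z / Z_R2


def focused_waist_mm(f_mm):
    """Focused waist radius; assumes a collimated input with Z_R1 >> f."""
    return WAVELENGTH_MM * f_mm / (math.pi * W01_MM)


def rayleigh_range_mm(w_mm):
    """Rayleigh range of a Gaussian beam with waist radius w_mm."""
    return math.pi * w_mm ** 2 / WAVELENGTH_MM


def build_grid():
    """List every (theta, f, delta_z / Z_R2) combination with derived values."""
    grid = []
    for theta, f_mm, ratio in itertools.product(TILT_DEG, FOCAL_MM, DEFOCUS_RATIO):
        w02 = focused_waist_mm(f_mm)
        z_r2 = rayleigh_range_mm(w02)
        grid.append({
            "theta_deg": theta,
            "f_mm": f_mm,
            "defocus_ratio": ratio,
            "defocus_mm": ratio * z_r2,
            # On-axis beam radius at the defocused plane (untilted detector).
            "spot_radius_mm": w02 * math.sqrt(1.0 + ratio ** 2),
        })
    return grid


grid = build_grid()
print(len(grid))  # 125
```

Under these assumptions the spot radius at a fixed ratio δz/ZR2 scales linearly with f, which is why holding the ratio constant lets the focal length control the spot size on the detector.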


Figure 3. Prediction performances

To clearly elucidate the effect of the defocus distance, we focus on the prediction performances with the tilting angles θ = 45° and 60° as shown in

Similarly, we can derive the influence of the focal length from

From the analysis above, some useful rules can be drawn. The position and angular errors cannot be decoupled from spot images recorded on a vertical (untilted) CCD. A tilted CCD helps to solve this problem, and better prediction performance is obtained at a larger tilting angle of the CCD. A smaller focal length and a smaller defocus distance offer greater potential for prediction, but they may also make the spot too small, which degrades performance. Factors that increase the spot size may therefore improve the performance. For the pixel size and error ranges in the prototype system, the optimal parameter combination is a focal length f of 60 mm, a defocus distance δz of 0 and a tilting angle θ of 60°.

We briefly discuss the potential errors in this method below. Due to its data-based character[

4 Conclusions

In this paper, we present a neural network method for decoupling the position and angular errors of a laser beam in laser-pointing systems. With a novel setup that combines a lens of appropriately small focal length with a tilted detector at the focal plane, the position and angular errors can be predicted from the intensity distribution of a single spot image. Compared with the common centroid method, this method has a more compact structure and great potential for high-precision measurement through both optical design and data analysis. It may be useful when both the position and angular errors are needed, or when real-time operation and low system complexity are strictly required, as in precise optical systems or multi-beam monitoring.

References

[1] J. Yin, J. Ren, H. Lu. Nature, 488, 185(2012).

[2] K. Wilhelmsen, A. Awwal, G. Brunton. Fusion Eng. Des., 87, 1989(2012).

[3] G. Genoud, F. Wojda, M. Burza, A. Persson, C.-G. Wahlström. Rev. Sci. Instrum., 82, 033102(2011).

[4] B. Shirinzadeh, P. L. Teoh, Y. Tian, M. M. Dalvand, Y. Zhong, H. C. Liaw. Robot. Cim-Int. Manuf., 26, 74(2010).

[5] M. J. Beerer, H. Yoon, B. N. Agrawal. Control Eng. Pract., 21, 122(2013).

[6] A. S. Koujelev, A. E. Dudelzak. Opt. Eng., 47, 085003(2008).

[7] E. H. Anderson, R. L. Blankinship, L. P. Fowler, R. M. Glaese, P. C. Janzen. Proc. SPIE, 6569, 65690Q(2007).

[8] I. Moon, S. Lee, M. K. Cho. Proc. SPIE, 5877, 58770I(2005).

[9] S. T. Dawkins, A. N. Luiten. Appl. Opt., 47, 1239(2008).

[10] W. Zhao, J. Tan, L. Qiu, L. Zou, J. Cui, Z. Shi. Rev. Sci. Instrum., 76, 036101(2005).

[11] J. Pan, J. Viatella, P. P. Das, Y. Yamasaki. Proc. SPIE, 5377, 1894(2004).

[12] L. Lublin, D. Warkentin, P. P. Das, A. I. Ershov, J. Vipperman, R. L. Spangler, B. Klene. Proc. SPIE, 5040(2003).

[13] Q. Zhou, P. Ben-Tzvi, D. Fan, A. A. Goldenberg.

[14] P. H. Merritt, J. R. Albertine. Opt. Eng., 52, 021005(2012).

[15] K. Hornik. Neural Networks, 2, 359(1989).

[16] Y. LeCun, Y. Bengio, G. Hinton. Nature, 521, 436(2015).

[17] D. G. Sandler, T. K. Barrett, D. A. Palmer, R. Q. Fugate, W. J. Wild. Nature, 351, 300(1991).

[18] J. R. P. Angel, P. Wizinowich, M. Lloyd-Hart, D. Sandler. Nature, 348, 221(1990).

[19] P. L. Wizinowich, M. Lloyd-Hart, B. McLeod. Proc. SPIE, 1542, 148(1991).

[20] F. Breitling, R. S. Weigel, M. C. Downer, T. Tajima. Rev. Sci. Instrum., 72, 1339(2001).

[21] H. Guo, N. Korablinova, Q. Ren, J. Bille. Opt. Express, 14, 6456(2006).

[22] N. A. Abbasi, T. Landolsi, R. Dhaouadi. Mechatronics, 25, 44(2015).

[23] H. Yu, G. Rossi, A. Braglia, G. Perrone. Appl. Opt., 55, 6530(2016).

[24] L. Xia, Y. Gao, X. Han. Opt. Commun., 387, 281(2017).

[25] M. A. Nielsen.

[26] D. E. Rumelhart, G. E. Hinton, R. J. Williams. Nature, 323, 533(1986).
