- Chinese Optics Letters

- Vol. 16, Issue 7, 071101 (2018)

Abstract

Space-based surveillance has attracted increasing attention. Detecting targets requires a wide field of view (FOV) that covers a sufficiently large surveillance area, whereas tracking targets requires relatively high-resolution imaging to observe them in greater detail. A wide-field surveillance system based on a conventional optical system usually relies on multiple cooperating cameras for multi-target detection and tracking: the wide-field camera scans to detect the targets, and the high-resolution camera obtains high-resolution images of them by movement, zoom control, or other methods.

This Letter presents the design of a detection and tracking system based on the concept of a segmented planar imaging detector for electro-optical reconnaissance (SPIDER), which uses photonic integrated circuit (PIC) technology to significantly reduce the volume and weight of the imaging system.

The new detection and tracking system based on the SPIDER concept relies on the Van Cittert–Zernike theorem and interferometric imaging techniques.

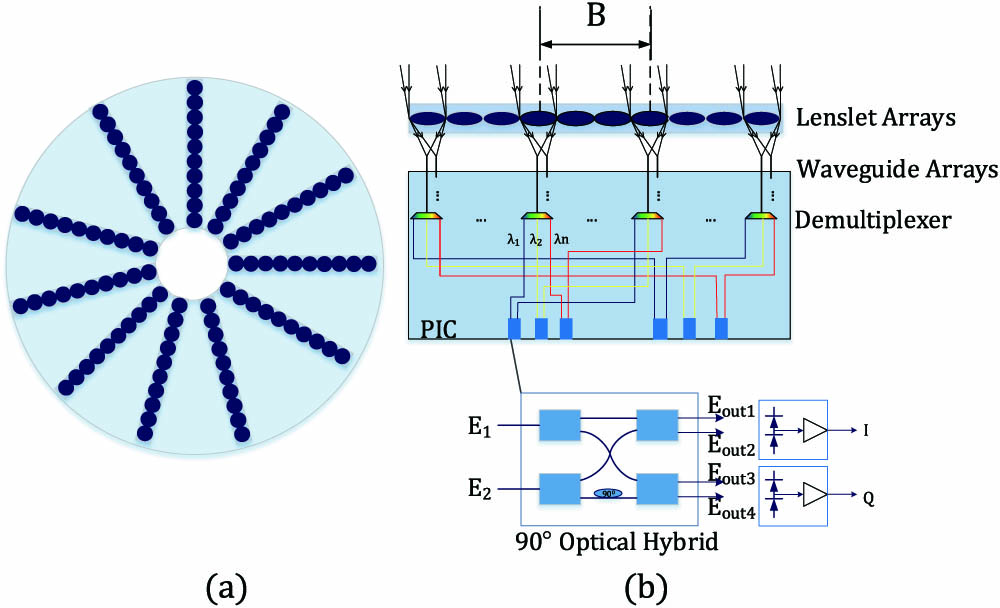

Figure 1. Schematic of the detection and tracking system. (a) Top view of the system. (b) Working principle of the system.

The output signals detected by photodetectors[

According to the Van Cittert–Zernike theorem, the mutual coherent intensity

The spatial frequency of the image is given by

From Eq. (

From Eq. (

When the wavelength is given, the imaging resolution can be determined by the longest baseline, which is the longest distance of the paired lenslets.
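As a rough numerical check of this relationship, the sketch below assumes the common diffraction estimate that the angular resolution is about the wavelength divided by the longest baseline; the exact expression of the referenced equation is not reproduced here. The function name, the 700 nm mid-band wavelength, and the reading of the 0.014 m and 0.072 m entries of Table 2 as longest baselines are assumptions made for illustration.

```python
import math

ARCSEC_PER_RAD = 180.0 * 3600.0 / math.pi

def angular_resolution_arcsec(wavelength_m, longest_baseline_m):
    """Assumed estimate: resolution ~ wavelength / longest baseline (in radians)."""
    return wavelength_m / longest_baseline_m * ARCSEC_PER_RAD

mid_band = 700e-9  # assumed mid-point of the 500-900 nm band in Table 1
detection_res = angular_resolution_arcsec(mid_band, 0.014)  # 0.014 m read as a baseline
tracking_res = angular_resolution_arcsec(mid_band, 0.072)   # 0.072 m read as a baseline
print(f"detection: {detection_res:.1f} arcsec, tracking: {tracking_res:.1f} arcsec")
```

Under these assumptions the two values come out close to the 10 arcsec and 2 arcsec requirements of Table 1, which suggests the estimate is at least consistent with the design.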

The light from one lenslet is coupled into a waveguide, where the coupling efficiency can be given by[

From Eq. (

If the FOV needs to be expanded,

When the diameter and working wavelength of the lenslet are given, the available system FOV can be determined by the waveguide array size.
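The dependence of the FOV on the waveguide array size can be sketched with a simple model. The code assumes that a single waveguide behind a lenslet accepts a field of roughly wavelength divided by lenslet diameter, and that an N x N waveguide array widens the field N times per side. Both the model and the function names are illustrative assumptions, not the expression of the referenced equation.

```python
import math

def single_waveguide_fov_deg(wavelength_m, lenslet_diameter_m):
    """Assumed model: one waveguide accepts a field of about wavelength / diameter."""
    return math.degrees(wavelength_m / lenslet_diameter_m)

def waveguides_per_side(required_fov_deg, wavelength_m, lenslet_diameter_m):
    """Smallest N for which an N x N array covers the required FOV (assumed linear scaling)."""
    per_waveguide = single_waveguide_fov_deg(wavelength_m, lenslet_diameter_m)
    return math.ceil(required_fov_deg / per_waveguide)

wavelength = 700e-9   # assumed mid-band wavelength
diameter = 0.802e-3   # lenslet diameter listed in Table 2
for fov_deg in (10.0, 0.3, 0.2):  # FOV requirements listed in Table 1
    n = waveguides_per_side(fov_deg, wavelength, diameter)
    print(f"FOV {fov_deg} deg -> {n} x {n} waveguides")
```

With the 0.802 mm lenslet, this model gives a single-waveguide field of about 0.05°, so a wider field is obtained only by switching in more waveguides behind each lenslet.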

According to the previous analysis, the imaging resolution can be determined by the longest baseline and working wavelength, and the available FOV can be determined by the lenslet diameter, waveguide array size, and working wavelength. Different combinations of the working lenslet arrays and working waveguides correspond to different imaging resolutions and FOVs. Thus, we can obtain two operating modes: a detection mode with a wide field and low resolution and a tracking mode with a narrow field and high resolution.

Detection mode with wide field and low resolution: the side view of the lenslets and waveguide arrays of a single interferometer for the detection mode is illustrated in Fig.

Figure 2. Schematic of system operating modes. (a) Detection mode with wide field and low resolution. (b) Tracking mode with narrow field and high resolution.

Tracking mode with narrow field and high resolution: the side view of the lenslets and waveguide arrays of a single interferometer for the tracking mode is illustrated in Fig.

To detect and track targets, the system first switches to the detection mode and obtains a wide-field image with relatively low resolution. When focus targets are found in the detection-mode results, the system evaluates their size and position and changes the working electronic switches accordingly. The system then switches to the tracking mode with the selected working waveguides, obtains high-resolution images of the focus targets, and predicts their paths in order to track them. When the focus targets leave the narrow field of the tracking mode, the system switches back to the detection mode to search for new focus targets. The detection and tracking system working flowchart is outlined in Fig.

Figure 3. Flowchart of the detection and tracking system.
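The mode-switching logic described above can be sketched as a small state machine. This is a minimal illustration of the control flow only: the `Mode` enum, the `step` function, and the representation of targets as plain list items are hypothetical names introduced here, and the sketch says nothing about how targets are found or how the electronic switches are driven.

```python
from enum import Enum

class Mode(Enum):
    DETECTION = "detection"  # wide field, low resolution
    TRACKING = "tracking"    # narrow field, high resolution

def step(mode, wide_field_targets, tracked_targets):
    """One pass of the assumed control loop.

    wide_field_targets: focus targets found in the detection-mode image.
    tracked_targets: focus targets still visible in the narrow tracking fields.
    Returns the next mode and the targets that working waveguides are selected for.
    """
    if mode is Mode.DETECTION:
        if wide_field_targets:
            return Mode.TRACKING, list(wide_field_targets)
        return Mode.DETECTION, []
    # Tracking mode: keep tracking while any focus target remains in view.
    if tracked_targets:
        return Mode.TRACKING, list(tracked_targets)
    return Mode.DETECTION, []

mode, selected = step(Mode.DETECTION, ["airplane-1", "airplane-2"], [])
print(mode.value, selected)
```

Keeping the transition rule in one pure function makes it easy to check each branch of the flowchart separately from the imaging code.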

Figure 4. Schematic of the working waveguides for tracking focus targets.

To verify the feasibility of the system, an example simulated in MATLAB is presented below. The simulation process is as follows: (1) take a pristine target scene as the input intensity distribution; (2) compute the light-field distribution at the lenslet plane; (3) compute the interference intensity of the two beams transmitted by the waveguides at the outputs of the 90° optical hybrids; (4) obtain the outputs
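The idea behind such a simulation can be illustrated with a one-dimensional toy model in Python. It is not the MATLAB simulation described above: it assumes, following the Van Cittert–Zernike theorem, that each baseline measures one Fourier component of the scene intensity, and it skips the lenslet field, the 90° optical hybrids, and the photodetection steps. All function names are hypothetical.

```python
import cmath

def dft(samples):
    """Discrete Fourier transform of a 1D sequence (direct O(n^2) sum)."""
    n = len(samples)
    return [sum(s * cmath.exp(-2j * cmath.pi * f * k / n) for k, s in enumerate(samples))
            for f in range(n)]

def inverse_dft_real(spectrum):
    """Real part of the inverse DFT."""
    n = len(spectrum)
    return [(sum(c * cmath.exp(2j * cmath.pi * f * k / n)
                 for f, c in enumerate(spectrum)) / n).real
            for k in range(n)]

def band_limited_image(scene, max_frequency):
    """Keep only the spatial frequencies up to max_frequency, then invert.

    A longer longest baseline corresponds to a larger max_frequency.
    """
    n = len(scene)
    spectrum = dft(scene)
    kept = [c if min(f, n - f) <= max_frequency else 0.0
            for f, c in enumerate(spectrum)]
    return inverse_dft_real(kept)

scene = [0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0]  # single point target
low_res = band_limited_image(scene, 1)   # short baselines only
high_res = band_limited_image(scene, 4)  # all baselines
print([round(v, 3) for v in low_res])
print([round(v, 3) for v in high_res])
```

With all frequencies kept the point target is recovered, while the short-baseline version spreads it over neighboring samples, which mirrors the difference between the tracking and detection modes.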

The required performance parameters of the system are listed in Table

| Performance Parameters | Value |
|---|---|
| Object distance | 250 km |
| Wavelength | 500–900 nm |
| Number of spectral bins | 10 |
| Wide-field resolution | 10 arcsec |
| Focus targets resolution | 2 arcsec |
| FOV of wide field | 10° |
| FOV of focus targets | 0.2° and 0.3° |

Table 1. Target Detection and Tracking System Imaging Performance

According to the solution to Eq. (

Considering the processing difficulty of the lenslets and the aim to collect more light from the targets, the waveguide arrays are chosen to enlarge the FOV. According to Eq. (

According to the initial structure of SPIDER, the system uses 37 interferometric arms, and the maximum number of baselines is chosen in order to sample more spatial frequencies. The lenslets are arranged next to each other within each interferometric arm.

The design structural parameters of the system are listed in Table

| Structure Parameters | Value |
|---|---|
| Longest baseline, detection mode | 0.014 m |
| Longest baseline, tracking mode | 0.072 m |
| Waveguide arrays for detection mode | |
| Waveguide arrays for tracking mode | |
| Lenslet diameter | 0.802 mm |
| Interferometer arms | 37 |
| Baselines in single interferometer arm | 45 |

Table 2. Target Detection and Tracking System Structure
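The structural numbers in Table 2 can be cross-checked with a few lines of arithmetic. The sketch assumes that adjacent lenslets fill an arm whose length is about the 0.072 m entry (read here as the longest baseline) and that each lenslet takes part in exactly one pair. Both are illustrative assumptions, and the function names are hypothetical.

```python
def lenslets_per_arm(arm_length_m, lenslet_diameter_m):
    """Number of adjacent lenslets that fit along one arm (rounded)."""
    return round(arm_length_m / lenslet_diameter_m)

def total_baselines(arms, baselines_per_arm):
    """Total number of baselines over all interferometric arms."""
    return arms * baselines_per_arm

n_lenslets = lenslets_per_arm(0.072, 0.802e-3)  # 0.072 m read as the arm length
pairs_per_arm = n_lenslets // 2                 # assumption: one pair per lenslet
print(n_lenslets, pairs_per_arm, total_baselines(37, pairs_per_arm))
```

Under these assumptions the pair count per arm agrees with the 45 baselines listed in the table.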

Because of the difficulty in simulating such a large image corresponding to an FOV of 10°, we choose to simulate a relatively small image corresponding to an FOV of 1°, which does not affect the system principle verification. The waveguide array is

Figure 5. Simulation results for target detection and tracking system. (a) Pristine target scene used for the simulation; (b) detection mode simulation result; (c)–(e) tracking mode simulation results.

The peak signal-to-noise ratio (PSNR) is an objective metric for evaluating imaging quality.
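For reference, the standard PSNR definition, 10 log10(peak² / MSE), can be written as a short function. This is a minimal sketch: it assumes grayscale images stored as lists of rows and an 8-bit peak value of 255, neither of which is stated in the text, and the function name is hypothetical.

```python
import math

def psnr(reference, test, peak=255.0):
    """PSNR in dB between two equally sized grayscale images (lists of rows)."""
    squared_error = 0.0
    count = 0
    for ref_row, test_row in zip(reference, test):
        for r, t in zip(ref_row, test_row):
            squared_error += (r - t) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / mse)

original = [[0, 0], [0, 0]]
degraded = [[0, 0], [0, 10]]
print(f"{psnr(original, degraded):.2f} dB")
```

A higher PSNR means the reconstructed image is closer to the original, so values can be compared across the two operating modes.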

| Comparison Figures | PSNR |
|---|---|
| Fig. | 29.2622 |
| Fig. | 38.9698 |
| Fig. | 39.1527 |
| Fig. | 36.9610 |

Table 3. PSNRs of Imaging Result with Original Image

We can verify the image quality of the two operation modes from the results of Table

In summary, the simulation results indicate that the detection and tracking system can detect targets over a wide area and track multiple targets simultaneously without any moving parts.

Compared with operating all of the baselines and waveguide arrays to image multiple targets at high resolution over a wide field, the target detection and tracking system reduces power consumption by switching between its two operating modes through integrated system control. The simulation results indicate that the detection mode consumes 20% of the power required when all baselines and waveguide arrays are working, and that the tracking mode, with three airplanes tracked synchronously in the FOV, consumes 0.17%. In addition, the system reduces the amount of data to be processed, which improves the processing speed and helps achieve real-time target detection and tracking.
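One way to see how power fractions of this size can arise is to assume that power scales with the number of active waveguides (tracking mode) or active baselines (detection mode). The sketch is purely illustrative: the 200 x 200 full array, the 4 x 4 and 6 x 6 tracking windows, the split of the three targets into two 0.2° fields and one 0.3° field, and the evenly spaced baselines are all assumptions, not values stated in the text.

```python
def tracking_power_fraction(window_sides, full_array_side):
    """Fraction of waveguides active when each target uses an n x n window."""
    active = sum(n * n for n in window_sides)
    return active / full_array_side ** 2

def detection_power_fraction(detection_baseline_m, longest_baseline_m):
    """Fraction of baselines active, assuming evenly spaced baseline lengths."""
    return detection_baseline_m / longest_baseline_m

# Assumed: two targets with 4 x 4 windows and one with a 6 x 6 window,
# out of an assumed 200 x 200 waveguide array behind each lenslet.
tracking = tracking_power_fraction([4, 4, 6], 200)
# Assumed: 0.014 m and 0.072 m are the longest baselines of the two modes.
detection = detection_power_fraction(0.014, 0.072)
print(f"tracking: {tracking:.2%}, detection: {detection:.0%}")
```

Under these assumptions the tracking fraction is about 0.17% and the detection fraction is a little under 20%, in line with the reported figures, although the text does not state how those figures were obtained.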

In this Letter, we present a target detection and tracking system using SPIDER. The system principle is introduced, including two operating modes: a detection mode and a tracking mode. The system searches for targets over a wide field in the detection mode, and, once the focus targets are found, it switches to the tracking mode and images them with a high resolution. We provide a simulation example of the system and use the PSNR quality metric to analyze the simulation results. The results indicate that this system can achieve wide-field target detection and simultaneous multi-target tracking with a high imaging resolution via integrated system control, without any moving parts.

In conclusion, this system provides a design reference for new space-based imaging systems with simultaneous multi-target detection and tracking in a wide field. Research reports show that SPIDER technology is still in its infancy; developing a SPIDER imaging system involves micro–nano manufacturing technology, PICs, image inversion from undersampled spatial frequency data, and other technologies. As SPIDER matures, the design of the detection and tracking system is expected to find wide application.

References

[2] G. Li, L. Li, H. Shen, Y. He, J. Huang, S. Mao, Y. Wang. Appl. Opt., 52, 7919(2013).

[3] T. Manzur, J. Zeller, S. Serati. Appl. Opt., 51, 4976(2012).

[4] I. Cohen, G. Medioni. Proceedings of the CVPR '98 Workshop on Interpretation of Visual Motion(1998).

[5] Y. Gao, X. L. Zhang. Proceedings of the 2010 5th IEEE International Conference on Intelligent Systems, 402(2010).

[6] V. Reilly, H. Idrees, M. Shah. Proceedings of the ECCV 2010, 186(2010).

[7] R. Bodor, R. Morlok, N. Papanikolopoulos. Proceedings of the 2004 IEEE/RSJ International Conference on Intelligent Robots and Systems, 643(2004).

[8] R. Kendrick, A. Duncan, J. Wilm, S. T. Thurman, D. M. Stubbs, C. Ogden. Proceedings of the Advanced Maui Optical and Space Surveillance Technologies Conference(2013).

[9] R. Kendrick, S. T. Thurman, A. Duncan, J. Wilm, C. Ogden. Imaging and Applied Optics, OSA Technical Digest, CM4C.1(2013).

[10] A. Duncan, R. Kendrick, S. Thurman, D. Wuchenich, R. P. Scott, S. J. B. Yoo, T. Su, R. Yu, C. Ogden, R. Proietti. Proceedings of the Advanced Maui Optical and Space Surveillance Technologies Conference(2015).

[12] M. Li, C. L. Zou, G. C. Guo, X. F. Ren. Chin. Opt. Lett., 15, 092701(2017).

[13] A. Duncan, C. Ogden, D. Wuchenich, R. L. Kendrick, S. T. Thurman. Frontiers in Optics 2015, OSA Technical Digest, FM3E.3(2015).

[14] M. Francon, S. S. Ballard. Encyclopedia of Physical Science and Technology, 371(2003).

[15] J. W. Goodman, L. M. Narducci. Phys. Today, 39, 126(1986).

[17] C. S. Li, X. Y. Qiu, X. Li. Photon. Res., 5, 97(2017).

[18] T. Hong, W. Yang, H. Yi, X. Wang, Y. Li, Z. Wang, Z. Zhou. Proc. SPIE, 8255, 82551Z(2012).

[19] P. Zhao, Y. B. Yuan, Y. Zhang, W. P. Qian. Chin. Opt. Lett., 14, 042801(2016).

[20] A. L. Duncan, R. L. Kendrick. Segmented planar imaging detector for electro-optic reconnaissance. U.S. patent(2014).

[21] O. Guyon. Astron. Astrophys., 387, 366(2002).

[22] K. Badham, R. L. Kendrick, D. Wuchenich, C. Ogden, G. Chriqui, A. Duncan, S. T. Thurman, S. J. B. Yoo, T. Su, W. Lai. Conference on Lasers and Electro-Optics Pacific Rim, 1(2017).

[23] A. Hore, D. Ziou. Proceedings of 2010 20th International Conference on Pattern Recognition, 2366(2010).
