Journals >Laser & Optoelectronics Progress

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120901 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121001 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121002 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121004 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121005 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121007 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121008 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121009 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121010 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121011 (2020)

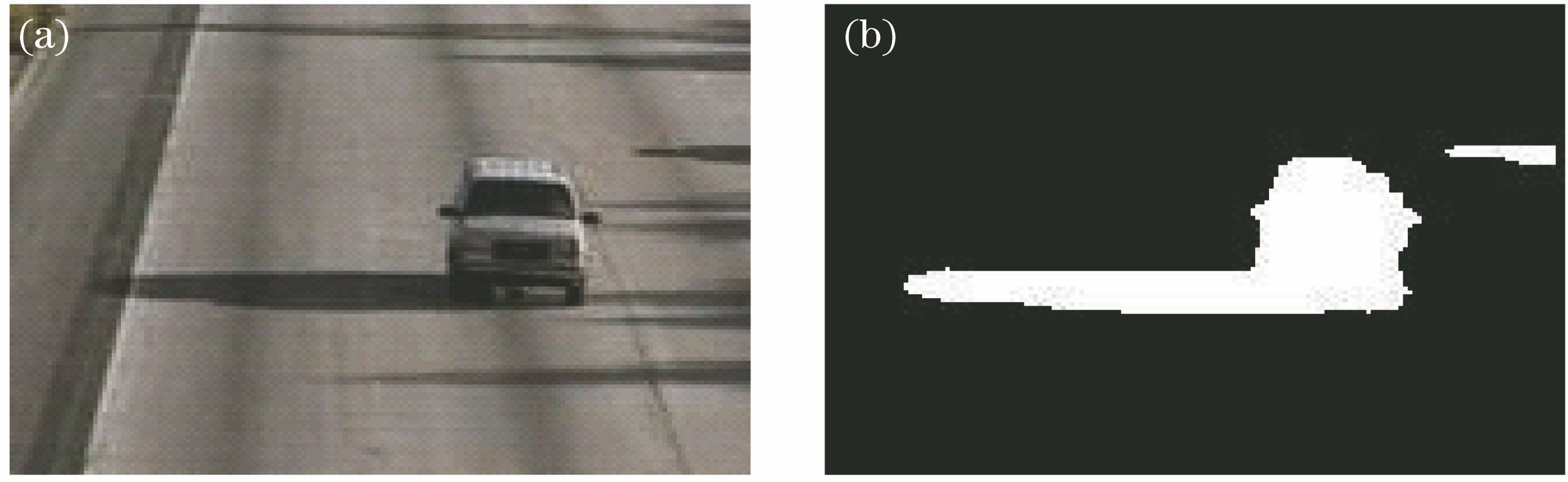

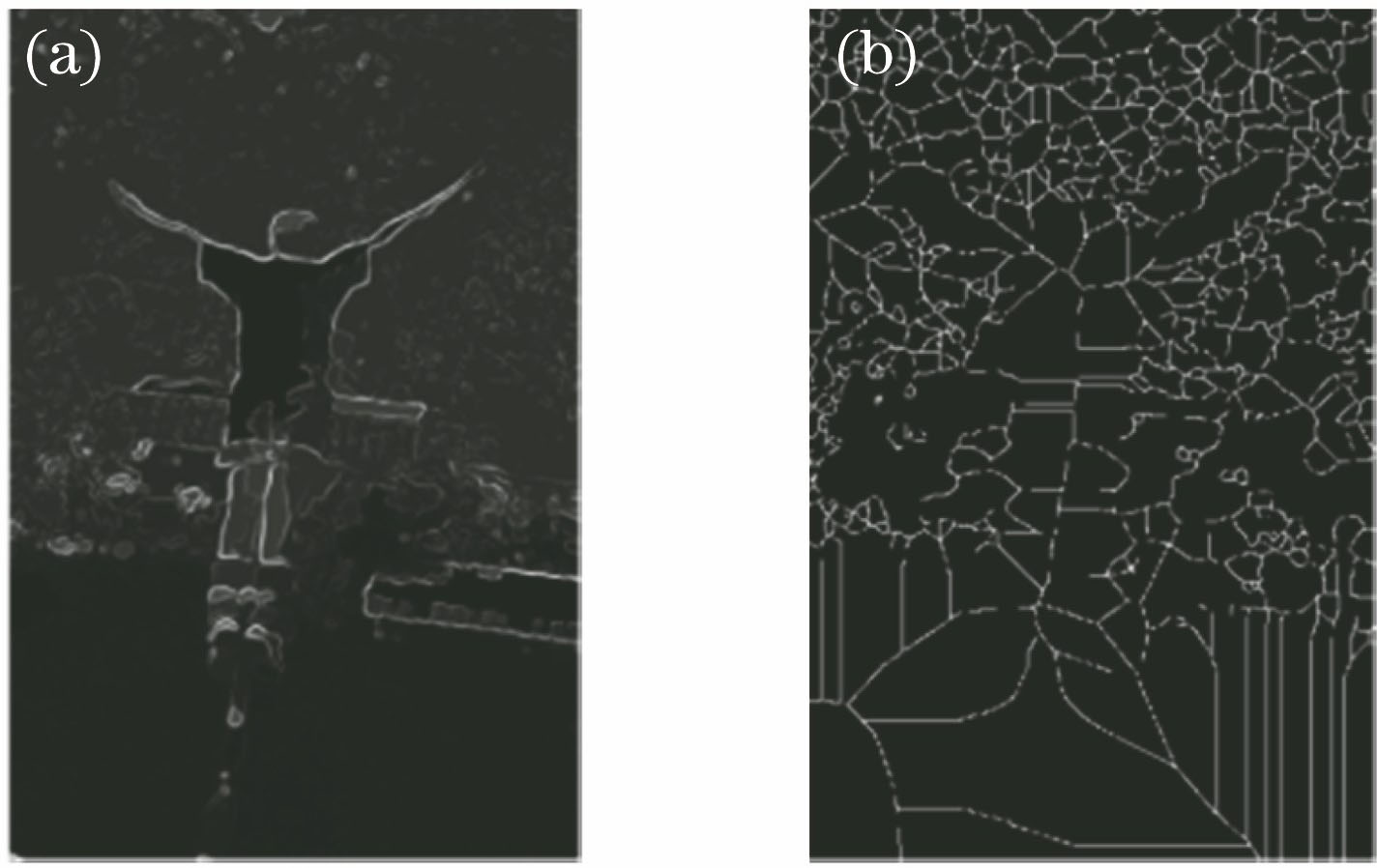

To address the limited expressive power of a single traditional handcrafted feature and the model degradation caused by error accumulation during model updating in complex scenes, an object tracking algorithm based on correlation filtering and convolutional residual learning is proposed. The multi-feature correlation filtering algorithm is defined as a layer in the neural network, so that feature extraction, response map generation, and model updating are integrated into an end-to-end neural network for training. To reduce model degradation during online updating, residual learning is introduced to guide the model update. The proposed method is validated on the benchmark datasets OTB-2013 and OTB-2015. The experimental results show that the proposed algorithm can effectively handle motion blur, deformation, and illumination variation in complex scenes, and achieves high tracking accuracy and robustness.

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121012 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121013 (2020)

To address the low registration rate of image feature point extraction and matching algorithms, an image registration algorithm that optimizes grid-based motion statistics is proposed. The algorithm first uses the oriented FAST and rotated BRIEF (ORB) algorithm to extract feature points, and then performs coarse feature point matching with a brute-force matching algorithm. According to the size of the image and the number of feature points, the image is divided into multiple grids, and motion statistics are computed over the feature points in each grid. A score statistics template is created with a Gaussian function of the distance between the feature points in the nine-square (3 × 3) grid and the central feature point. The score of the nine-square grid is compared with a set threshold: if the threshold is exceeded, the match is considered correct; otherwise it is filtered out. Experimental results show that the proposed algorithm obtains 18.17% more correct matches than the grid-based motion statistics algorithm, and is about 41.3% faster than the traditional feature point extraction and matching algorithm. The method can effectively eliminate false matches and improve the matching rate.
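The grid-scoring step described in this abstract can be illustrated with a small sketch. It is not the authors' implementation: the function names `cell_of` and `filter_matches`, the parameters `cell_size`, `sigma`, and `threshold`, and the exact form of the Gaussian weight are all assumptions chosen for illustration. Each candidate match is scored by the other matches that fall in the surrounding 3 × 3 block of cells and move consistently with it, with each supporter weighted by a Gaussian of its distance to the central feature point.

```python
import math
from collections import defaultdict


def cell_of(pt, cell_size):
    """Return the integer grid cell (column, row) containing point pt."""
    return (int(pt[0] // cell_size), int(pt[1] // cell_size))


def filter_matches(matches, cell_size=40.0, sigma=40.0, threshold=1.5):
    """Keep matches whose Gaussian-weighted neighbourhood support exceeds threshold.

    matches: list of ((x1, y1), (x2, y2)) candidate matches between two images.
    All parameter values are illustrative assumptions, not values from the paper.
    """
    # Bucket matches by the pair (cell in image 1, cell in image 2).
    buckets = defaultdict(list)
    for i, (p, q) in enumerate(matches):
        buckets[(cell_of(p, cell_size), cell_of(q, cell_size))].append(i)

    kept = []
    for i, (p, q) in enumerate(matches):
        (cx, cy), (dx, dy) = cell_of(p, cell_size), cell_of(q, cell_size)
        score = 0.0
        # Visit the 3 x 3 block around the match in both images.
        for ox in (-1, 0, 1):
            for oy in (-1, 0, 1):
                key = ((cx + ox, cy + oy), (dx + ox, dy + oy))
                for j in buckets.get(key, ()):
                    if j == i:
                        continue
                    pj = matches[j][0]
                    d2 = (pj[0] - p[0]) ** 2 + (pj[1] - p[1]) ** 2
                    # Closer supporting matches contribute more to the score.
                    score += math.exp(-d2 / (2.0 * sigma ** 2))
        if score > threshold:
            kept.append(matches[i])
    return kept


# Six matches sharing one translation, plus one inconsistent match.
consistent = [((100.0 + k, 100.0 + k), (150.0 + k, 120.0 + k)) for k in range(6)]
outlier = ((105.0, 105.0), (400.0, 10.0))
kept = filter_matches(consistent + [outlier])
```

In this toy input the six consistent matches support one another, while the outlier has no supporter in the corresponding cells and is dropped. In practice the candidate matches would come from a feature detector and a brute-force matcher rather than being written by hand.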

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121014 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121015 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121016 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121017 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121018 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121019 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121020 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121101 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121102 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121103 (2020)

To address the low accuracy of segmentation algorithms for three-dimensional (3D) point cloud data, a new segmentation algorithm combining point cloud skeleton points and external feature points is proposed. The method can effectively segment local small-scale convex objects that traditional methods cannot segment, which makes 3D point cloud segmentation more complete and offers a new approach to the problem. The algorithm is implemented in C++ with the open-source Point Cloud Library (PCL). First, the L1-median algorithm is used to extract skeleton points from the 3D point cloud, and feature points are extracted with the scale-invariant feature transform algorithm. Then, a segmentation plane is constructed from the skeleton points and feature points, segmentation is performed, and the remaining feature points are detected. Finally, a segmentation plane is constructed again for a second segmentation to obtain the final result. Experimental results show that the algorithm can efficiently segment small-scale convex surfaces of 3D point clouds and improve segmentation accuracy.

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121104 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121120 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121501 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121502 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121503 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 121504 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 122801 (2020)

Because traditional 2D deep learning methods cannot directly classify 3D point clouds, this study proposes a novel classification method for airborne LiDAR point clouds based on 3D deep learning. First, airborne LiDAR point clouds and multispectral imagery are fused to add spectral information to the point clouds. Then, the 3D point clouds are placed on grids to make the LiDAR data suitable for 3D deep learning. Subsequently, local and global features at different scales are extracted by a multilayer perceptron. Finally, the airborne LiDAR point clouds are classified into semantic objects using the 3D deep learning algorithm. The datasets provided by the International Society for Photogrammetry and Remote Sensing (ISPRS) are used to validate the proposed method, and the experimental results show that fusing the LiDAR point clouds with multispectral images increases the classification accuracy by 13.39%. Compared with some of the methods submitted to ISPRS, the proposed method achieves better performance while simplifying the feature extraction process.
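The gridding step mentioned in this abstract can be sketched as follows. This is only an illustration under stated assumptions, not the authors' pipeline: the function name `voxelize`, the `voxel_size` parameter, and the choice to average all points in a cell are hypothetical, and each point is assumed to already carry its fused spectral values as extra columns after `x, y, z`.

```python
def voxelize(points, voxel_size=1.0):
    """Group points into cubic grid cells and average each cell's values.

    points: iterable of (x, y, z, *features), where the features could be
            spectral bands already attached to each LiDAR point (an assumption).
    Returns a dict mapping the integer cell index (i, j, k) to the mean
    [x, y, z, *features] vector of the points in that cell.
    """
    sums = {}
    counts = {}
    for x, y, z, *features in points:
        key = (int(x // voxel_size), int(y // voxel_size), int(z // voxel_size))
        vec = [x, y, z, *features]
        if key not in sums:
            sums[key] = [0.0] * len(vec)
            counts[key] = 0
        for k, value in enumerate(vec):
            sums[key][k] += value
        counts[key] += 1
    # Average the accumulated values per occupied cell.
    return {key: [s / counts[key] for s in acc] for key, acc in sums.items()}


# Three points with one spectral value each; the first two share a cell.
cloud = [
    (0.2, 0.2, 0.2, 10.0),
    (0.8, 0.4, 0.6, 20.0),
    (1.5, 0.1, 0.1, 30.0),
]
grid = voxelize(cloud, voxel_size=1.0)
```

Averaging is only one way to summarize a cell; keeping a fixed number of sampled points per cell is another common choice, and the abstract does not say which is used.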

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 122802 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 122803 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 122804 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120001 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120002 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120003 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120004 (2020)

- Publication Date: May. 30, 2020

- Vol. 57, Issue 12, 120005 (2020)