- Photonics Research

- Vol. 10, Issue 7, 1712 (2022)

Abstract

1. INTRODUCTION

Digital high-speed cameras have been used to monitor a plethora of transient events ranging from laser ablation and plasma formation [1,2] to cell dynamics [3,4] and the investigation of geomaterials [5]. The demand for affordable methods to investigate even faster events in high quality has been, and is, ever increasing, serving as the driving force behind the extensive development of single sensor digital cameras. This has resulted in an increase in frame rates over the last 30 years [6,7].

Various electronic sensor architectures such as charge-coupled devices (CCDs), complementary metal-oxide-semiconductor (CMOS) sensors, and

This apparent trade-off among speed, sensor size, and sequence length will unavoidably dictate the level of resolvable spatial and temporal characteristics of the phenomena under study. Currently available fast single sensors are able to produce short, low-resolution burst videos at low burst repetition rates and are thus useful for globally monitoring rapidly evolving events whose dynamics are localized in time and where the onset can be predicted or triggered [10–14]. In contrast, sensors with a reduced frame rate can provide long video sequences at high pixel resolution for monitoring structural changes occurring on a time scale [15–17], yet those sensors are limited to more slowly evolving events.


To overcome some of the rapid single-sensor-based limitations, alternative videography solutions have emerged. For example, multi-channel intensified cameras can acquire a burst of up to 16 images ( pixels) at 200 Mfps [18]. This type of technology, where the event is imaged onto multiple sensors in series, allows for high pixel resolution and imaging speed, but at the cost of low burst repetition rates (10 Hz for the multi-channel intensified camera Imacon 468, and 0.5 Hz for the Icarus 2, an X-ray nanosecond gated camera) [14,19,20]. Another way of overcoming electronic sensor-based trade-offs is with the use of image multiplexing concepts. These techniques can include the application of spatial masks on images, followed by algorithmic extraction [21,22] or via spectral filtering of different colors [23], enabling alternative means to produce fast burst videos in the Mfps regime. Such solutions have, however, been able to provide only low burst repetition rates due to the technological restrictions set by either the camera (e.g., streak camera) or the employed illumination (e.g., LEDs).

To date, there is no existing video technology that has demonstrated the ability to circumvent the aforementioned technological trade-offs to monitor small-scale structural details, whose evolution occurs at a nanosecond time scale while the entire event may unfold over multiple orders of magnitude longer in time. Such events, however, do occur within many fields of the natural sciences such as within transient spray diagnostics [24,25], combustion and plasma diagnostics [26–29], nanostructure dynamics [30], or velocimetry in general [31,32].

The ability to both track extremely fast small-scale dynamics and to continuously monitor the slower large-scale motion would require technology that provides (1) a high frame rate (within a single burst), (2) high burst repetition rate, and (3) imaging with high pixel and spatial resolution. In this paper, we combine multiplexed imaging with high-speed single sensor technology to meet all these requirements, allowing for the tracking of transient events occurring on the MHz time scale with standard, non-intensified kHz–MHz camera technology. The capabilities of the system are demonstrated, to the best of the authors’ knowledge, for the first time by extracting 2D accelerometry maps at kHz rates over a period of time spanning the entire event of interest ().

2. EXPERIMENTAL SETUP

To obtain high-speed accelerometry data of dynamic transient events at kHz time scales, we hybridize high-speed illumination-based multiplexed imaging with high-speed CMOS camera technology. The optical setup presented herein allows for three burst images with a temporal resolution of up to 52 ns (19 MHz) at a burst repetition rate of up to 1 Mfps (depending on the desired field of view).

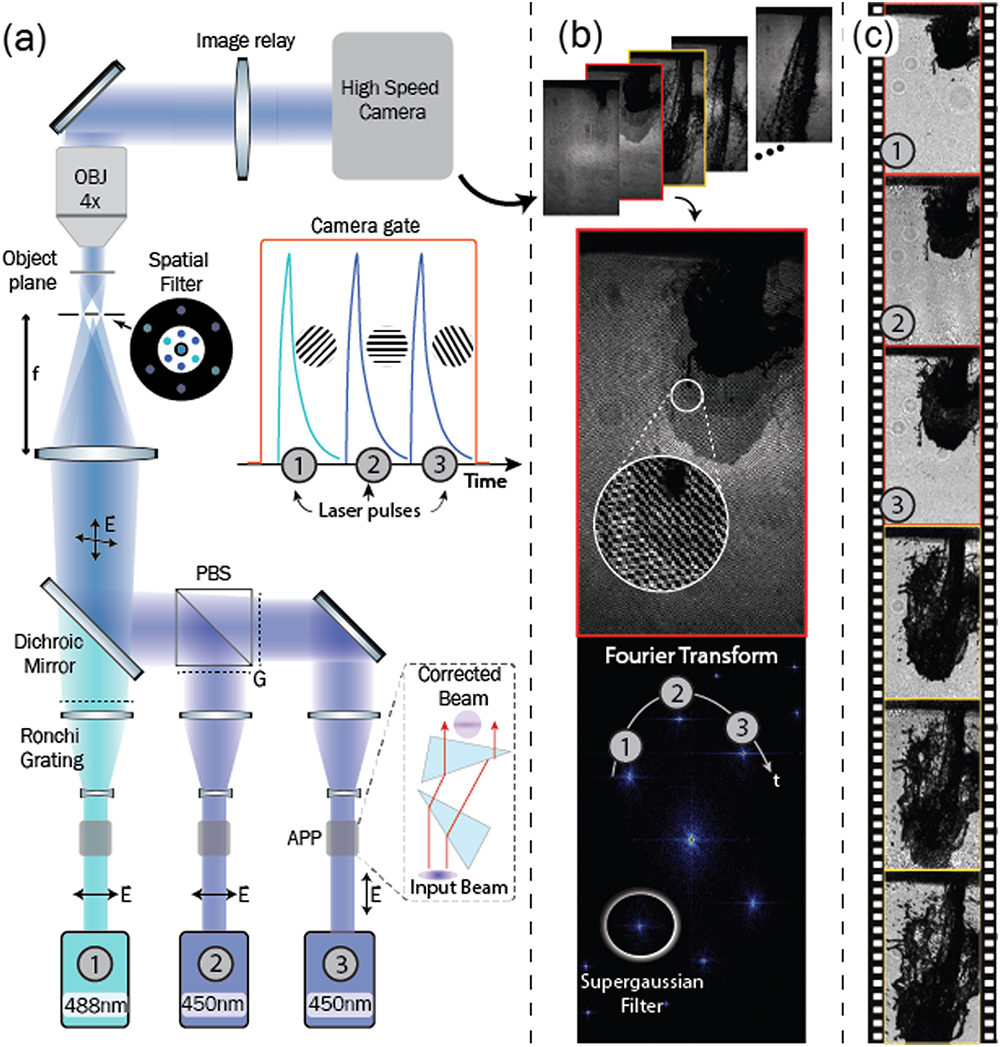

The system that attains these capabilities is divided into three units: (i) a high-speed passive imaging unit whose main component is a high-speed CMOS camera [Photron SA5, top of Fig. 1(a)], (ii) a high-speed illumination system, consisting of three individual high-repetition-rate pulsed nanosecond laser sources [Thorlabs NPL45- and 49-B, bottom of Fig. 1(a)], and (iii) an image multiplexing unit built of optical components that image unique spatial modulations onto the sample.

![Figure 1](/richHtml/prj/2022/10/7/1712/img_001.jpg)

Figure 1. System capable of monitoring microscopic MHz dynamics with kHz technology. (a) The light from each nanosecond pulsed laser source, triggered w.r.t. the high-speed camera, is spatially modulated with Ronchi gratings (20 lp/mm) and combined into a pulse train incident on the sample. The imaged event is then relayed to the high-speed camera via a microscopy configuration. Due to the laser pulses’ temporal separation [inset of (a)] the event of interest will be illuminated at three distinct times within a single camera exposure. (b) Each high-speed camera image consists of three superimposed spatially modulated images of which an example is framed in red. Its Fourier transform depicts distinct peaks, within which the individual pulse information is contained. Performing a spatial lock-in followed by low-pass filtering on the peaks extracts said information. (c) Carrying out this process (including background correction) on each high-speed camera image results in a video sequence where bursts of three images (FRAME triplets) are taken at a repetition rate set by the camera, allowing, e.g., the extraction of 2D accelerometry data from each camera image. The complete video of (c) is included as Visualization 4.

The two electronic units, (i) and (ii), are synchronized in time via a triggering system such that within a single camera exposure, each laser is triggered once at separated time intervals [inset of Fig. 1(a)]. This pulse train is optically relayed onto the sample via a polarizing beam splitter (PBS), dichroic mirror, and lens (). The now illuminated sample is then imaged onto the high-speed camera with a microscope objective ( Olympus plan achromat microscope objective) and a relay lens ().

All three illumination events within the rapid succession of pulses reach the camera within a single exposure and need to be separated digitally. To enable this separation and thereby identify the information retained within each pulse, a multiplexed imaging technique named frequency recognition algorithm for multiple exposures (FRAME) [22,33,34] is used. With FRAME, the intensity profile of each laser pulse is spatially modulated at a unique angle [inset of Fig. 1(a)], optically achieved by imaging Ronchi gratings (20 lp/mm) onto the sample plane (zeroth order and undesired harmonics are rejected using spatial filtering).

Upon video capture, the high-speed camera produces a series of multiplexed images where each includes three superimposed, spatially modulated, and temporally separated illumination events of the dynamic sample [Fig. 1(b)]. This can be visualized in the example image, framed in red, where the event’s progression is seen by the stepwise varying gray scales, overlaid with unique modulation combinations. As a result of these modulated structures, the Fourier transform of the image depicts three peaks separated from the DC component, each corresponding to the image produced from a single laser pulse. Algorithmic separation is then achieved by spatially locking in to each peak, low-pass filtering (with a 2D Gaussian to the power of eight), then inverse Fourier transforming, resulting in three images of the event’s progression [red framed images of Fig. 1(c)], dubbed herein as a FRAME triplet. Iterating this procedure on each image of the raw high-speed video results in an image sequence that contains MHz dynamic information (up to 19 MHz) at the repetition rate offered by the CMOS camera [video reel of Fig. 1(c)], which in the current case corresponds to a maximum of 1 Mfps.
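The separation steps described above (spatial lock-in, low-pass filtering, inverse Fourier transform) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `frame_demultiplex`, the parameters `carriers`, `sigma`, and `order`, and the reading of the "Gaussian to the power of eight" as a super-Gaussian in spatial frequency are all assumptions, and the carrier frequencies are assumed to be known in advance.

```python
import numpy as np

def frame_demultiplex(image, carriers, sigma, order=8):
    """Split one multiplexed exposure into one image per modulation carrier.

    image    : 2D float array, a single camera exposure
    carriers : list of (fy, fx) carrier frequencies in cycles/pixel (assumed known)
    sigma    : low-pass width in cycles/pixel (hypothetical tuning parameter)
    order    : exponent of the super-Gaussian roll-off (assumed reading of the text)
    """
    ny, nx = image.shape
    y, x = np.mgrid[0:ny, 0:nx]
    fy = np.fft.fftfreq(ny)[:, None]
    fx = np.fft.fftfreq(nx)[None, :]
    # Low-pass filter centered on DC, laid out in FFT (unshifted) ordering
    lowpass = np.exp(-((fx**2 + fy**2) / sigma**2) ** (order / 2))
    results = []
    for cfy, cfx in carriers:
        # Lock-in: the complex reference shifts this carrier's peak to DC
        reference = np.exp(-2j * np.pi * (cfy * y + cfx * x))
        spectrum = np.fft.fft2(image * reference)
        # Keep only what now sits around DC, then return to image space
        demodulated = np.fft.ifft2(spectrum * lowpass)
        # Factor 2 recovers the amplitude of the cosine modulation
        results.append(2.0 * np.abs(demodulated))
    return results
```

Each returned array is the local modulation amplitude for one pulse; its absolute scale depends on the modulation depth, and background correction is not included in this sketch.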

3. VERSATILE MONITORING OF HIGH-SPEED EVENTS

To demonstrate the capabilities of this high-speed hybrid imaging system, accelerometry is performed upon the acquired microscopic video recordings of 60 ms long injection events produced by a commercial fuel injector (Bosch EV1 four-hole nozzle running at 5 bar water injection pressure). These transient liquid dynamics are imaged at a camera speed, i.e., burst repetition rate, of 21 kHz (variable C in Fig. 2) and varying burst rates (variable L in Fig. 2). The complete videos, comprising about images, of these and additional injection events are included in


Figure 2. Microscopic imaging of transient fluid dynamics at high speed. (a) Imaging the spray injection event at a camera speed of 21 kHz and a shutter time of 12 μs within which three pulses, with constant inter-pulse time (250 kHz FRAME triplet), are recorded. (b) Non-linear temporal triggering where the first two pulses arrive at the sample with an inter-pulse time of 20 μs (50 kHz), while the last two are incident with an inter-pulse time of 2 μs (500 kHz). At the beginning of the event, 50 kHz suffices to track the fluid’s evolution within the camera exposure, while already at 95 μs ASOI, higher speeds are necessary. The zoom-ins of the USAF 1951 resolution target correspond to the resolution limits in

A prerequisite for accelerometry measurements is high-contrast, blur-free images such that, e.g., the accurate segmentation of structures at varying scales can be performed. The short illumination time in conjunction with the high photon flux offered by the light sources ( and in this case) is able to provide such images by (1) eliminating motion blur despite the relatively long camera exposure and (2) maintaining a uniform intensity profile over the entire image for high-contrast shadows of the event. In addition, the lock-in filtering procedure associated with FRAME suppresses image blur caused by multiple light scattering [35].

A further requirement for measuring velocity vector fields of a transient event (the precursor for acceleration vector fields) is versatility in the time domain. Indeed, for different versions of non-seeded image flow analyses to function efficiently, the distance traveled by the event between two images must be optimized such that the correlation between the spatial structures remains high for the given distance traveled [36,37]. Furthermore, to extract velocity vector fields at two different time scales (one slow [25] and the other fast [24]), one needs to vary the inter-pulse delay such that the time between any pair of pulses is optimized for vector extraction at the given time scale. Figure 2(b) illustrates these capabilities offered by the high-speed FRAME imaging system. The independence of the light sources within the illumination system allows for a varied inter-pulse time (20 μs between the first two and 2 μs between the last two), all at a burst repetition rate set by the camera speed.

The temporal pulse shape of the illumination sources, on the other hand, allows for a maximum burst rate of 19 MHz (Appendix A). Due to the nature of the light sources and the multiplexing methodology (i.e., each light source and its corresponding event information are independent of each other), the image quality does not deteriorate as the burst rate increases. Figure 2(c) shows this trait for a burst rate of 10 MHz, where the image spatial resolution of 29 and 18 lp/mm [inset of Fig. 2(b)] and high image contrast are conserved. On the other hand, imaging at increased burst repetition rates usually results in a reduced pixel number of the high-speed camera, affecting only the field of view. Hence, imaging at higher rates overall (burst and burst repetition rates) does not inherently imply a deteriorated spatial resolution or image quality.

A. High-Speed Tracking of Liquid Dynamics over the Entire Event at Various Spatial Scales

An inherent problem with tracking the acceleration of fast transient events is the requirement of three measurements close enough in time such that a finite difference approximation of the velocity’s time derivative can be accurately extracted. Previous attempts at capturing the data necessary for such an analysis have primarily focused on ballistic imaging techniques using multiple time-gated cameras (ICCDs) [38] or on combining two CCD cameras in PIV mode with polarization-based filtering, resulting in two velocity maps close in time [39]. Although these techniques provide excellent temporal and spatial resolution per acquisition, both ICCDs and interline transfer (double-framed) CCDs often have a restricted frame rate and would be able to extract accelerometry data only at a repetition rate of . The approach presented in this paper overcomes this problem by combining the image multiplexing ability offered by FRAME, i.e., high burst rate, with the high frame rate offered by modern high-speed CMOS cameras. The rate at which instantaneous acceleration vector fields can be extracted is thus dictated by the repetition rate of the CMOS camera, which greatly exceeds that of previously demonstrated approaches.

Estimating the acceleration vector fields from the FRAME triplets is performed by tracking spray features in time using an in-house developed segmentation and template matching post-processing step. The left column of Fig. 3(a) depicts a FRAME triplet with the post-processing segmentation edge of each measurement outlined in green. The segmentation accuracy can be varied with different parameters such as the radius of the low-pass filter used in the lock-in algorithm or the parameters used in the segmentation algorithm such as thresholds, and filling and/or morphological operation parameters.
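The segmentation step can be illustrated with a short NumPy-only sketch of thresholding, morphological closing, hole filling, and edge extraction. This is an illustration rather than the in-house code: the helper names, the default threshold fraction and disc radius, and the assumption that the liquid appears dark against a bright background (as in a shadowgram) are all choices made here for the example.

```python
import numpy as np

def disc(radius):
    """Boolean disc-shaped structuring element."""
    y, x = np.mgrid[-radius:radius + 1, -radius:radius + 1]
    return (x**2 + y**2) <= radius**2

def dilate(mask, selem):
    """Binary dilation with a symmetric structuring element."""
    r = selem.shape[0] // 2
    padded = np.pad(mask, r, constant_values=False)
    out = np.zeros_like(mask)
    for dy, dx in zip(*np.nonzero(selem)):
        out |= padded[dy:dy + mask.shape[0], dx:dx + mask.shape[1]]
    return out

def erode(mask, selem):
    """Binary erosion, written as the dual of dilation."""
    return ~dilate(~mask, selem)

def fill_holes(mask):
    """Fill background regions that are not connected to the image border."""
    outside = np.zeros_like(mask)
    outside[0, :], outside[-1, :] = ~mask[0, :], ~mask[-1, :]
    outside[:, 0], outside[:, -1] = ~mask[:, 0], ~mask[:, -1]
    while True:
        grown = dilate(outside, disc(1)) & ~mask
        if np.array_equal(grown, outside):
            return ~outside
        outside = grown

def segment(image, rel_threshold=0.6, radius=2):
    """Return (mask, edge) for a shadowgram; liquid assumed darker than background."""
    mask = image < rel_threshold * image.max()          # thresholding
    mask = erode(dilate(mask, disc(radius)), disc(radius))  # morphological closing
    mask = fill_holes(mask)
    edge = mask & ~erode(mask, disc(1))                 # boundary pixels of the mask
    return mask, edge
```

Varying `rel_threshold` and `radius` in this sketch mimics the kind of parameter tuning mentioned above for adjusting the segmentation accuracy.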


Figure 3. Extracting 2D accelerometry data from a single camera exposure. (a) Thresholding and morphologically closing results in the green segmentation of the shadowgram. (b) Template matching between two frames results in 2D velocity vector fields (color coded arrows), calculated at the pixel level along the segmentation edge. Here we choose to calculate the vectors every fourth pixel, yielding a vector density of

Templates of a preset size ( pixels) are extracted for, depending on the desired vector density, every 2nd to 10th pixel along the segmentation edge. These templates are then laterally shifted over the subsequent images where the shift that maximizes the cross-correlation is set as the distance traveled by the spatial feature. Based on the lateral shift and the time separation between images, velocity vectors for each template are calculated. In contrast to seeded flow velocimetry where the displacement of seeded particles is monitored with grid-based cross-correlation algorithms, this technique yields a vector field that reveals the structural displacement of features along the entire edge of the liquid structures.
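The template matching described above can be sketched with a brute-force normalized cross-correlation search. The sketch below is illustrative only: the function names, the template half-width `half`, the search range `search`, and the `pixel_size` calibration factor are hypothetical, and the caller is assumed to pick edge pixels far enough from the image border for the template to fit.

```python
import numpy as np

def match_template(img0, img1, center, half=4, search=6):
    """Return the (dy, dx) pixel shift that best maps a template from img0 onto img1.

    The template is the (2*half+1)^2 patch of img0 around `center`; it is compared
    against every equally sized patch of img1 within +/- `search` pixels.
    """
    cy, cx = center
    size = 2 * half + 1
    tpl = img0[cy - half:cy + half + 1, cx - half:cx + half + 1].astype(float)
    tpl = tpl - tpl.mean()
    best_score, best_shift = -np.inf, (0, 0)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y0, x0 = cy + dy - half, cx + dx - half
            if y0 < 0 or x0 < 0:
                continue  # candidate window starts outside the image
            win = img1[y0:y0 + size, x0:x0 + size].astype(float)
            if win.shape != tpl.shape:
                continue  # candidate window runs past the image border
            win = win - win.mean()
            denom = np.sqrt((tpl**2).sum() * (win**2).sum())
            if denom == 0:
                continue  # flat patch, correlation undefined
            score = (tpl * win).sum() / denom
            if score > best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift

def velocity(shift_px, pixel_size, dt):
    """Convert a pixel shift to a velocity vector (same axis order as the shift)."""
    return np.asarray(shift_px, dtype=float) * pixel_size / dt
```

Calling `match_template` for every n-th pixel along the segmentation edge, and converting each shift with `velocity`, would give one velocity vector per template, in the spirit of the procedure described above.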

Through said template matching of the triplet, two velocity vector maps can be extracted from a single exposure [Fig. 3(b)]. Here the velocity vectors are drawn and color-coded w.r.t. their speed, and histograms of their x and y components are also displayed alongside. In the leftmost zoom-in, the highly uniform velocity vectors of the spray front are highlighted, indicating a region of high stability that is moving with the inertial speed of the entire liquid body. In contrast, the rightmost zoom-in of Fig. 3(b) depicts a liquid ligament that is traveling away from the main body, indicating a possible breakup event. In general, however, the statistics of the x histograms show that the spray travels slightly towards the left, while the y histograms indicate a movement downward at an average speed of about 15 m/s.

By pairing vectors from the two velocity vector plots, average acceleration vectors can be extracted over the duration of the pulse train triplet [Fig. 3(c)]. In the case of fluid dynamics, this map can then be directly related to physical quantities such as pressure and viscosity via, e.g., the Navier–Stokes equation [39].
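One way to turn a pair of velocity vectors into an average acceleration is a finite difference between the two interval-averaged velocities, each assigned to the midpoint of its inter-pulse interval. The sketch below assumes this particular scheme and assumes the vectors have already been paired; the function name and arguments are illustrative and not taken from the authors' code.

```python
import numpy as np

def acceleration(v01, v12, dt01, dt12):
    """Finite-difference acceleration from two interval-averaged velocities.

    v01  : velocity between images 0 and 1 (array-like, e.g. [vy, vx])
    v12  : velocity between images 1 and 2, paired with v01
    dt01 : inter-pulse time between images 0 and 1
    dt12 : inter-pulse time between images 1 and 2

    Each velocity is assigned to the midpoint of its interval, so the two
    estimates are separated in time by (dt01 + dt12) / 2.
    """
    v01 = np.asarray(v01, dtype=float)
    v12 = np.asarray(v12, dtype=float)
    return (v12 - v01) / (0.5 * (dt01 + dt12))
```

With this midpoint convention the estimate is exact for constant acceleration even when the two inter-pulse times differ, which is relevant for the non-linear pulse timing of Fig. 2(b).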

As a result of the constant velocity field of the spray front, and its large inertial mass, an expected low acceleration (between zero and ) is seen in the corresponding region [lower zoom-in of Fig. 3(c)]. On the other hand, the large inward pointing acceleration vectors in the upper zoom-in display a quickly changing spray morphology in that region, further confirming the possibility that a primary breakup event is about to occur. In general, however, the acceleration histograms of Fig. 3(c) indicate a mean of zero acceleration in both the x and y directions. Despite this, the stochastic nature of the turbulence experienced by the edges of the liquid body results in a Gaussian distribution of forces around this mean.

B. Maintained Sensitivity despite Largely Varying Event Regimes

Due to the aforementioned marriage among high burst rate, burst repetition rate, and long sequence depth offered by the presented videography technique, this type of accelerometry analysis can be performed for the entirety of the event of interest. In the case of Fig. 3(d), the last image corresponds to camera image number 4400 of a sequence running at a burst rate of 500 kHz and burst repetition rate of 75 kHz. As expected, the liquid body visibly undergoes many different regimes over the course of the entire event [40]: low mean acceleration and velocity 40 μs after the start of injection (ASOI), generally high velocity and increased turbulence in the middle parts (4.8 ms ASOI), and a high degree of breakup activity where air resistance dominates after the nozzle has closed (58.7 ms ASOI). This demonstrates the importance of attaining tracking ability over the entire lifetime of the event to follow the physical cause and effect relationships acting upon the dynamics. For the entire video overlaid with the accelerometry data, refer to

The accuracy of the velocity vectors, extracted using our template matching algorithm, increases as the tracked features undergo large lateral shifts in between consecutive frames. On the other hand, the instabilities and turbulence associated with liquid atomization cause the large, intact, liquid core to disintegrate and break up into smaller, liquid bodies. Such structural deformations, which the algorithm interprets as rigid body motion, become more pronounced as the inter-frame time increases, thus counteracting any gain in accuracy obtained through large lateral shifts.

Another aspect regarding the accuracy of the velocity vectors concerns the large spread in time scales involved in the liquid atomization process. Obtaining an accurate finite difference approximation for both the rapid changes associated with the primary breakup and the motion of the main liquid body requires various inter-image times, which is achievable using FRAME. This aspect is exemplified in Fig. 4, where the varying structural speeds observed within the same exposure require different inter-pulse times to be accurately identified and measured.


Figure 4. Sensitivity of velocimetry to different inter-pulse durations. (a), (b) Two instances from one injection event are analyzed for three different, simultaneously acquired values of inter-pulse delay time (

C. Single Droplet Tracking

The high contrast of the acquired images in conjunction with the marginal loss in image spatial resolution following the low-pass filtering allows for simultaneous segmentation, force tracking, and extraction of quantitative data from features at different spatial scales. Figure 5 reveals the force dynamics acting on a single droplet that has broken off from the main body.


Figure 5. High-speed tracking of air resistance on a single droplet. (a) The direction of the forces acting on a single droplet, depicted by the solid yellow arrow (itself equal to the mean of the dashed arrows), indicates strong air resistance. (b) Despite this, the measured decrease in velocity from 46.7 to 24 m/s (depicted as a black circle) is less than expected from air resistance simulations performed using Stokes’ law and the Schiller–Naumann (SN) correlation. Due to the deformation of the liquid droplet to a more aerodynamic shape (seen in the second velocity image), the correct drag coefficient is 15 times less than that proposed by the SN correlation. See Appendix C.

Modeling of the velocity progression of this single droplet (simplified to a spherical solid drop with a radius of 45.6 μm and an initial speed of 46.7 m/s) with a simple Stokes or Schiller–Naumann (SN) model results in a decrease in velocity to 6 versus 1 m/s, respectively, after 3 μs, significantly lower than the measured value of 24 m/s [blue and red lines of Fig. 5(b) versus the black circle]. However, we see that the droplet does not remain spherical throughout its journey but instead attains a more aerodynamic form after 3 μs [zoom-in of Fig. 5, ], resulting in a reduced drag coefficient. In conjunction with the acceleration vectors pointing in the direction of air resistance, it is possible to explain this inconsistency between model and experiment by taking into account the deformation of the liquid droplet to a more aerodynamic form (SN/15) caused by air resistance [41,42]. See Appendix C for more details.
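For orientation, the two drag laws named above can be written in their common textbook forms, with the SN/15 case expressed as a scale factor on the Schiller–Naumann coefficient. The sketch below only evaluates drag coefficients; it does not integrate the equation of motion and is not tuned to reproduce the velocities quoted above. The air density and viscosity are assumed ambient values, and the function names are illustrative.

```python
RHO_AIR = 1.2     # kg/m^3, assumed ambient air density
MU_AIR = 1.8e-5   # Pa*s, assumed dynamic viscosity of air

def reynolds(speed, radius):
    """Reynolds number of a sphere of given radius moving through air."""
    return RHO_AIR * speed * 2.0 * radius / MU_AIR

def cd_stokes(re):
    """Stokes drag coefficient for a rigid sphere, C_d = 24 / Re."""
    return 24.0 / re

def cd_schiller_naumann(re, scale=1.0):
    """Schiller-Naumann drag coefficient (textbook form, Re below about 1000).

    `scale` is a hypothetical multiplier; scale = 1/15 corresponds to the
    reduced coefficient referred to as SN/15 in the text.
    """
    return scale * 24.0 / re * (1.0 + 0.15 * re**0.687)

# Droplet parameters taken from the text: radius 45.6 um, initial speed 46.7 m/s
re0 = reynolds(46.7, 45.6e-6)
coefficients = {
    "Stokes": cd_stokes(re0),
    "SN": cd_schiller_naumann(re0),
    "SN/15": cd_schiller_naumann(re0, scale=1.0 / 15.0),
}
```

Under these assumed air properties the Schiller–Naumann coefficient exceeds the Stokes value at the initial Reynolds number, while the SN/15 coefficient falls below it, which matches the ordering of the model curves discussed above.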

4. DISCUSSION

The underlying principle of sensor-based high-speed cameras is the ability to shift photoelectrons between electronic components in a structured manner at extremely high speeds. This physical movement of electrons, however, fundamentally limits sequence depth, sensor size, and imaging speed [43], confining these types of technologies to the imaging of either kHz events over a duration of several seconds or MHz events over the duration of microseconds. On the other hand, illumination-based techniques can reach THz imaging speeds, but the fundamental necessity to record the entire sequence on a single sensor within a single exposure usually severely limits their sequence depths and/or spatial resolution. This results in a strict trade-off between imaging speed and temporal length of the image sequence. In this work, we combined illumination-based multiplexed imaging with sensor-based high-speed cameras to perform accelerometry on liquid bodies at video rates significantly faster than previously demonstrated.

The optical hybrid configuration presented herein has several technical advantages over conventional high-speed imaging techniques. First, hybridization of FRAME is not limited to single sensor technology but is compatible with, e.g., rotating mirror cameras (such as Cordin 560 or Brandaris 128), ultrafast framing cameras (Imacon) or ISIS. Second, the independence of the pulse train light sources allows for non-linear temporal sampling of the event. As a result, the time separation between pulses can be tailored to track events ranging from low to high speeds [2,17,44] or events that include both time scales simultaneously [3,4,27]. Moreover, this flexibility allows for the acquisition of suitable inputs to acceleration extraction algorithms such as the one presented herein or in Ref. [36], a task that is, as has been shown, quite sensitive to the time separation between images. Third, although higher frame rates () can be achieved with, e.g., ultrafast framing cameras or double-pulsed PIV systems, the burst repetition rate of such systems, i.e., the rate at which velocity or acceleration data can be measured, is usually in the range of . Hence, the extraction of accelerometry data of rapid transient events has, thus far, been restricted to a single-shot basis [38,39]. In contrast, the system presented herein relies on neither double-frame image technology nor intensified (time-gated) cameras, thereby granting a repetition rate up to six orders of magnitude greater. We take advantage of this boost in frame rate, which enables the near continuous extraction of acceleration data throughout the entire transient event. Finally, since all images are acquired on a single sensor, calibration procedures to achieve pixel-to-pixel overlapping and compensation for different magnifications are not necessary, which greatly facilitates region matching analyses and decreases the uncertainties related to them [45].

Due to the electronic speed limit of CCD and CMOS sensors, a notable amount of development has instead gone into increasing the number of pixels, their sensitivity, and noise characteristics while still maintaining high speeds (e.g., a 100 kfps 2 Mpx sensor was realized in 2017 [46]). This general trend greatly benefits hybridization with multiplexed imaging techniques such as FRAME, as this would allow more dynamic range per multiplexed image, more images per exposure (significantly more than three have been experimentally demonstrated [34]), and higher spatial resolution, enhancing the temporal characteristics of both cameras and FRAME itself. On the other hand, fast illumination technology in the form of high-repetition-rate femtosecond laser sources or burst lasers is becoming more commercially available than ever before, opening up high-repetition-rate THz burst accelerometry of, e.g., chemical reactions [47].

5. CONCLUSION

While the development of double-framed cameras and burst lasers has enabled velocimetry, the ability to measure acceleration has, thus far, been restricted to solutions using multiple camera optical configurations running at low repetition rates. Furthermore, the technological gap restricting simultaneous access to slow kHz and fast MHz–THz processes limits the possibility to efficiently track a large variety of naturally occurring events. Hybridizing illumination-based imaging with high-speed sensor technology overcomes these restrictions, with the added advantage of benefiting from technological advancement in both fields. All in all, the ability to measure properties such as acceleration of fast but long duration events is of fundamental importance to understand and model the forces acting upon physical processes, and we envision the measurement of such quantities to become a major part of future research.

Acknowledgment

The authors thank Prof. Mattias Richter for use of the high-speed camera, and Saeed Derafshzan for fruitful input on velocimetry history and techniques.

APPENDIX A: TEMPORAL RESOLUTION

The temporal resolution of an imaging system should be defined in such a way as to guarantee unique information in each image. To guarantee this, we have previously defined pulse limits that set strict boundaries to where the next pulse is allowed to be placed in time [


Figure 6. 19 MHz temporal resolution. (a) Due to their shape, the pulses from these nanosecond laser sources have a center of mass [red dashed line of inset] separated from the peak. Hence, the pulse limits (yellow dashed lines), within which 76% of the light intensity lies, are much further apart than the FWHM of the peak. Placing three such pulses in a pulse train, such that they adhere to the defined temporal resolution condition, allows for a 19 MHz pulse train. (b) Pulse limits versus FWHM: the asymmetric pulse shape of the lasers used here causes the pulse limits to be much larger than their Gaussian counterparts (red dots versus blue dots of the plot).

Another way to see it is that videography entails a non-delta spike sampling of the time axis of an event with 2D images. Even though active techniques have the ability to overlap pulses in time, according to the Nyquist theorem, one cannot gain much from this approach. To avoid oversampling, we must define pulse limits, within which the resulting image can be seen as a unique sampling point with the integration time being the distance between said pulse limits.

The pulse characteristics of the lasers used herein were measured with a photodiode and a 6 GHz oscilloscope resulting in pulses of the form of the inset in Fig.

In contrast, had these pulses been Gaussian with similar FWHMs, the maximum temporal resolution would have been 104 MHz, highlighting how the sampling integration window of the illuminating pulse can cause significant variation in the temporal resolution, as is appropriate.

APPENDIX B: ACCELERATION EXTRACTION

To extract velocity and in turn acceleration vectors from the acquired images, the image analysis technique of template matching is used. In general, a subarea of an initial image is chosen, dubbed herein as a template. This template is then used to calculate cross-correlations between itself and a second image (within a preset search area). A vector is subsequently drawn from the position of the original template to the point of highest cross-correlation in the second image. For this to function, there are a few necessary steps to perform.

Background artifacts that arise due to non-uniform illumination and the low-pass filtering of Gaussian noise in the FRAME algorithm can interfere with correlation calculations. Therefore, we segment the images to logical maps via thresholding (with values around 50%–70% of the maximum), morphological closing (disc radius of one to five pixels), and hole filling. The edge pixels of the resulting logical maps [indicated in green in Fig.

Template matching is an established three-step approach to extract velocity vectors from image pairs.

This three-step approach is performed for, if desired, each pixel along the edge of segmentation. Hence, the spatial resolution of the vector field, i.e., the density of the extracted vectors, is limited only by the post-segmentation spatial resolution of the image.


Figure 7. Extraction of velocity vectors for each pixel along the segmentation edge. Two examples of where the initial template (green boxes) is scanned within a search area (red dashed boxes) across the subsequent image (outlined in orange). The template is placed at the position of maximum cross-correlation (orange boxes). The velocity vector is then calculated as the distance between the two center points divided by the inter-image time. This process is performed for every pixel along the segmentation edge.


Figure 8. Validation of the velocity and acceleration extraction algorithm. (a) Experimental validation using the known gravitational acceleration acting upon a rigid sphere. The velocities are first extracted, yielding the displayed vector fields and distributions, where the angular distribution (

The raw data input in Fig.

The imaging of the falling rigid sphere in the experiment, on the other hand, does not result in a perfect circle, and hence, the segmentation and template matching algorithm will extract a distribution of velocities [extracted velocities of Fig.

A more in-depth error analysis is also performed by propagating a pixel error in segmentation into an acceleration error. The acceleration is given by

Given , can be expressed as

As for the vectors’ direction, the angular distribution is centered around a value of 0.07° (where a value of zero points directly downwards). Applying the same type of analysis as above shows that the spread of 2.52° corresponds to a rounded pixel error of one, again highlighting the accuracy of the presented process.
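The expressions used in the error analysis above are not reproduced in this text. As a hedged illustration only, the propagation of a pixel error into an acceleration error can be sketched for a finite-difference estimate. The notation below is assumed, not recovered from the source: an edge position $x_i$ in three consecutive images, a constant inter-image time $\Delta t$, and an independent position error $\delta x$ of equal size in each image.

```latex
\begin{align}
  v_{12} &= \frac{x_2 - x_1}{\Delta t}, \qquad
  v_{23} = \frac{x_3 - x_2}{\Delta t}, \\
  a &= \frac{v_{23} - v_{12}}{\Delta t}
     = \frac{x_3 - 2x_2 + x_1}{\Delta t^{2}}, \\
  \delta a &= \sqrt{\sum_{i=1}^{3}
      \left(\frac{\partial a}{\partial x_i}\,\delta x\right)^{2}}
     = \frac{\sqrt{1^{2} + 2^{2} + 1^{2}}}{\Delta t^{2}}\,\delta x
     = \frac{\sqrt{6}\,\delta x}{\Delta t^{2}}.
\end{align}
```

Under these assumptions the acceleration error scales linearly with the pixel error and inversely with the square of the inter-image time; a different frame spacing or correlated errors would change the prefactor.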

APPENDIX C: DRAG ON A SINGLE DROPLET

A droplet’s trajectory can be modeled by solving the equation of motion for a droplet subject to gravity and the drag force:
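The equation itself is not reproduced in this text. A common form, assumed here rather than taken from the source, is m dv/dt = m g − ½ ρ_g C_D A |v| v for a spherical droplet of mass m and cross-sectional area A moving with velocity v through quiescent gas of density ρ_g. The sketch below integrates this form with a midpoint scheme; the constant drag coefficient of 0.47, the fluid densities, the droplet diameter, and the initial velocity are illustrative assumptions, and the function names are hypothetical.

```python
import math


def drag_constant(diameter, rho_liquid=1000.0, rho_gas=1.2, c_d=0.47):
    """k = rho_gas * C_D * A / (2 m) for a sphere, in 1/m."""
    radius = diameter / 2
    mass = rho_liquid * (4.0 / 3.0) * math.pi * radius ** 3
    area = math.pi * radius ** 2
    return 0.5 * rho_gas * c_d * area / mass


def terminal_speed(diameter, g=9.81, **fluid):
    """Speed at which drag balances gravity: g = k * v^2."""
    return math.sqrt(g / drag_constant(diameter, **fluid))


def simulate_droplet(diameter, v0, dt=1e-3, t_end=3.0, g=9.81, **fluid):
    """Integrate the trajectory; y is positive downward.

    Returns a list of (t, x, y, vx, vy) tuples.
    """
    k = drag_constant(diameter, **fluid)

    def accel(vx, vy):
        speed = math.hypot(vx, vy)
        return -k * speed * vx, g - k * speed * vy

    x = y = 0.0
    vx, vy = v0
    trajectory = [(0.0, x, y, vx, vy)]
    steps = int(round(t_end / dt))
    for i in range(1, steps + 1):
        # Midpoint (second-order Runge-Kutta) step.
        ax, ay = accel(vx, vy)
        vx_mid, vy_mid = vx + 0.5 * dt * ax, vy + 0.5 * dt * ay
        ax_mid, ay_mid = accel(vx_mid, vy_mid)
        x, y = x + dt * vx_mid, y + dt * vy_mid
        vx, vy = vx + dt * ax_mid, vy + dt * ay_mid
        trajectory.append((i * dt, x, y, vx, vy))
    return trajectory


# Hypothetical case: a 1 mm water droplet launched horizontally at 2 m/s in air.
trajectory = simulate_droplet(diameter=1e-3, v0=(2.0, 0.0))
v_term = terminal_speed(1e-3)
t_final, x_final, y_final, vx_final, vy_final = trajectory[-1]
```

With these assumed parameters the horizontal velocity decays while the vertical velocity approaches the terminal speed. A Reynolds-number-dependent or deformation-dependent drag coefficient could be substituted in `drag_constant` if a more detailed model is required.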

References

[1] T. Hirata, Z. Miyazaki. High-speed camera imaging for laser ablation process: for further reliable elemental analysis using inductively coupled plasma-mass spectrometry. Anal. Chem., 79, 147-152(2007).

[2] J. E. Field, E. Amer, P. Gren, M. A. Zafar, S. M. Walley. High-speed photographic study of laser damage and ablation. Imaging Sci. J., 63, 119-136(2015).

[3] H. Mikami, C. Lei, N. Nitta, T. Sugimura, T. Ito, Y. Ozeki, K. Goda. High-speed imaging meets single-cell analysis. Chem, 4, 2278-2300(2018).

[4] C. K. Haluska, K. A. Riske, V. Marchi-Artzner, J.-M. Lehn, R. Lipowsky, R. Dimova. Time scales of membrane fusion revealed by direct imaging of vesicle fusion with high temporal resolution. Proc. Natl. Acad. Sci. USA, 103, 15841-15846(2006).

[5] H. Xing, Q. Zhang, C. Braithwaite, B. Pan, J. Zhao. High-speed photography and digital optical measurement techniques for geomaterials: fundamentals and applications. Rock Mech. Rock Eng., 50, 1611-1659(2017).

[6] H. Etoh. A high-speed video camera operating at 4500 fps. J. Inst. Telev. Eng. Jpn., 46, 543-545(1992).

[7] M. Suzuki, Y. Sugama, R. Kuroda, S. Sugawa. Over 100 million frames per second 368 frames global shutter burst CMOS image sensor with pixel-wise trench capacitor memory array. Sensors, 20, 1086(2020).

[8] J. Manin, S. A. Skeen, L. M. Pickett. Performance comparison of state-of-the-art high-speed video cameras for scientific applications. Opt. Eng., 57, 121706(2018).

[9] V. T. S. Dao, N. Ngo, A. Q. Nguyen, K. Morimoto, K. Shimonomura, P. Goetschalckx, L. Haspeslagh, P. De Moor, K. Takehara, T. G. Etoh. An image signal accumulation multi-collection-gate image sensor operating at 25 Mfps with 32 × 32 pixels and 1220 in-pixel frame memory. Sensors, 18, 3112(2018).

[10] O. Shpak, M. Verweij, H. J. Vos, N. de Jong, D. Lohse, M. Versluis. Acoustic droplet vaporization is initiated by superharmonic focusing. Proc. Natl. Acad. Sci. USA, 111, 1697-1702(2014).

[11] K. Kooiman, H. J. Vos, M. Versluis, N. de Jong. Acoustic behavior of microbubbles and implications for drug delivery. Adv. Drug Delivery Rev., 72, 28-48(2014).

[12] I. Beekers, M. Vegter, K. R. Lattwein, F. Mastik, R. Beurskens, A. F. van der Steen, N. de Jong, M. D. Verweij, K. Kooiman. Opening of endothelial cell–cell contacts due to sonoporation. J. Controlled Release, 322, 426-438(2020).

[13] A. Gleason, C. Bolme, H. Lee, B. Nagler, E. Galtier, D. Milathianaki, R. Kraus, J. Eggert, D. Fratanduono, G. Collins, R. Sandberg, W. Yang, W. Mao. Ultrafast visualization of crystallization and grain growth in shock-compressed SiO2. Nat. Commun., 6, 8191(2015).

[14] P. A. Hart, A. Carpenter, L. Claus, D. Damiani, M. Dayton, F.-J. Decker, A. Gleason, P. Heimann, E. Hurd, E. McBride, S. Nelson, M. Sanchez, S. Song, D. Zhu. First X-ray test of the Icarus nanosecond-gated camera. Proc. SPIE, 11038, 110380Q(2019).

[15] F. H. Zhang, S. T. Thoroddsen. Satellite generation during bubble coalescence. Phys. Fluids, 20, 022104(2008).

[16] A. Roth, D. Frantz, W. Chaze, A. Corber, E. Berrocal. High-speed imaging database of water jet disintegration part I: quantitative imaging using liquid laser-induced fluorescence. Int. J. Multiph. Flow, 145, 103641(2021).

[17] M. Alsved, A. Matamis, R. Bohlin, M. Richter, P.-E. Bengtsson, C.-J. Fraenkel, P. Medstrand, J. Löndahl. Exhaled respiratory particles during singing and talking. Aerosol Sci. Technol., 54, 1245-1248(2020).

[18] M. Versluis. High-speed imaging in fluids. Exp. Fluids, 54, 1458(2013).

[19] C. T. Chin, C. Lancée, J. Borsboom, F. Mastik, M. E. Frijlink, N. de Jong, M. Versluis, D. Lohse. Brandaris 128: a digital 25 million frames per second camera with 128 highly sensitive frames. Rev. Sci. Instrum., 74, 5026-5034(2003).

[20] X. Chen, J. Wang, M. Versluis, N. de Jong, F. S. Villanueva. Ultra-fast bright field and fluorescence imaging of the dynamics of micrometer-sized objects. Rev. Sci. Instrum., 84, 063701(2013).

[21] J. Liang, L. V. Wang. Single-shot ultrafast optical imaging. Optica, 5, 1113-1127(2018).

[22] V. Kornienko, E. Kristensson, A. Ehn, A. Fourriere, E. Berrocal. Beyond MHz image recordings using LEDs and the FRAME concept. Sci. Rep., 10, 16650(2020).

[23] K. Maassen, F. Poursadegh, C. Genzale. Spectral microscopy imaging system for high-resolution and high-speed imaging of fuel sprays. J. Eng. Gas Turbine Power, 142, 091004(2020).

[24] D. Sedarsky, S. Idlahcen, J.-B. Blaisot, C. Rozé. Velocity measurements in the near field of a diesel fuel injector by ultrafast imagery. Exp. Fluids, 54, 1-12(2013).

[25] L. Yin, M. Lundgren, Z. Wang, P. Stamatoglou, M. Richter, Ö. Andersson, P. Tunestål. High efficient internal combustion engine using partially premixed combustion with multiple injections. Appl. Energy, 233–234, 516-523(2019).

[26] S. Li, D. Sanned, J. Huang, E. Berrocal, W. Cai, M. Aldén, M. Richter, Z. Li. Stereoscopic high-speed imaging of iron microexplosions and nanoparticle-release. Opt. Express, 29, 34465-34476(2021).

[27] Y. Tang, C. Kong, Y. Zong, S. Li, J. Zhuo, Q. Yao. Combustion of aluminum nanoparticle agglomerates: from mild oxidation to microexplosion. Proc. Combust. Inst., 36, 2325-2332(2017).

[28] M. Uddi, N. Jiang, E. Mintusov, I. V. Adamovich, W. R. Lempert. Atomic oxygen measurements in air and air/fuel nanosecond pulse discharges by two photon laser induced fluorescence. Proc. Combust. Inst., 32, 929-936(2009).

[29] G. D. Stancu, F. Kaddouri, D. A. Lacoste, C. O. Laux. Atmospheric pressure plasma diagnostics by OES, CRDS and TALIF. J. Phys. D, 43, 124002(2010).

[30] S. Wang, J. Kang, Z. Guo, T. Lee, X. Zhang, Q. Wang, C. Deng, J. Mi. In situ high speed imaging study and modelling of the fatigue fragmentation of dendritic structures in ultrasonic fields. Acta Mater., 165, 388-397(2019).

[31] A. van der Bos, M.-J. van der Meulen, T. Driessen, M. van den Berg, H. Reinten, H. Wijshoff, M. Versluis, D. Lohse. Velocity profile inside piezoacoustic inkjet droplets in flight: comparison between experiment and numerical simulation. Phys. Rev. Appl., 1, 014004(2014).

[32] J. J. Philo, M. D. Frederick, C. D. Slabaugh. 100 kHz PIV in a liquid-fueled gas turbine swirl combustor at 1 MPa. Proc. Combust. Inst., 38, 1571-1578(2021).

[33] A. Ehn, J. Bood, Z. Li, E. Berrocal, M. Aldén, E. Kristensson. FRAME: femtosecond videography for atomic and molecular dynamics. Light Sci. Appl., 6, e17045(2017).

[34] S. Ek, V. Kornienko, E. Kristensson. Long sequence single-exposure videography using spatially modulated illumination. Sci. Rep., 10, 18920(2020).

[35] Y. N. Mishra, E. Kristensson, M. Koegl, J. Jonsson, L. Zigan, E. Berrocal. Comparison between two-phase and one-phase SLIPI for instantaneous imaging of transient sprays. Exp. Fluids, 58, 110(2017).

[36] D. Sedarsky, J. Gord, C. Carter, T. Meyer, M. Linne. Fast-framing ballistic imaging of velocity in an aerated spray. Opt. Lett., 34, 2748-2750(2009).

[37] J. Fielding, M. B. Long, G. Fielding, M. Komiyama. Systematic errors in optical-flow velocimetry for turbulent flows and flames. Appl. Opt., 40, 757-764(2001).

[38] D. Sedarsky, M. Rahm, M. Linne. Visualization of acceleration in multiphase fluid interactions. Opt. Lett., 41, 1404-1407(2016).

[39] K. Christensen, R. Adrian. Measurement of instantaneous Eulerian acceleration fields by particle image accelerometry: method and accuracy. Exp. Fluids, 33, 759-769(2002).

[40] J. Eggers, E. Villermaux. Physics of liquid jets. Rep. Prog. Phys., 71, 036601(2008).

[41] C. Shao, K. Luo, J. Fan. Detailed numerical simulation of unsteady drag coefficient of deformable droplet. Chem. Eng. J., 308, 619-631(2017).

[42] I. Silverman, W. Sirignano. Multi-droplet interaction effects in dense sprays. Int. J. Multiph. Flow, 20, 99-116(1994).

[43] T. Etoh, A. Nguyen, Y. Kamakura, K. Shimonomura, T. Le, N. Mori. The theoretical highest frame rate of silicon image sensors. Sensors, 17, 483(2017).

[44] J. E. Chomas, P. A. Dayton, D. May, J. Allen, A. Klibanov, K. Ferrara. Optical observation of contrast agent destruction. Appl. Phys. Lett., 77, 1056-1058(2000).

[45] R. Adrian. Twenty years of particle image velocimetry. Exp. Fluids, 39, 159-169(2005).

[46] T. Kondo, Y. Takemoto, N. Takazawa, M. Tsukimura, H. Saito, H. Kato, J. Aoki, S. Suzuki, Y. Gomi, S. Matsuda, Y. Tadaki. A 3D stacked global-shutter image sensor with pixel-level interconnection technology for high-speed image capturing. Proc. SPIE, 10328, 1032804(2017).

[47] A. H. Zewail. Femtochemistry: atomic-scale dynamics of the chemical bond. J. Phys. Chem. A, 104, 5660-5694(2000).
